
Computer

RESURRECTION

The Journal of the Computer Conservation Society

ISSN 0958-7403

|

Number 100 |

Winter 2022/23 |

Contents

| Society Activity | |

| News Round-Up | |

| Queries and Notes | |

| Chair’s Annual Report | Doron Swade |

| Resurrection – 100 Not Out | Dik Leatherdale |

| Simulation Modelling of Historical Computers[1] | Roland Ibbett & David Dolman |

| Data processing in the 1960s: The Commercial Union’s Exeter Computer Centre | Simon Lavington |

| Obituary – Kathleen Booth | Roger Johnson |

| Review – The History of Computing: A Very Short Introduction | Martin Campbell-Kelly |

| 50 Years Ago .... From the Pages of Computer Weekly | Brian Aldous |

| Forthcoming Events | |

| Committee of the Society | |

| Aims and Objectives |

Society Activity

|

EDSAC — Andrew Herbert EDSAC commissioning continues to make progress. Many of the problems that have plagued the Main Control, Addressing Coincidence and Order Decoding subsystems have been overcome and we have seen extended periods of the machine apparently operating correctly. We are still finding some problems with the tank addressing system, but these too are being removed one by one. We have also commissioned the operator control chassis, which now gives us the ability to single-step through test programs and brings us closer to how the original machine was used than our interim ad hoc controls did. The next steps are to test the arithmetic orders thoroughly for correct operation and results, and to commission the transfer unit. This will allow programs to write to store and also enable calculation with short (17 bit) as well as long (35 bit) numbers. On a longer timescale remain:

A parallel development is the creation of a system for monitoring EDSAC to provide a means of detecting, analysing and hopefully locating machine faults. This is based on a microprocessor system that can monitor and capture individual signals, sending them back to a central station for analysis. These “EDLA” units have been designed by Jeremy Bentham. A new volunteer, Brian Knight, has started work on the central station, using a Raspberry Pi, and Peter Linington is drawing up the overall system architecture. We are also reawakening a project to construct a photoelectric paper tape reader, as was fitted to the original at Cambridge University when paper tape input of programs and data became a performance bottleneck. Overall we are finding EDSAC surprisingly reliable. To a significant degree this is due to a lot of hard work during the commissioning phase improving and tuning circuits to achieve better margins on critical signals. We do suffer valve failures from time to time, which is unsurprising as many have been in operation now for nearly 10 years. With experience we are getting better at pinpointing such failures. Hopefully as we develop the monitoring system, we will be able to do even better. We have found that some of these valve failures then cause consequential damage elsewhere – for example burning out resistors due to high current loadings. This can be difficult to track down. The Chinese B9G sockets we purchased, given the dearth of originals, also remain a tiresome cause of intermittent, often vibration-sensitive failures. If there are CCS members who would like to be involved in EDSAC commissioning the team would be very pleased to welcome them, especially those with electronics experience. Please contact me in the first instance. |

|

ICL 2966 — Delwyn Holroyd One of the 7181 VDUs developed a fault, although it has actually turned out to be two. The symptom was no vertical deflection on the display. Some investigation revealed the -15V power supply measuring about -1V. It was current limiting due to a short circuit capacitor on the character ROM board, where the supply is used to provide -9V for the ROMs. The other use for this supply is on the scan board, and the vertical scan returned as expected after replacing the faulty capacitor. However the sub-raster, or jiggle scan, did not. On these displays the characters are drawn vertically with a separate high frequency scan. A burnt out resistor was found on the scan board in the relevant circuit, but the replacement immediately started smoking! Unfortunately it appears to be a transformer fault, but this is preferable to the output transistors. These failed on the only other scan board we possess, and have so far proven impossible to replace. They carry only a house part code, so there is no clue to the characteristics. We’ve tried many different replacement types, but none have given a satisfactory character shape. The repair will most likely involve transferring the working output transistors to the otherwise good scan board. It isn’t clear how the power supply fault could have caused the scan board transformer to fail, or vice-versa, so this is also a mystery. |

|

Turing-Welchman Bombe — John Harper The bombe has been running reliably in the last few months and has only had one small worn part replaced. As things are almost back to normal after the pandemic it is now time to report that the team of demonstrators has done extremely well over this period. Demonstrations have been possible almost every day that TNMoC has been open. At the beginning of the lockdown members of our team erected acrylic screens all around the machine in the hope that there would be no infections passed between demonstrators and the visiting public. From what we know this was successful. Our demonstrator team is to be congratulated. |

|

Software — David Holdsworth Server sw-pres.computerconservationsociety.org I am in the process of rationalising material on this server. It currently resembles a construction site, but it is evolving into a world where everything is on this server, and external links within downloadable files no longer point to my two other servers. At the end of the exercise, all the material that I have assembled and produced will be on the one flash drive, which I will then copy. All the links within the server will be relative, so that the copy flash drive can be mounted in any machine and run. It may be necessary to recompile all the C programs, for which a command script will be provided.

Free-standing Webserver Emulation System In many ways this is a starting point for the above server system. As requested in September, I have had help from Dik Leatherdale on issues of portability. This has revealed that offering binary programs for download onto Windows 11, and probably also Windows 10, tends to excite the anti-malware systems, which then prove less than co-operative. For UNIX-like systems belonging to the sort of person interested in software, I have assumed that one can usually rely on there being a C compiler, normally gcc. This problem obviously has solutions. Otherwise nobody would ever be able to install anything. I suspect that there are many solutions, some of which involve paying money to trusted (self-appointed) third parties, though my ambition is that an end user would be able to have their own anti-malware software scan the downloaded stuff and pronounce it clean, after which it would work without let or hindrance. The snag is that things work OK on my own systems where they were created. I only have one Windows machine. At present the “free-standing” system is not quite autonomous. Although the emulations run entirely on the end user’s machine, the system still makes calls to the sw-pres server for images of manual pages and original source text. This material is very bulky. If it were to be incorporated into the downloaded system, it might make the system unattractively large. Of course, I do not own the copyright in this material. I am not sure whether my HTML versions, created by use of OCR and manual editing, count as new works created by me. |

|

Elliott 803, 903 & 920M — Terry Froggatt The TNMoC Elliott 803 is going well at the moment. Peter Onion mentions that he has been working on how to render pictures with the plotter. Also a version of the two Algol Compiler tapes labelled “803 A504 Issue 1, 17/7/79” has come to light. He is not sure where/when he acquired these tapes, and 1979 seems very late for them to have been an official release. They have been transferred to disc using the 803’s Paperless Tape Station for investigation on an emulator. So far it looks like a version of 803 A104 (the compiler for five-hole programme tapes) that has been modified to use a seven-bit code, but the output it produces (presumably error messages) doesn’t seem to match any of the known seven-bit codes. The next step is to examine the instruction trace from an emulator run, and to try to figure out how it uses the values from a 128-word lookup table that is referenced in the subroutine that has replaced the normal “060:710” instructions to read a character from tape. Peter is being helped by Bill Purvis.

Despite the previously-reported intermittent faults, the TNMoC 903 “Olly” also appears to have been behaving recently. In contrast, my own larger 903 has failed recently, losing one word of the store in every 64. This is almost certainly due to corrosion inside a store connector, on a pin adjacent to a pin which I repaired two years ago. A proper repair (with a new connector) will involve 100 solder joints. Peter Williamson has traced the failure of the TNMoC 903 engineer’s display panel to one of the modules in its power supply and has a replacement on order. We are lucky here: originally the voltage for the neons was derived from the +/-six volt rails by an internal inverter, which might be hard to repair, but this has been replaced using relatively modern mains-powered modules. My conjecture in the previous Resurrection, regarding how the microcode in the 920M might be implemented, proved to be correct. I have now located all of the gates which implement it, and I am now close to understanding all of the gates on the register and control decks. (None of which will help me fix the store problems, of course). I’ve also found signs of evolution. On most 903s, a bistable has been added to turn initial instructions on & off. On the 920M (at position E47), it looks as though a standard 564 dual four-input Nand module has been replaced by a one-off 509 module which adds the bistable. The clue is that there is a now-isolated backplane link between two of the pins: with the general-purpose 564 this was needed between the two gates, but with the special-purpose 509 these pins have been linked internally. |

|

ICT/ICL 1900 — Delwyn Holroyd, David Wilcox, Brian Spoor, Bill Gallagher Bill continues to work on the 7930 scanner implementation, which has been modified to require proper sync run-in and SYN sequences. Currently problems are being experienced getting the 7020 bulk-read facilities to work (for Card Reader and Tape Reader). David is still working on the 7210 IPB. Both the exec mode (S210) and object mode (N210) diagnostics will run to a certain extent before hitting unexpected problems. David has decompiled N210 into source and is now adding diagnostics in the hope of finding where the emulation is going wrong. Brian has already received a support request following publication of the George 3 Executive Emulator article in edition #295 of “Linux Format”! (see linuxformat.com/linux-format-295.html). John Harper recently donated a large cache of 1900 manuals and drawings to the team, which Bill is working his way through scanning. A lot of the information is new to us, and will no doubt prove invaluable in future emulation work. Many thanks to John, and to Bill for the scanning. |

|

IBM Museum — Peter Short Current Activities Over the last few weeks we have been spending much of our regular Thursdays sorting through the various back office rooms, some of which have been undisturbed for years. We were able to dispose of a quantity of flat screen PC monitors and other hardware of which we already hold at least two examples. Another room full of posters, pictures, photographs and other miscellaneous items has also been cleared and tidied. There is much still to do, but we do have more storage space now for hardware that has otherwise been living on the floor. Complementary Bletchley Park Exhibition With the successful launch of Bletchley Park’s new exhibition “The Intelligence Factory” incorporating our 080 card sorter and a hand punch, we are in the process of preparing a complementary display based around a 077 card collator and 416 tabulator. Many 405 tabulators, outwardly very similar to our 416, were used in Bletchley Park, as well as 077 collators, other ‘big iron’ punched card machines and a multitude of card punches. The Autumn edition of the Bletchley Park magazine, Ultra, includes an article co-authored by Dr Thomas Cheetham of Bletchley Park and Peter Coghlan, one of our IBM Hursley Museum curators, which describes how Hollerith and IBM’s punch card technology, dating back to Victorian times, became a vital part of Britain’s codebreaking machine. Alongside an overview of the processes and unique innovations needed to break down message data into usable information, the article also includes insights from some of the machine operators and analysts who worked tirelessly to punch cards, organise data flows and feed the ever-hungry machines. |

|

SSEM Replica — Chris Burton Volunteers continue to inform the public several days per week with a lot of school visits recently. The replica machine has intermittent problems which clear themselves up then return on another day. It is symptomatic of one or more suspect connections or components not yet traced. |

News Round-Up

In Resurrection 99 we mentioned that the LEO Society has published a new edition of LEO Remembered which is reviewed at ccsoc.org/leo0.htm. Copies of the book are available for £8 + P&P by emailing LEOremembered@leo-computers.org.uk with your address and the number of copies wanted.

101010101 Apologies for a major typo in the paper version of Resurrection 99. Missing from the bottom of page 10 are the words “competent engineer would have few problems grappling with this straightforward”.

101010101 At ccsoc/bru10.htm may be found Herbert Bruderer’s latest opus on the rather dismal history of an attempt to create an international computation centre in Europe after the Second World War.

101010101 We have lost a few notable computer people in recent weeks –

101010101 We recently offered our remaining stocks of early editions of Resurrection to readers to fill in gaps in their collections. No charge was made but it was suggested that, if recipients so wished they could make donations to the CCS Restoration Fund. Recipients responded most generously in this way, and we thank them all for that. We are looking into the possibility of offering some later editions. More news when we have some. |

CCS Website Information

The Society has its own website, which is located at www.computerconservationsociety.org. It contains news items, details of forthcoming events, and also electronic copies of all past issues of Resurrection, in both HTML and PDF formats, which can be downloaded for printing. At www.computerconservationsociety.org/software/software-index.htm can be found emulators for historic machines together with associated software and related documents, all of which may be downloaded. |

Queries and Notes

Computer dating in the 1960/70s

John Dunn at TNMoC, on behalf of a French researcher, asks whether anybody has any information about Lowndes Ajax in Croydon and Bedford Computer Services, specifically their involvement in computer dating. By coincidence, the latter appears to have been run by the Society’s co-founder, the late Tony Sale. Beyond that we have yet to find any information. Please contact johnhouston.dunn@gmail.com if you can help.

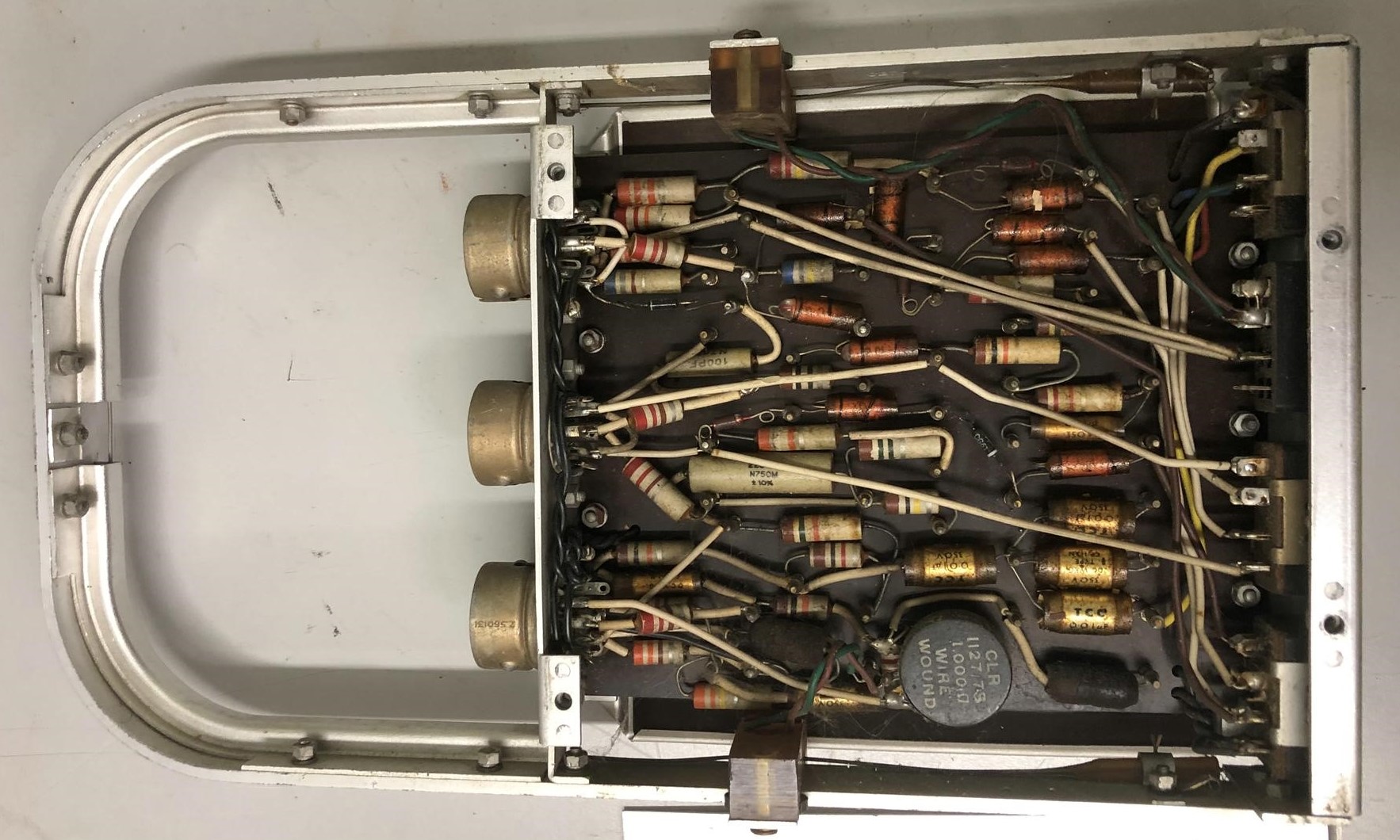

Elliott 40x Circuit Module

The IBM Museum at Hursley is having a tidy-up and has offered a number of non-IBM items to anybody who may be interested. In particular this plug-in module labelled “AN1” which includes a magnetostrictive delay line. It has now found a home at TNMoC but it has also generated much correspondence culminating in Delwyn Holroyd positively identifying it as being from an Elliott 405. If anybody has any more information John Dunn (see above) is the point of contact. A list of the remaining items for disposal can be found at ccsoc.org/ibm0.pdf. Contact Peter Short at peters@slx-online.biz if you can provide a good home for anything. |

Contact details

Readers wishing to contact the Editor may do so by email to

Members who move house or change email address should go to

Queries about all other CCS matters should be addressed to the Secretary, Rachel Burnett at secretary@computerconservationsociety.org, or by post to 80 Broom Park, Teddington, TW11 9RR. |

Chair’s Annual Report

Doron Swade

The Society, I am pleased to report, continues to thrive and is in rude good health. The London Meetings Programme has featured and hosted nine events since our last AGM, one a month excluding the months of the summer recess. The hybrid format of lectures and presentations, introduced during the pandemic lockdowns, allows virtual as well as live attendance, something we now take for granted but which was quite novel a year ago. This has continued with great success. The sustained high attendances are a tribute to Roger Johnson, our London Meetings Secretary, who magically attracts and prevails on eminent, expert and lively speakers to participate. The technical aspects of the hybrid platform are non-trivial and that it functions in the near-seamless way it does is in no small measure due to the enabling team - Bill Barksfield, Delwyn Holroyd, and ready support from Dik Leatherdale, Rod Brown and Dan Hayton together with BCS technical staff. The Society’s quarterly publication, Resurrection, guided and crafted by Dik Leatherdale, its editor, has not missed a beat despite the turbulence and disruption to domestic and world events. The first issue, Volume 1 No. 1, appeared in May 1990. The landmark 100th issue is scheduled for Winter 2022. Resurrection is a unique chronicle of computing history, especially British computer history, and a century not-out is deserving of more than a ripple of polite applause from the stands. The Society provides the reporting structure for 17 projects and related restoration initiatives. The Society is an information hub for regular progress reports on the challenges, progress and successes of a rich variety of restoration activity, as well as being a source of expertise, and material support for these programmes.
To the 17 projects in the suite, we have recently added another: the recreation of the Delilah voice encryption device for advanced speech security proposed by Alan Turing in his report of 6th June 1944. John Harper, who led the Bombe rebuild project, has led the Delilah project for some years, and Delilah has been added to the Society’s project portfolio. Delilah is not a computer and its inclusion in the Society’s project portfolio has stimulated ongoing discussion about the scope of the Society’s remit as well as historical connections between cryptanalysis and computing. We welcome this project into the fold. A highlight during the summer recess was the IEEE Milestone event in Manchester in June. Two landmark awards were made for major developments in computer history that took place in Manchester: the Manchester ‘Baby’, and the Atlas Computer. The plaques were unveiled on 21st June, the 74th anniversary of the first program run by the ‘Baby’ – acknowledged as the first successfully run stored program. The Society is pleased to have been amongst the bodies that sponsored the event. All credit and thanks to Simon Lavington, Roland Ibbett, Jim Miles, and Rod Muttram for the substantial work involved in articulating the merits of the Manchester achievements, their cogent advocacy for appropriate recognition, and for the organisational effort in staging so successful an event. Thanks to their efforts the plaques are a permanent testament to a seminal piece of history. The Society is approaching its 33rd year since its founding and we have turned attention to preserving records of the Society’s early history. Under Martin Campbell-Kelly’s guidance we are archiving core documents including publications, operational records of proceedings and of the Society’s governance. Video recordings of the lecture series going back to the Society’s founding have been located, brought together, and are now part of a programme of digitisation for archiving.
Huge thanks to Kevin Murrell, Dan Hayton, Dik Leatherdale, and others who have been active in this time-critical programme. We have made substantial progress in proposals to restructure the management of the membership of the Society. A subcommittee has conferred and devised a structure that will allow us to better service the membership by providing improved access to members records in full compliance with Data Protection regulation. The draft proposals have received initial approval from BCS and we will be taking this forward in this year’s programme. Special thanks to Rachel Burnett, our unfailingly industrious committee Secretary, for being the source of invaluable legal advice and guidance, and to Arthur Dransfield, our Treasurer, for the total command of our financial affairs that informs his sure counsel. My predecessor as Chair was David Morriss. Before taking up the Chair I shared with David my concern about the burdens of so active a programme that lay in store. He said that the Committee’s office bearers were so capable, qualified, willing, and creative, that I had no need for concern. After a year working more closely with the Committee, I see the wisdom of David’s reassurances. Thank you to the Committee, one and all. I look forward to what we will do together this coming year. |

Resurrection – 100 Not Out

Dik Leatherdale

I was going to start this piece with a cricketing metaphor. The batsman (or batter as we must now say) waving his bat, acknowledging the light applause from the club house. The score sheet, showing also the contribution of the opening batsman (whoops). But your chairman has beaten me to it in his annual report. Great minds? Or perhaps it was obvious. I’ve been looking at some of the early editions of Resurrection and the thing that stands out is that we seemed to be mainly interested in physical objects. With some exceptions (Martin Campbell-Kelly’s extract from his ICL history stands out in edition one) we were very much interested in the conservation of computers – not unreasonable given the Society’s name. But over time we broadened the scope of our subject matter. We became interested in software, the history of the industry, and the stories behind individual organisations. This edition is proof of that change. The change which has occurred in these pages reflects the gradual change to the Society itself. While our interest in conserving old computers (and in building replicas of old computers) remains undiminished, the Society has developed into a broadly-based historical group. We have not changed our name. What would be the point? But we have developed our purpose and are none the worse for that. One thing which hasn’t changed much is the era which we study. We tend not to have much interest in anything post-1970 or thereabouts. Of course, the early years were a time of rapid change and of a huge variety of approaches to computing, some of which succeeded and some disappeared without trace. But we should, perhaps, move on and broaden our scope in a different dimension. But Resurrection would be a poor thing if I had to write it all myself. So, this evening please raise a glass and toast all those hundreds of authors who have contributed to Resurrection over the years.
Raise a glass too to those whose work is so vital behind the scenes – Mandy Bauer and Florence Leroy at BCS who are responsible for ensuring that the paper copy is produced. Chris Burton who produced html versions of more than 50 editions and who managed the website at Manchester University where we used to publish online editions of Resurrection. The late Hamish Carmichael whose proofreading ensured that the text was as error-free as possible. BCS which pays for the printed edition. But most of all, please raise your glass to Nick Enticknap, my predecessor as editor who still serves as proof reader and who protects you all from my poor grip of the conventions of written English. Thanks to all of them from me and I hope from you. |

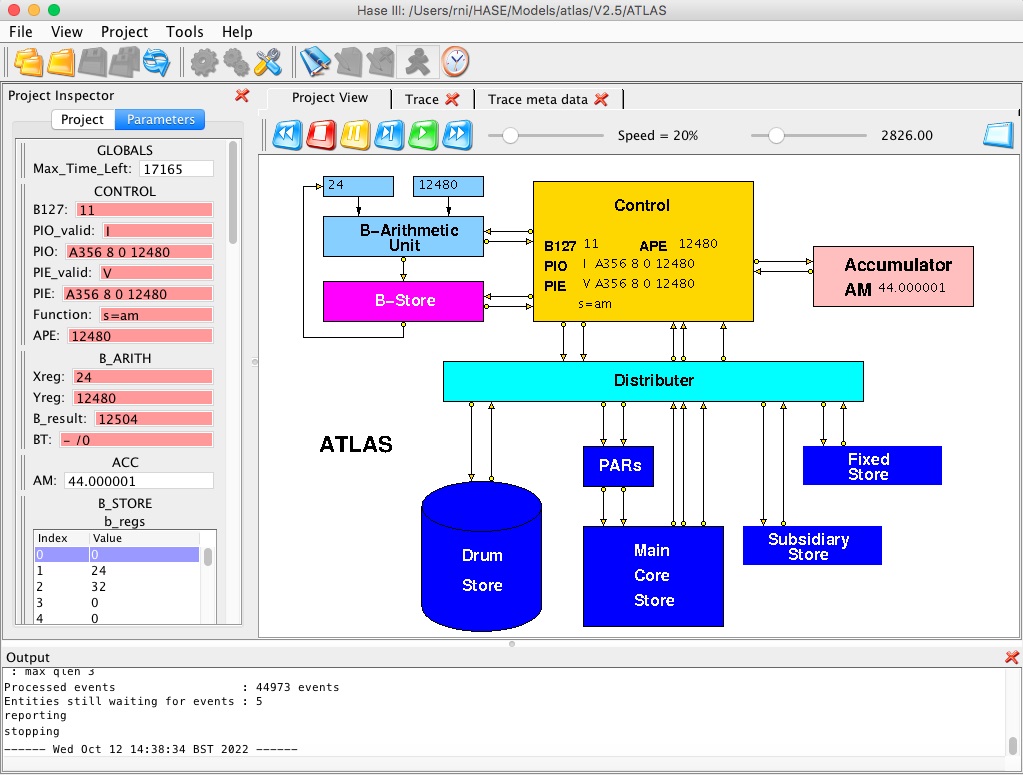

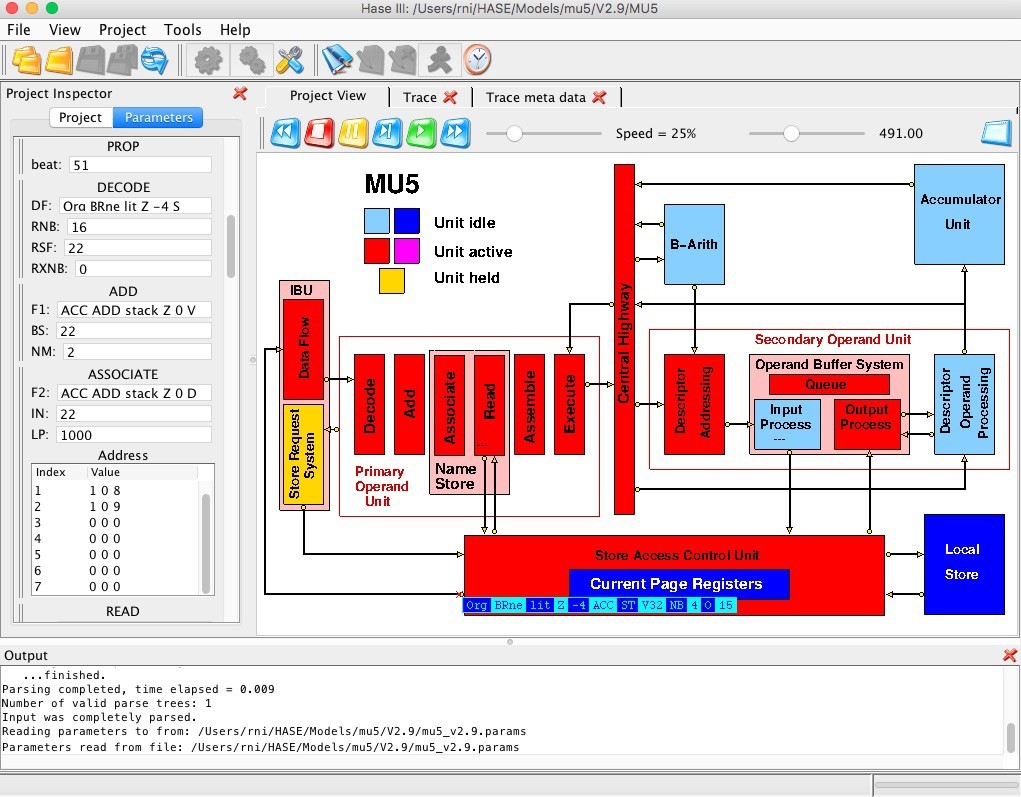

Simulation Modelling of Historical Computers

Roland Ibbett & David Dolman

In the early days of computing, when all computers were physically large devices, students taking computing degree courses could be shown which part of the computer was which and could see flashing display lights showing what was going on inside the machine. With the advent of the microprocessor, this form of human-computer interaction became largely a thing of the past. Around the same time, however, bit-mapped graphical display devices appeared and these could be used to demonstrate the inner workings of computers via animation of on-screen models. HASE, a Hierarchical computer Architecture design and Simulation Environment, was developed at the University of Edinburgh with this purpose in mind. HASE simulation models of a variety of computer architectures and architectural components have been created, some for research investigations of large-scale computing systems and others for use as teaching and learning resources in lectures, for student self-learning or for virtual laboratory experiments. The HASE website (www.icsa.inf.ed.ac.uk/research/groups/hase/) provides access to the models and to the Java code for HASE itself. Each model has its own supporting webpages, which describe the system being modelled as well as the model itself, and from which the source files for each model can be downloaded. These files can be used as inputs to HASE, which has options to load a project, to compile a simulation executable and to run the simulation. Running a simulation produces a trace file which can then be used to animate the on-screen display of the model to show data movements, parameter value updates, state changes, etc. Models of five historically significant computers have been created by one of the authors (RI): Atlas, MU5, the CDC 6600, the Cray-1 and, most recently, the Manchester ‘Baby’ computer.
Space precludes describing all of them here, so the interested reader is referred to the HASE website for details of the Cray-1 model. The models are intended to demonstrate the principles of operation of the computers they represent, rather than to be able to execute real programs, so they do not attempt to reproduce all of the more esoteric details of the real machines. They do however try to emulate, as far as possible, the organisation and design features of the corresponding hardware. This has led to further development and enhancement (by DD) of HASE itself.

The HASE Simulation Environment

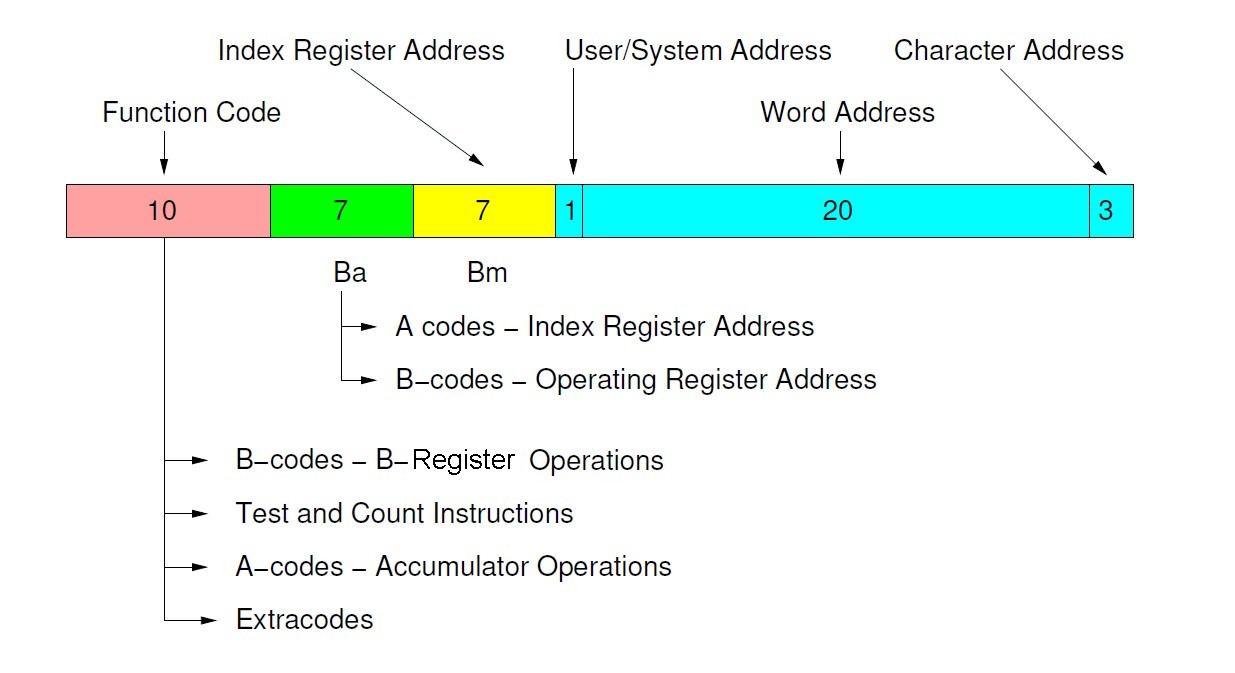

Figure 1 shows a screen image of the HASE Graphical User Interface. The icons in the top row allow the user to load a model, compile it, run the simulation code thus created and to load the trace file produced by running a simulation back into the model for animation. The main Project View pane shows the screen image of the model itself, in this case the Atlas model. The Project Inspector pane displays the values of the parameters defined for the model, while the Output pane displays information being reported back to the user during the various phases of activity involved in a simulation experiment. Each of the blocks in the image represents an entity within the model: HASE uses an Entity Description Language file (project.edl) and an Entity Layout File (project.edf) to specify an architecture. A project.edl file contains five sections: PREAMBLE, PARAMLIB, GLOBALS, ENTITYLIB and STRUCTURE. The PREAMBLE section contains the name of the project, the name of the author(s), the version number and a description. The PARAMLIB (Parameter Library) is used to create the type definitions of parameters used by the project. HASE provides a set of built-in types: bool, char, integer, unsigned integer, long, floating-point, string and range, from which more complex types can be created. These include ARRAY (array parameter type), ENUM (enumerated type parameter) and STRUCT (structure parameter), all of which are similar to those found in C and C++. In addition, HASE provides an ARRAYI type, which allows individual instructions within an array of instructions to be labelled, a LINK type, used when creating instances of entity ports and links between them, and an INSTR type, which can be used to define the instruction set of a processor. The INSTR type allows instructions to be grouped together, a useful feature for decoding purposes. The ENTITYLIB section contains the definitions of the entities. 
Each entity definition includes a type name, a textual description, a list of states, a list of parameters and a list of ports. The STRUCTURE section defines the architecture by (a) creating instances of the entities and assigning instance names to each of them, (b) creating links between entity ports. The GLOBALS section contains parameters that are accessible by all entities in the model. Using the data in the project.edl file, HASE creates a library of parameter types from which parameter instances (variables) can be created and a library of components from which an architecture can be constructed by linking the defined components together in the desired manner. The screen image of the project is defined by the project.edf file which contains screen position information about the displayed entities, their ports and parameters, and the name of the appropriate bitmap icon for each of an entity’s possible states. The icons are contained in a separate bitmaps subdirectory. The behaviour of the entities is coded in Hase++, a discrete event simulation engine with a programming interface similar to that of Sim++, but implemented using C++ and threads. It includes a set of library routines to provide for process oriented discrete event simulation and a run-time system for multi-threading many objects in parallel and keeping track of simulation time. For each entity there is a separate file (entity.hase) containing its behavioural simulation code. There is also an option to include a global fns file of user defined functions available to all entities. The HASE GUI and the HASE model compiler are written in Java. The model compiler generates C++ code which is then compiled using the C++ compiler native to the system on which HASE is being run. This is linked against the HASE Runtime (written in C++) to produce the simulation executable. HASE is supported on OSX, Windows 7/8/10 and Linux. 
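The process-oriented discrete event simulation described above can be illustrated with a minimal sketch. It is written in Python rather than Hase++, and every name in it (`Simulator`, `schedule`, `processor_request`, `memory_reply`, the entity and state names) is hypothetical and not part of the HASE or Hase++ interface. It shows only the general technique: a time-ordered event queue, entities whose actions may schedule further events, and a trace of state changes of the kind that could later drive an animation.

```python
import heapq


class Simulator:
    """Minimal event queue; not the Hase++ API, only the general idea."""

    def __init__(self):
        self.now = 0        # current simulation time
        self._queue = []    # heap of (time, sequence, entity, action)
        self._seq = 0       # tie-breaker so equal times keep insertion order
        self.trace = []     # (time, entity, new state) records

    def schedule(self, delay, entity, action):
        """Ask for `action` to run on behalf of `entity` after `delay` units."""
        heapq.heappush(self._queue, (self.now + delay, self._seq, entity, action))
        self._seq += 1

    def run(self):
        """Process events in time order and return the accumulated trace."""
        while self._queue:
            self.now, _, entity, action = heapq.heappop(self._queue)
            state = action(self)  # an action returns the entity's new state
            self.trace.append((self.now, entity, state))
        return self.trace


def processor_request(sim):
    # Hypothetical behaviour: the store replies three time units later.
    sim.schedule(3, "MEMORY", memory_reply)
    return "REQUEST_SENT"


def memory_reply(sim):
    return "DATA_RETURNED"


def demo():
    sim = Simulator()
    sim.schedule(0, "PROCESSOR", processor_request)
    return sim.run()
```

Calling `demo()` yields one trace record per state change, each stamped with the simulation time at which it occurred. HASE itself achieves the equivalent with threads and a C++ run-time system, which this sketch does not attempt to reproduce.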
Modelling Constraints

In any simulation modelling system there is a trade-off between accuracy and performance. HASE was designed primarily as a high-level visualisation tool for computer architecture students and therefore simulates systems at register/word level rather than bit level. This inevitably imposes some limitations on the way models can be constructed, e.g. registers are modelled using typed variables and stores are modelled as arrays of typed variables. This means, for example, that fixed-point (integer) variables, floating-point (real) variables and instructions cannot be held in shared arrays. Furthermore, most early computers used what now seem like rather idiosyncratic number formats, so achieving a high degree of verisimilitude in the simulation of floating-point operations would add a significant level of complication. In the MU5 and CDC 6600 models, this problem is avoided by simply representing all numbers as integers. In the Atlas model, advantage has been taken of the way the paging mechanism works to separate, unrealistically of course, fixed and floating-point numbers into separate pages; floating-point arithmetic is implemented using the floating-point operations provided by the underlying hardware of the computer on which the simulation is running, accessed via standard C++ operations. There are also constraints on nomenclature. The elements of a C++ enum, to which the HASE ENUM construct is mapped, can only contain alphanumeric characters and the first character must always be a letter. This impacts on the way the function field of an instruction can be formulated. In the MU5 model, for example, the function ‘NB =’ is represented as ‘NBld’. Choosing ways to represent the instruction formats, both within the simulation code and on-screen, was among the most significant design decisions in the creation of these models.
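The naming constraint can be made concrete with a short sketch. It is in Python and applies the rule exactly as stated above (alphanumeric characters only, first character a letter). The helper names and the lookup table are hypothetical; only the pairing of ‘NB =’ with ‘NBld’ comes from the MU5 model as described.

```python
import re

# Rule as stated in the text: alphanumeric only, first character a letter.
_VALID_NAME = re.compile(r"[A-Za-z][A-Za-z0-9]*\Z")


def is_valid_enum_name(name):
    """Return True if `name` satisfies the stated naming rule."""
    return _VALID_NAME.match(name) is not None


# Hypothetical table: only the 'NB =' entry is taken from the article.
MNEMONIC_TO_ENUM = {"NB =": "NBld"}


def enum_name_for(mnemonic):
    """Look up the model-side name for a mnemonic and check it is legal."""
    name = MNEMONIC_TO_ENUM[mnemonic]
    if not is_valid_enum_name(name):
        raise ValueError(f"{name!r} cannot be used as an enum element")
    return name
```

The original mnemonic fails the check because of its space and equals sign, whereas the substitute passes, which is why a translation table of some kind is needed between what the programmer of the real machine wrote and what the model can store.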
Atlas

The Ferranti Atlas computer resulted from the fourth major computer design and implementation project carried out at the University of Manchester. The first Atlas was inaugurated at the University in December 1962. Designed by Professor Tom Kilburn and a joint University/Ferranti team, it incorporated a number of novel features, the most influential of which was Virtual Memory. IEEE Milestone Plaques recognising this achievement and the “Manchester University ‘Baby’ Computer and its Derivatives” were presented to the University on 21st June 2022.

Atlas had a 48-bit instruction word made up of a 10-bit function code, two 7-bit index addresses (Ba and Bm) and a 24-bit store address (Figure 2). Functions that operated on the Accumulator (A-codes) were thus one-address with double B-modification. B-register 0 always returned a value of 0, thus allowing for singly modified or unmodified accesses. The most significant bit of the address field distinguished between user addresses and system addresses, while the three least significant bits were used to address one of eight 6-bit characters within a store word.

The B-codes were executed by the B-Arithmetic (add/subtract and logical) Unit and operated on one of the 128 24-bit B-registers specified by the Ba field in the instruction, Bm being used to specify a single modifier. The B-store was made up of 120 words of core store together with eight special purpose flip-flop registers. These included the floating-point accumulator exponent, for example, and the three control registers (program counters) used for user program, extracode and interrupt control. Including these three control registers as B-registers avoided the need for separate control transfer (branch) functions. Extracodes were a set of functions which gave access to some 250 built-in subroutines held in the read-only Fixed Store.
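The instruction layout described above can be sketched as a pair of pack/unpack routines. This is an illustration in Python rather than code from the HASE model; it assumes the fields sit in the word in the order listed (function code in the most significant bits, store address in the least significant), and all names are hypothetical.

```python
from typing import NamedTuple


class AtlasInstruction(NamedTuple):
    function: int  # 10-bit function code
    ba: int        # 7-bit index address Ba
    bm: int        # 7-bit index address Bm
    address: int   # 24-bit store address


def encode(function, ba, bm, address):
    """Pack the four fields into one 48-bit word (field order is an assumption)."""
    if not (0 <= function < 1 << 10 and 0 <= ba < 1 << 7
            and 0 <= bm < 1 << 7 and 0 <= address < 1 << 24):
        raise ValueError("field out of range")
    return (function << 38) | (ba << 31) | (bm << 24) | address


def decode(word):
    """Split a 48-bit word back into its four fields."""
    if not 0 <= word < 1 << 48:
        raise ValueError("not a 48-bit word")
    return AtlasInstruction(
        function=(word >> 38) & 0x3FF,
        ba=(word >> 31) & 0x7F,
        bm=(word >> 24) & 0x7F,
        address=word & 0xFFFFFF,
    )


def split_address(address):
    """Return (top bit, word address, character number) of a 24-bit address.

    The top bit separates user from system addresses; the three least
    significant bits select one of eight characters within the word.
    """
    top_bit = (address >> 23) & 1
    word_address = (address >> 3) & 0xFFFFF  # the 20 bits in between
    character = address & 0b111
    return top_bit, word_address, character
```

The 20 bits left between the top bit and the character bits are enough to address 1M words.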

User addresses in Atlas referred to a 1M word virtual address space, and were translated into real addresses through a set of page registers. The real store consisted of a total of 112K words of core and drum storage, combined together through the paging mechanism so as to appear to the user as a one-level store. System addresses referred directly to the Fixed Store, the Subsidiary Store (used as working space by the extracodes and operating system), or the V-store. The latter contained the various registers needed to control the tape decks, input/output devices, paging mechanism, etc., thus avoiding the need for special functions for this purpose. A screen image of the HASE Atlas model is shown in Figure 1. Because integers, reals and instructions cannot be mixed together in a single array, the model takes advantage of the Atlas paging system by modelling the Drum Store as a set of pages and the Core Store as a set of blocks, each made up of 512 words, as in Atlas, but with different blocks/pages containing different types of element. This is unrealistic, of course, but was felt to be an acceptable compromise given the intended use of the model. At the start of a simulation the program and its data are contained in the Drum Store while the Core Store is empty (i.e. contains zeroes). The program code is in page 0 of the Drum, fixed-point integers are in page 2 and floating-point reals in page 3. In the Core Store, Block 0 is modelled as an instruction array, Block 1 as an integer array and Block 2 as a floating-point array. These arrays are themselves loaded from files such as CORE STORE.block0.mem and DRUM STORE.page0.mem when the model is loaded into HASE. The transfers from the Drum Store to the Core Store take place instantaneously “behind the scenes”, rather than being simulated as word-by-word transfers through the Distributer, since this would be extremely tedious to watch in the playback. 
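The idea of 512-word blocks that each hold only one type of element can be sketched as follows. This is a Python illustration, not the model's C++ code: the class `TypedBlock`, the function `split_word_address` and the particular page-to-block pairing shown are assumptions made for the example.

```python
PAGE_SIZE = 512  # words per page/block, as in Atlas


def split_word_address(word_address):
    """Return (page number, line within page) for a word address."""
    if word_address < 0:
        raise ValueError("word address must be non-negative")
    return divmod(word_address, PAGE_SIZE)


class TypedBlock:
    """A 512-word block whose elements must all share one Python type."""

    def __init__(self, element_type):
        self.element_type = element_type
        # An empty store contains zeroes of the block's type.
        self.words = [element_type()] * PAGE_SIZE

    def load_page(self, page):
        """Copy a whole page in one step, rejecting elements of the wrong type."""
        if len(page) != PAGE_SIZE:
            raise ValueError("a page must contain exactly 512 words")
        for value in page:
            if type(value) is not self.element_type:
                raise TypeError("element type does not match this block")
        self.words = list(page)


# Hypothetical contents: drum page 2 holds integers, page 3 holds reals.
drum = {2: [7] * PAGE_SIZE, 3: [1.5] * PAGE_SIZE}
# Core block 1 is an integer array and block 2 a floating-point array.
core = {1: TypedBlock(int), 2: TypedBlock(float)}
core[1].load_page(drum[2])  # whole-page transfer, not word by word
core[2].load_page(drum[3])
```

With 512-word pages, a 1M word address space divides into 2,048 pages.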
The Fixed Store and Subsidiary Store are also included as entities in the model, although the Fixed Store is not actually used.

The Atlas instruction set is modelled using the HASE INSTR construct. INSTR allows functions to be grouped together both for decoding purposes and to allow each group to be associated with an appropriate addressing STRUCT. In Atlas, the addressing STRUCT contains three integer values, Ba, Bm and address, and is common to all instructions. HASE maps the functions within a function group to a C++ enum, so all functions must start with a letter. The INSTR construct was created during the development of a HASE DLX model and because the DLX instruction set is represented using an assembler code nomenclature, this constraint was not an issue. Functions in the Atlas instruction set are represented numerically, however, so three possible solutions were considered to the quandary that this presented: (a) create an assembler code style representation of each function; (b) simply represent instructions using a STRUCT containing four integers (thus foregoing the built-in decoding facility); (c) prefix each function number by ‘A’ for Accumulator functions, ‘B’ for B register functions and ‘E’ for Extracodes. Option (c) was chosen, so the Accumulator group of functions, for example, is represented within the INSTR definition as (ACC(A314,A315,A320,A321,A322,A346,A356,A362,A363,A374)

This offers some limited insight into the purpose of each instruction but it was felt that an on-screen method of conveying the full meaning of each instruction to the user would be valuable. This was achieved by coding, in the global_fns file, a get_text function that returns a text string for each function, e.g. ‘b=b+s’ for function B104. This is then displayed on the Control icon (see Figure 1).

Three versions of the model are available from the website, each containing a different machine code program.
The first is designed to demonstrate the operation of each of the instructions implemented in the model, while the second demonstrates the operation of matrix multiplication, one of the most frequently used algorithms in supercomputers. The third is an adaptation of a program that finds values for the lengths of the sides of Pythagorean right triangles. This program uses three Extracodes, B multiply and two print instructions. In the model, the B multiply instruction is executed directly in the B-Arithmetic Unit while the print instructions are implemented by the Control Unit and write values into the Subsidiary Store. These values are printed at the end of the simulation run in the output pane of the HASE GUI. An extension of the model could be to implement at least some of the Extracodes using code held in the Fixed Store, as in the real Atlas.

MU5

MU5 was the fifth computer system to be designed and built at the University of Manchester, in succession to Atlas. MU5 introduced a number of new ideas, particularly in terms of its instruction set, which was designed with high-level language compilation in mind, and novel uses for associative stores. The technical aspects of the project have been documented in numerous publications. MU5 became operational in the mid-1970s and ran as a service machine in the Department of Computer Science until 1982. Many of the design ideas developed in MU5 were used in the ICL 2900 series.

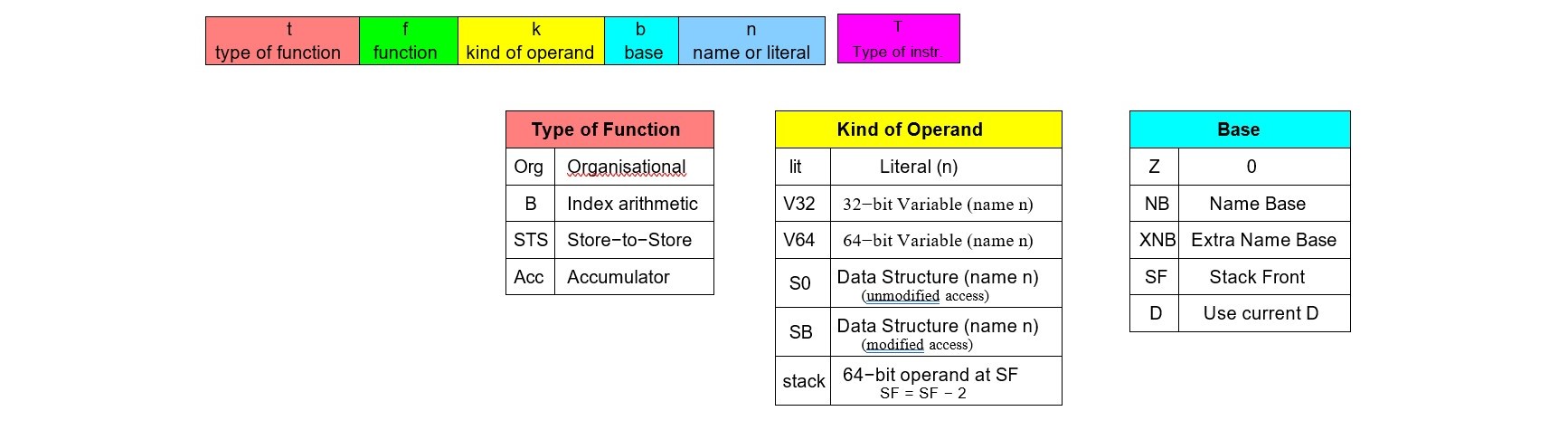

It was clear at the outset of creating the MU5 model that it would not be possible to use the HASE INSTR construct to accurately represent the MU5 instruction set. The instruction set used in the model (Figure 3) therefore follows the spirit of the original, rather than the detail, in particular because the instruction format and length have to be fixed in HASE, rather than both being variable as they were in MU5. So instructions in the model are represented by a STRUCT containing 4 enumerated types, one each for the type of function, the function itself, the kind of operand and the base register, together with an integer used as a name or literal. Using a STRUCT of this complexity had not previously been attempted in HASE and modifications to the EDL parser were needed in order to process it.

Figure 4 shows the HASE MU5 model, the design of which closely follows that of MU5 itself. At the start of a simulation, the address contained in the Control Register (program counter), CO, in the Execute stage of the Primary Operand Unit pipeline (PROP), is sent to the Store Request System within the Instruction Buffer Unit (IBU). The IBU sends this address to the Store Access Control Unit (SAC), which contains a set of Current Page Registers (CPRs). MU5 itself had 32 CPRs, but the HASE model has just four, preloaded such that the first page of Segment 0 (the Name Segment) is mapped to Block 0 of the Local Store, Segment 1 is used for instructions and is mapped to Block 1, while Segment 2 is used for array elements and is mapped to Block 2. Segment 3, mapped to Block 3, is used to contain strings of bytes. Implementing software to manipulate the CPRs is beyond the scope of this model. SAC sends the translated address to the Local Store, which returns the two instructions contained in the addressed memory word back to SAC, which itself sends these instructions to the IBU. The IBU enters the instructions into its buffer registers (organised as a drop-down stack) and sends each instruction in turn to PROP. PROP processes instructions in a six-stage pipeline, at the end of which it has accessed and prepared the appropriate primary operand associated with each instruction and then, depending on the instruction type, either forwards the instruction to the B Arithmetic Unit or the Secondary Operand Unit, or, in the case of an organisational function, executes the instruction itself. Instructions sent to the Secondary Operand Unit are those destined for the Accumulator Unit or others that require a secondary operand accessed via the descriptor mechanism. In the hardware of MU5, various extra function bits were added to instruction registers in the pipeline in order to control the different activities that could occur at each stage. 
In order to replicate this mechanism in the model and to represent the bits in a visually meaningful way, each instruction register includes an additional character, the T field shown in Figure 3.

Three versions of the model are available from the website, each containing a different program. Version 1 contains a program that demonstrates the operation of the MU5 Name Store, Version 2 contains a program that executes a scalar product while Version 3 contains a program that demonstrates the use of the string processing instructions. This program performs three tasks. The first creates the composite string “Hello world” from individual strings containing its component parts. The second finds the first non-zero digit in the digit string 00083576 and places the ASCII representation of its value in the B register. The third searches for the word ‘light’ in the sentence ‘Let there be light’; the B register then indicates its position within the sentence.

For visualisation purposes, the strings are held in character format in their own block of the Local Store, with each word containing eight characters. These characters are processed using the same simulated hardware as that used for integer values, however, so to get round type checking constraints, the characters are converted to their ASCII integer values and packed into a pair of 32-bit integer words whenever they are read out of memory and, correspondingly, returning integer words are unpacked during a write-to-store operation. Similarly, in the Descriptor Operand Processing Unit, each character is manipulated as an integer value so an extra register is used to display the actual characters.

Part 2 of this article will appear in Resurrection 101. |

Data Processing in the 1960s: The Commercial Union’s Exeter Computer Centre

Simon Lavington

|

| Computer | First UK delivery | Average price | Some example deliveries to insurance companies |

| AEI 1010 | 1960 | £170K | None known |

| Elliott/NCR 4100 | 1965 | £60K | None known |

| EMIDEC 1100 | 1961 | £190K | None known |

| EMIDEC 2400 | 1961 | £600K | None known |

| English Electric KDP10 | 1962 | £300K | Commercial Union, 1962 |

| English Electric KDF9 | 1964 | £350K | Sun Life Assurance, 1965 |

| English Electric KDF6 | 1964 | £60K | Equitable Life Assurance, approx. 1967 |

| Ferranti Orion 1 | 1963 | £800K | Norwich Union, 1964 |

| Ferranti Orion 2 | 1964 | £800K | Prudential, 1964 |

| ICT 1301 | 1961 | £120K | United Friendly Insurance, ? |

| ICT 1900 series | 1965 | various | Reliance Mutual, 1966 |

| Leo LEO III | 1962 | £250K | Phoenix Insurance Co, 1970 |

| Table 1. British computers with transistor/ferrite core technologies available in the early 1960s and considered suitable for business data processing applications. (For the interpretation of columns three and four, refer to notes in the text). |

In April 1960 the Commercial Union finally chose an English Electric KDP10 computer system, at a cost of £297,000 (or £87,000 per annum if rented). It was “the cheapest of the systems considered”. This was in fact the very first installation of a KDP10; it was planned to come into operation in 1962 in a specially built Computer Centre in Exeter.

4.2. The English Electric KDP10.

The KDP10 computer was based on the American RCA501 machine, which had first been announced in 1958 and which, by 1960, had been purchased by two American insurance companies amongst several other organisations. The KDP10 used printed circuits, transistors and a ferrite core store. The core store was available in increments of 16K character locations, up to a maximum of 256K characters. Various types of peripheral equipment were available. 7-track paper tape was standard, the paper tape reader operating at 1,000 characters/sec. The paper tape punch operated at either 100 or 300 characters/sec. The Card Transcriber read in 80-column cards at up to 400 per minute and wrote the information to magnetic tape. There was a 600 lines/minute Lineprinter. Faster off-line Xeronic printers were available – see below.

What other characteristics might have made the KDP10 stand out amongst the six prospective candidate computers assessed by the CU?

Firstly, the whole KDP10 system design emphasized magnetic tape as the main (long-term) operational medium. Up to 62 tape decks could be directly addressed. Magnetic tape transfer rates were: 16K or 33K characters/sec. when writing; 33K characters/sec. when reading. (By 1964, English Electric had increased the transfer rate to 66K characters/sec.) There were 16 channels across the tape, which was ¾ inch wide. Duplicate (i.e. dual) recording was used, meaning that a single lost bit could be tolerated.

The second attractive characteristic of the KDP10 for business applications was that it was a true variable item-length system. Use of control symbols and the ability to address each character location individually permitted the length of any item in any message to be in strict accordance with that item’s actual character count. This allowed for total variability of item and message length. Decimal arithmetic as well as binary arithmetic was provided in hardware.

The third attractive characteristic of the KDP10 was its error-detection facilities. Arithmetical operations were checked by repeat operations using the complements of the operands. Information-transfers were checked by parity. Breakpoints were available to aid program debugging. Rollback was provided upon error-detection.

More technical details of the English Electric KDP10 computer, and a fuller version of this article, will be found on the OCH website.

There remain two additional areas of special KDP10 equipment relevant to the Commercial Union’s installation: (a) communications equipment connecting remote branches to Exeter; (b) the high-speed printing of results. Details of these are now given.

5. Special equipment for the CU’s Exeter installation.

5.1. Data transmission: the Swift system.

In Britain in 1959, telex was the only practical data-transmission facility available nationally. Accordingly, the Commercial Union performed experiments in which 5-track paper tape was produced remotely, fed into a CU branch’s telex machine and the data sent by GPO line to a central telex receiving unit at Exeter. Here, 5-track tape was produced and fed to an ICT 1036 tape-to-card converter for test purposes. The 1959 experiments revealed two disadvantages of telex transmission: (i) the data transmission-rate, 50 baud, was slow; (ii) the telex system had no parity bit or other method for error-detection.

By the late 1950s 7-track paper tape systems were coming into use. These clearly allowed a richer character set (64 instead of 32 possibilities) but, importantly, they included a parity bit for error-detection. Accordingly in 1961 experiments were conducted between the Commercial Union’s Brighton branch office and the Exeter Mechanisation Centre, using prototype PT750 transmission equipment designed by Automatic Telephone and Electric Co. A typist in Brighton prepared 7-track paper tape on a Friden Flexowriter. This tape was fed via a Ferranti TR5 paper tape reader into the AT&E equipment and transmitted using phase modulation at 437 baud (or about 62 characters/second) to Exeter. These were the days before Subscriber Trunk Dialling so transmission over the public telephone network was arranged to be in prolonged uninterrupted (PUT) mode. Transmission over the public network did have some oddities. The Post Office ‘pips’ could under certain circumstances be interpreted as meaningful data so the Post Office was persuaded to drop the use of pips for CU transmissions. Experiments during 1961 indicated that the overall expected transmission error rate was under 1.5 errors in a million transmitted characters. Accuracies were acceptable enough for the CU to use the equipment in October 1962 for demonstration transmissions between CU offices in London and New York using the Telstar satellite. Successful live query-answering transmissions were also used between Paris and Exeter in the same month, at an international conference.

As a result of these and the Brighton/Exeter experiments, it was decided to equip all of the Commercial Union’s approximately 40 main branch offices with a data-communication set-up consisting of three sub-units: (i) a Friden Flexowriter, (ii) a Ferranti TR5 paper tape reader and (iii) an AT&E PT750 transmitter for sending data over the telephone network to the CU’s new Computer Centre at Exeter. Connection between Exeter and all of the CU’s 40 main branches was done office-by-office, taking many months following the KDP10 computer’s installation. The first branch connection started with Dundee in 1964. Training branch staff and equipping branches was achieved “at the rate of one or two per month”.

Eventually this interconnection network, which became known as the Swift system, created a total traffic into Exeter of about 1.5 million characters per day over a period of two hours using four receivers.
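The quoted figures can be cross-checked with some simple arithmetic. The sketch below, in Python, assumes that each 7-track character occupies seven transmitted bits with no extra framing, and that the traffic was shared evenly between the four receivers; neither assumption is stated in the sources, and all names are illustrative.

```python
BAUD = 437               # line speed quoted for the PT750 equipment
BITS_PER_CHARACTER = 7   # assumption: one bit per tape track, no framing bits

DAILY_CHARACTERS = 1_500_000
WINDOW_SECONDS = 2 * 60 * 60   # traffic arrived over a period of two hours
RECEIVERS = 4

ERRORS_PER_MILLION = 1.5       # upper bound from the 1961 experiments


def line_rate():
    """Characters per second that one line could carry."""
    return BAUD / BITS_PER_CHARACTER


def rate_per_receiver():
    """Average characters per second each receiver had to accept."""
    return DAILY_CHARACTERS / (WINDOW_SECONDS * RECEIVERS)


def expected_daily_errors():
    """Upper bound on character errors per day at the measured error rate."""
    return DAILY_CHARACTERS * ERRORS_PER_MILLION / 1_000_000


print(round(line_rate(), 1))          # about 62 characters/second
print(round(rate_per_receiver(), 1))  # about 52 characters/second
print(expected_daily_errors())        # 2.25
```

On these assumptions each receiver would have been busy for a little over 80% of the two-hour window, and the whole day's traffic would be expected to contain no more than two or three character errors.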

At Exeter, data received from branches was first automatically punched onto 7-track paper tape. The reels of tape were then fed into the KDP10 computer via three fast (1,000 characters/second) paper tape readers at a pre-arranged time in the central computer’s daily operating schedule. This ensured that the KDP10 was not kept waiting for late-arriving data from a particular CU branch office. Of course, this was before the days when on-line communications equipment became the norm. However, as was observed in a contemporary comment on the Commercial Union’s experiments, IBM in 1962 was “offering real-time channels which incorporated buffers for assembling messages at line speeds and transferring these into the main store, after checking, at memory speeds”. The high cost of using such equipment to service all 40 of the Commercial Union’s branches at speed is not mentioned.

5.2. Xeronic high-speed off-line printing.

|

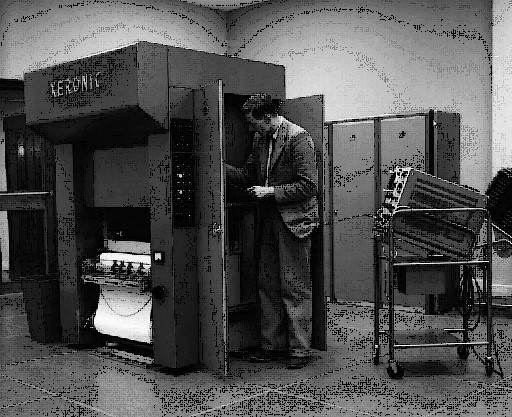

| Figure 1: One of the two Xeronic high-speed printers installed in Concorde House, Exeter. |

The Commercial Union issued several types of insurance documents – policies, claims forms, etc. – each with a particular layout. Furthermore, the required volume of document production was huge. For example, renewal documents for some 30,000 policies were required to be printed each day. Such volumes were well beyond the capacity of conventional computer equipment of the 1950s.

Accordingly, the CU spent a year in discussions with Rank Xerox Ltd. on the provision of novel printing facilities. The result was the Xeronic high-speed printer, whose first public demonstration in 1960 used CU documents as the examples.

A Xeronic printer was rated at a speed of 3,000 lines/minute. Two images were simultaneously projected onto the paper: (i) a standard form layout; (ii) the particular characters to be inserted on this form. Since the printer could switch rapidly from one form-image to another, documents could be printed in the order in which they were to be despatched, ready and addressed for use with window envelopes. Each Xeronic printer used a 26” wide roll of paper and could print documents at the rate of 40 ft of paper a minute. Each roll of paper ran for about 50 minutes before a re-load was needed. Between 80 and 90 renewal forms could be printed per minute, equivalent to about 4,500 per hour. A Xeronic printer is shown in Figure 1.

At Exeter, the CU installed two Xeronic printers, working off-line from data provided on magnetic tape by the KDP10 processor. One magnetic tape deck could feed both printers. Each printer had to be stopped for about five minutes every hour for off-loading the printed output and inserting a new roll of blank paper.
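The printer figures quoted above are mutually consistent, as a short calculation shows. The Python sketch below assumes that a printer was productive for 55 minutes in each hour, i.e. that the five-minute stop is the only lost time; the names are illustrative only.

```python
FEET_PER_MINUTE = 40        # paper speed
ROLL_RUN_MINUTES = 50       # running time of one roll
STOP_MINUTES_PER_HOUR = 5   # unloading output and fitting a new roll


def roll_length_feet():
    """Length of paper consumed by one roll at the rated speed."""
    return FEET_PER_MINUTE * ROLL_RUN_MINUTES


def forms_per_hour(forms_per_minute):
    """Renewal forms printed in an hour, allowing for the hourly stop."""
    return forms_per_minute * (60 - STOP_MINUTES_PER_HOUR)


print(roll_length_feet())   # 2000 feet of paper per roll
print(forms_per_hour(80))   # 4400
print(forms_per_hour(90))   # 4950
```

The range of 4,400 to 4,950 forms per hour brackets the quoted figure of about 4,500 per hour.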

6. The Exeter Computer Centre.

|

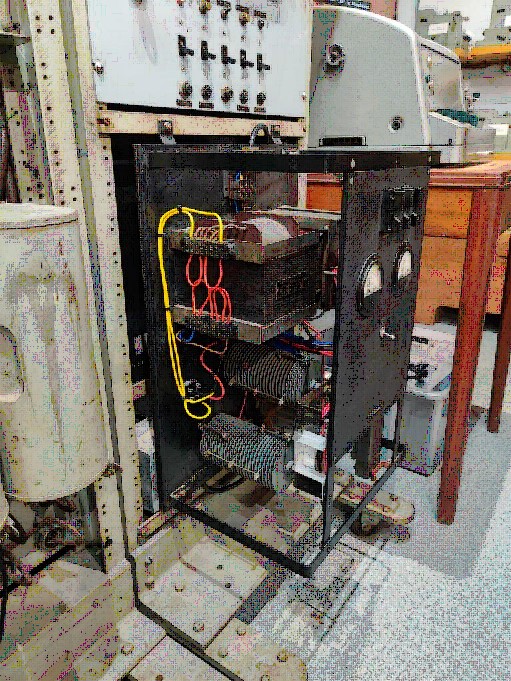

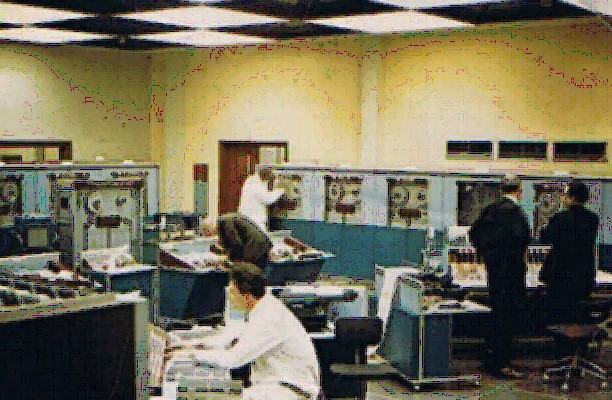

| Figure 2: The twin-processor KDF8 installation in Concorde House, during the daily maintenance period. The three men on the left are English Electric engineers. The two people standing at the console are CU staff. On the left is Dave Barwell; on the right is Alan Mazonovich. |

A new four-storey, 36,000 sq. ft floor area, custom-designed building called Concorde House was opened at the end of 1961. The English Electric KDP10 computer was delivered in January 1962. The machine was nicknamed CUTIE, standing for Commercial Union Totally Integrated Electronics, with the implication that henceforth most of the company’s business would be ‘integrated’ within a single system. Amongst the special facilities installed at Concorde House were the Swift data-transmission equipment and the Xeronic high-speed bulk printers.

At first, CU ‘programmers’ were all male. They produced flowcharts on large sheets, on which each box was a (group of) pseudo-instructions. Then women ‘coders’ produced the actual KDP10 coding sheets based on the flowcharts. David Olphin believes that “men were felt to be creative and women accurate!”.

The overall vision was that the new Exeter Computer Centre would absorb from Branch Offices the issuing of policies and endorsements, the maintenance of records, the renewal processes and all work on accounts and statistics. It would eventually also provide for internal Commercial Union administrative activity such as payroll, budget forecasting, name and address indexes, etc.

To quote Frank Knight, “The effect of using a computer in the manner described is to limit the work of the clerical staff of the company to what is of first importance: public relations service. The branch staffs are able to concentrate on interviews and correspondence concerned with arrangement of policies, settlement of claims, disposal of queries, and provision of decisions and advice. When the results of this work are sent as data to the Computer Centre, the latter becomes fully responsible for checking, issue of documents, all functional running – the whole being completely self-checking and guaranteed to a high degree of accuracy”.

However, the actual coverage provided by Exeter was more modest and evolved more slowly than had been hoped. Peter Monk remembers that initially the Exeter system “covered the policy records, financial records etc for Personal and Domestic General Insurance. This included matters such as house content, house property, private and commercial vehicle insurance. It famously produced statistics, the life blood of the underwriting world that had been weak previously. It didn’t incorporate claims, and it didn’t extend to other classes of insurance such as the CU’s Life business”. The Life side was not fully computerised until much later.

The target in 1962 was to process five million policies per week. Each policy record averaged 350 characters. The eventual daily computing load was to handle 1,000 new policies, 5,000 policy alterations and 28,000 policies affected by cash payments. Data would be arriving daily from several tens of branch offices or departments.

In 1962 the plan called for two main computer runs per day. In brief:

| Run 1 | Input data from branches via the Swift system, check it and sort it. Print all new policies and memoranda ready for despatch. |

| Run 2 | Update main file (held on magnetic tape), using the data from Run 1. Update all statistics. Print required endorsements, renewal documents, accounts for agents, etc. |

By late 1965 the above scheme was extended by the introduction of two-shift working. During 1965, twin KDF8 processors replaced the KDP10 in Concorde House. Figure 2 shows the Commercial Union’s upgraded computer room. Each KDF8 had 131K characters of core store and 13 tape decks.

7. What came next?

Work at Exeter continued apace until March 1968, when the KDF8s and the Commercial Union’s entire computing equipment were moved from Exeter to Whyteleafe in Surrey, to spacious converted factory premises ten miles south of central London. The CU sold Concorde House in Exeter, the building eventually being converted to house 28 one and two-bedroom apartments.

Why did the CU’s Computer Centre move? Surrey was clearly closer to the company’s London headquarters but wages in Surrey were higher than those in Devon. Space was one reason for the move. At Whyteleafe there was plenty of room for expansion. Indeed, at Whyteleafe a new computer system was installed alongside the KDF8s (see below), with a period of co-existence before the new system took over.

By the end of 1968 all the CU’s core business had been transferred to the two KDF8s. To supplement the two KDF8s, an ICL 4/40 was rented. All this equipment was installed at Whyteleafe. During 1969 a number of Head Office jobs (e.g. investments, share register) were converted to another ICL 4/40, installed in Head Office in London.

In due course the CU switched from the KDF8s to English Electric Leo Marconi System 4 computers, which were based on the American RCA Spectra 70 range and were IBM 360 compatible. Programming for the KDF8 had been mainly in KDF8 Octal machine code until the System 4s arrived and Usercode (IBM Assembler) was adopted. Eventually the CU moved to COBOL and the IBM 360/370 range of mainframe computers.

In 1970 it was decided to hire two ICL System 4/70 configurations. These would provide capacity to undertake all CU core business and all Head Office jobs and provide facilities for the later use of remote data terminals. The two KDF8s and the two Xeronic printers, by now showing their age, were offered free of charge to educational institutions and the 4/40 at Whyteleafe was returned to ICL.

The Commercial Union itself continued to flourish. Its Whyteleafe Computer Centre was eventually closed down at the end of 2001, following the CU’s mergers with the General Accident Company in 1998 and then with the Norwich Union – the resulting entity changing its name to Aviva in 2002. All computing activity was subsequently centralised in Norwich.

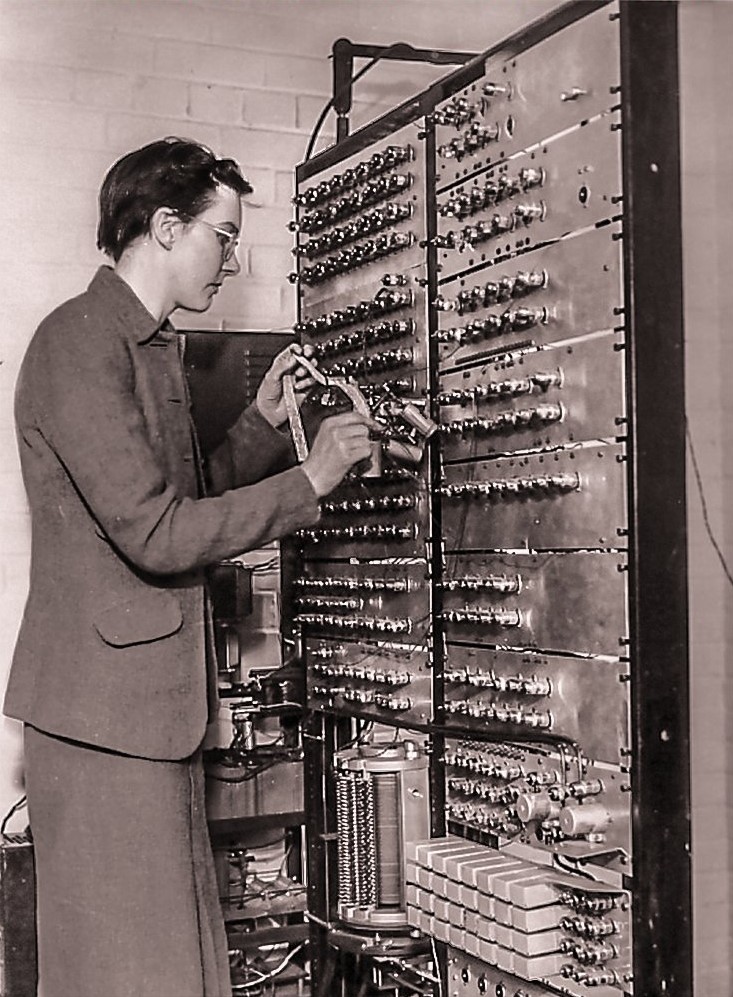

Obituary – Kathleen Booth

Roger Johnson

Kathleen Hylda Valerie Booth (née Britten), who died on September 29th 2022 at the age of 100 years, was one of the last of the very first generation of UK computer pioneers. At the end of the war she joined Birkbeck College, London as a research assistant in a small group led by Andrew Donald Booth automating crystallographers’ calculations. Starting in late 1945, the Booths began building their Automatic Relay Calculator (ARC). In 1946 Andrew Booth spent six weeks with John von Neumann at Princeton and in 1947 he returned with Kathleen Britten for a six-month visit again based at Princeton. This led to the redesigning of ARC1 with a “von Neumann” architecture (ARC2). In 1949 work began on the Simple Electronic Computer which led to the best-known All-Purpose Electronic Computer (APEC). The construction of these pioneering machines led to a number of fundamental contributions to computing technology. Firstly, ARC2 needed a memory which was provided by a drum, the world’s first rotating computer storage device. Secondly, the computers needed a fast multiplier in the form of the Booth multiplier which appears today on billions of chips every year. In 1950 the circuits from APEC were traded with BTM who used them in the ICT 1200, the UK’s first mass-selling computer with over 100 sold across the world. In 1962, following a disagreement at Birkbeck, they emigrated to Canada. They remained active in both academic and practical computing. Andrew died in 2009. They are survived by their daughter and son, Amanda and Ian, to whom we send our condolences. |

Review – The History of Computing: A Very Short Introduction

Simon Lavington

This book is a very welcome addition to Oxford University Press’s celebrated Very Short Introduction series, which now has some 500 titles. As one would expect from Doron Swade, this book is not a traditional abacus-to-smartphone history. This book is also a thoughtful discussion of what properly constitutes the history of computing as a field of study. When did it begin and who writes it? What are the boundaries? Swade locates the beginning of the study of the history of computing in the 1970s with Brian Randell’s Origins of Digital Computers (1973) and the 1979 launch of Annals of the History of Computing. In the early years the history of computing tended to be written by practitioners (such as Randell) and later by historians of science, technology and business. More recently social historians have taken up the subject.

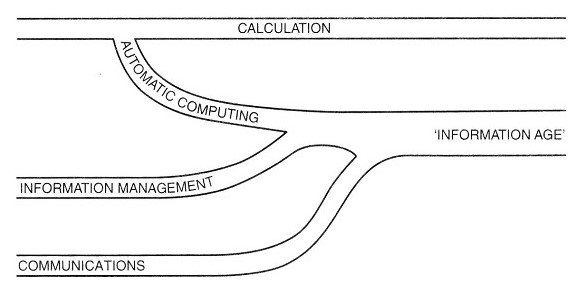

Doron answers the question “what should be studied?” with his well-known “river diagram”. In this view the founding tributary of today’s “information age” is calculation – from finger-counting to the electronic pocket calculator. Next, automatic computing started with Babbage in the 1820s but did not fully blossom until the electromechanical machines of the 1930s and 1940s. Information management began with record-keeping in the prehistoric era but flowered with office machinery, especially punched-card machinery in the 1890s. The newest tributary is communication – from fire-beacons to the internet. The book follows this discussion with chapters on calculation, automatic computation, and electronic computing. All of these have a commendably internationalist perspective. A chapter on the computer boom takes us through the familiar territory of the rise and rise of IBM, real-time computing applications, and the ascent of System/360. Next comes the revolutionary era of semiconductors, Moore’s law, mini- and micro-computers, and the rise of Microsoft, Apple, and the personal computer. In the closing chapter Swade reflects on the question: What should be the new master narrative of computing? We have long since moved on from portraying the computer as a “scientific tool”, the view that dominated the early studies. The computer as an “information machine” served well for practically a generation, but now fails to capture much of the 21st century computer landscape in the era of the social internet and regulatory conundrums over issues such as fake news. This is the challenge for the coming generation of historians. |