
Computer

RESURRECTION

The Journal of the Computer Conservation Society

ISSN 0958-7403

Number 93

Spring 2021

Contents

| Society Activity | |

| News Round-up | |

| The Cray Y-MP EL : An Overview | Clive England |

| Bryant disc file at BMC Service, Cowley, Oxford | John Harper |

| CCS at the Movies – December 2020 | Dan Hayton |

| Book Review : The IT Girl: Ann Moffat | Frank Land |

| Obituary: George Davis | Roger Johnson |

| Obituary: Arthur Rowles | Doron Swade |

| 50 Years Ago | Dik Leatherdale |

| Forthcoming Events | |

| Committee of the Society | |

| Aims and Objectives |

Society Activity


Analytical Engine — Doron Swade

With the first-pass inspection of the manuscript archive complete, attention has turned to analysis and interpretation, and to organising the findings to aid navigation. Babbage shed versions of the design as it developed in the form of ‘Plans’ – large ‘systems drawings’ which serve as developmental staging posts – the main ones of which are numbered Plan 1 through to Plan 28. The overall approach to analysing the accumulated data is a timeline that assigns all material, from wherever in the archive (drawings, Notebooks, Notations), to the relevant landmark Plan. As each Plan is reviewed and evaluated, the significance of its design advances is identified: whether, for example, it involves a major design reset or only incremental change. The first fruits of this extensive study of the sources are now emerging, and the overall developmental arc is easier to identify. A major initial finding is that the designs are less disjointed than thought: there is more continuity in the inventive trajectory than we feared, or than scholarship to date had indicated. As an example of a more specific finding, it is not until Plan 27 that there is the first evidence of user-level conditional operation. While the Analytical Engine is routinely portrayed as incorporating, from the start, the defining features of a modern computer, conditional operation included, this feature appears fairly late in the day and is barely mentioned again. How significant conditional operation was to Babbage as a defining feature is now an enticing open question. Tim Robinson has started writing up findings following the review of the complete Babbage manuscript archive. The initial work takes the form of an overview centred on each of the Plans, i.e. the large systems drawings that Babbage shed during the evolution of the designs.
The intention was to put further detailed work to one side, for the moment at least, to take stock and to document broad-stroke findings and new insights. However, excavating the hardly-known Plan 30 further (there is a Plan 28a but seemingly no Plan 29) proved irresistible, both for its inherent interest and for completeness. Babbage restarted work on the AE designs in June 1857 after a break of almost a decade and referred to the machine as ‘Analytical Engine 30’. Tim reports that the hardware changes introduced for Plan 30 are dramatic. One remarkable feature is the extension of the store to 1000 registers and, most intriguingly, various methods of mechanically addressing the store contents. The broad-stroke writing has been paused temporarily while this rich seam is explored. This is not expected to take long, and we look forward to the resumption of the interpretative account.


Elliott 803, 903 & 920M — Terry Froggatt

Unsurprisingly, I have nothing to report right now about the 803 and 903 at TNMoC. Due to the Covid restrictions, we’ve not yet even managed to reconnect the 903 engineering display panel. Andrew Herbert is still working at home on the development of a Raspberry Pi based paper tape system interface for the RAA/TNMoC 920M (when not interrupted by his other TNMoC duties). He has recently changed from TXS0108E bidirectional level converters to SN74LVC245A transceivers: the Raspberry Pi could outrun the former, but with the transceivers all is fine. Meanwhile, I have started to understand some of the wiring of the eBay 920M at home. For example, by running a simple counting program, I now know which pins on the engineering display connector and the extra store connector carry the 18 accumulator bits. Ideally, I’d like to understand the control panel connector pins, so that we can connect a panel, but these pins are mainly inputs to the 920M, so they are likely to be more difficult to trace. Both 920Ms are having problems with their “power good” signals, which we are currently investigating.

I’m sad to report that Don Hunter died in January, very peacefully in his own room at home. He had just passed his 93rd birthday. His personal computers were a 16K-word Elliott 903 from Harwell (now with Andrew Herbert) and an 8K-word Elliott 903 from BCRA (now on display at the Cambridge museum). He used his 903s in an attempt to crack the Beale cyphers. He would often ring me up on a Sunday lunchtime, asking me to diagnose a 903 over the ’phone. Eventually it dawned on me that he was hoping to demonstrate it to Sunday lunchtime guests. I’ll miss his cryptic emails.


IBM Museum — Peter Short

The Hursley site remains closed. However, there still seem to be quite a few activities we can do from home. Curator Peter Short has put together the long-planned virtual tour of the museum, one of those things you always mean to get around to but never have the time for. We were fortunate to have a set of JPEG images from an earlier 3D scan of seven of the rooms. The tour is on the website at slx-online.biz/hursley/views.asp.


ICT/ICL 1900 — Delwyn Holroyd, Brian Spoor, Bill Gallagher

ICL1904S Emulator

We need to thank David Wilcox (our colleague in Canada) for his contribution to solving the problems with the Bryant 2/2A/2B (2804/2805/2806) fixed disc emulation. David has been working with our team for the last few months and is now active on the PF56 sub-project with Bill. Since writing the last report, we have had further information on the use of the Bryant FDS units from several people. They appear to have been more common than we first thought: at least two of the nationalised gas boards used them (one of them had four installed). Brian’s experience in the Health Service makes him wonder whether all Gas Boards were equipped with similar hardware, running the same software. Thank you for the interesting information received. The 7210 IPB (Inter Processor Buffer) has taken a few steps forward; however, the status values are proving tricky to get right. The 7210 IPB will allow communication between 1900 machines, and a working IPB is an almost ideal starting point for implementing the DDE, which connects the 1900 machines to the PF56, either as the 2812 disc controllers or the 7903 communications controller. There have been support requests from new users of both the 1904S emulator and the G3 Executive Emulator, which Brian has tried to answer despite not having used the latter himself for several years, in favour of the hardware emulators. That is not meant to be dismissive of G3EE: without it we would never have got the 1904S and other emulators off the ground.

PF56 Emulator

Particular thanks are due to David Wilcox, who has provided significant insights into both the 1900 executive and, importantly, the PF56 DCPs. He has determined how the 1900 executive tracing macros were used and has developed tracing macros that will certainly be of value when the 1904S starts to talk to the PF56. The PF56 emulation project has taken some important steps forward.
A number of improvements have been made to make the emulator’s progress easier to observe, which will be useful when we start trying to connect it to the 1904S. The tracing facilities can now be controlled interactively, a bug in controlling the Application Modules (I/O controllers) has been fixed, and a number of problems with the CPU’s integrated devices (Typewriter and Paper tape reader) have been fixed. The PF56 cross-assembler (#SPAA) that runs on the 1900 machine has had a few small bugs (introduced by Brian’s typing skills) fixed. A disassembler for the PF56 has been created and is allowing much improved understanding of the PF56 programs. General understanding of how DCPs work (EZDB in particular, but also DCPs in general) has improved greatly, now that there has been time to work on it. We have gained further understanding of the EZ5A DCP in an attempt to fill in the gaps in the available documentation. This has led to trying to reverse engineer both the EZ5A DCP and its management tools (#SS80, #SS81) to obtain as much insight as possible into what is expected of the DDE module and the relevant 1900 executives. Another undocumented PERI additive mode has been discovered and documented. Again, we are asking anyone who might have material relevant to the 7903/2812/PF56 to look at what they might have (books, notes, paper or magnetic tapes), even knowledge that they could put on paper or email: it has all proven valuable in the past. (Logic diagrams for the D.D.E.A.M. would be a breakthrough!) Anything regarding the communications protocols CO[0123] would also be a much appreciated addition to what is currently a sparse collection of relevant documents (almost none: just what is in DC&I and what can be gleaned from exec listings, and nothing at all on CO2 or CO3). As usual, we are prepared to cover reasonable photocopying/postage costs and to return any documents (if requested) after copying and scanning for future preservation.


EDSAC — Andrew Herbert

Tony Abbey visited TNMoC for essential EDSAC maintenance. EDSAC was turned on for the first time since late December 2020. It started normally and commissioning continued from where it had been left off. We are gradually working through a suite of test programmes that check out functions one by one, and are presently at test number 7. Bill Purvis has made some improvements to the database that is intended as the long-term indexed archive of technical material about the machine. Simon Porter has continued his campaign of checking chassis against schematics, and rewiring and remaking solder joints as necessary to enhance reliability and maintainability.


Turing Bombe — John Harper

There have been only a few visits to maintain the machine recently, and these have been ‘by appointment’ because the museum is officially closed. The weather has been very erratic recently, with large swings of temperature, and this has given us some concern about the conditions in the Bombe gallery. The machine has a great deal of bare metal and would suffer if it corroded. The heating has been left on, so there should not be a problem, but we would be happier if we knew exactly what was going on, so we have now installed a temperature and humidity logger. We did not expect there to be any problem with the conditions in the gallery, but it is reassuring to have the data. I am proposing to make a small demonstration item called a Drunken Drive. On one version of the four-wheel Bombe, more time is needed to carry out a further 26 tests while the fast wheels are on a given segment. To maximise that time, this device holds the fast drums almost stationary for as long as possible and then moves them rapidly to the next segment. There would be 36 of these devices on a machine, but I am proposing to build just one, running at slow speed so that visitors can see the action. We have the drawings in AutoCAD format, which suits the few parts that are more easily made by CNC and also makes it easy to produce multiple copies. We already have people saying that they will help, but I need more before we commit to proceeding.
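To make the Drunken Drive idea concrete, here is a minimal Python sketch comparing a steady drive with a dwell-and-jump profile. Every number in it is a hypothetical assumption, not taken from the Bombe drawings: the drum is taken to dwell for 80% of each segment period while creeping forward by only 5% of a segment, and "close enough to test" is taken to mean within 5% of a segment of the aligned position.

```python
import math

def steady_position(t, period=1.0):
    """Conventional drive: the drum advances uniformly, one segment per period."""
    return t / period

def drunken_position(t, period=1.0, dwell=0.8, creep=0.05):
    """Hypothetical dwell-and-jump profile, measured in drum segments.

    For the first `dwell` fraction of each period the drum creeps forward by
    only `creep` of a segment; in the short remainder it covers the other
    1 - creep of the segment, arriving at the next segment on time."""
    n, r = divmod(t, period)
    frac = r / period
    if frac < dwell:
        return n + creep * frac / dwell
    return n + creep + (1 - creep) * (frac - dwell) / (1 - dwell)

def fraction_near_alignment(position, tol=0.05, samples=1000):
    """Share of one (unit-length) segment period during which the drum lies
    within `tol` segments past its aligned position (assumed to be the
    start of the segment)."""
    hits = 0
    for k in range(samples):
        p = position(k / samples)
        if p - math.floor(p) < tol:
            hits += 1
    return hits / samples

print(fraction_near_alignment(steady_position))   # 0.05
print(fraction_near_alignment(drunken_position))  # 0.8
```

Under these assumed figures the steady drive spends 5% of each period near alignment and the dwell-and-jump profile 80%, which is the kind of gain the device is meant to demonstrate.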


IBM 360/20 Project

A warm welcome to our new colleagues and their IBM 360/20 (see Resurrection 86).


Software — David Holdsworth

Webservers

Both Andrew Herbert and Peter Onion (TNMoC) have successfully cloned my webserver and implemented their own emulation systems within it. I think this gives good hope for continuation beyond my mortality. I have been investigating the current state of Java servlets. Although the servlet API is defined within the javax library, it is not included in the Java runtime system, unlike the SSL code, which is also in the javax section of the library. The current servlet API is a superset of the one that I use, and all my servlets compile with the new API. However, my code to implement the API is rejected on the grounds that it does not implement all the methods. I regard it as a defect in the Java implementation that missing methods are not filled in automatically with stubs that throw UnsupportedOperationException, accompanied by a compile-time warning. The extension to the API is very voluminous, but I intend to incorporate the new methods.

Elliott Algol

Andrew Herbert now has a new 903 emulator written in C, and it is currently operating with my webserver (DHZipServ). Peter Onion has achieved a similar feat for the Elliott 803.

Leo III

Although there has been little change in this area, I am using it as the exemplar in my Royal Society lecture on 16th March, as my previous unfamiliarity with Leo III gives me the perspective of someone several years hence.

Compiler Compiler

In the previous edition we reported (twice) that IMP, the Edinburgh University version of Atlas Autocode, may have been implemented using Compiler Compiler. David Rees and Roland Ibbett have kindly been in touch to refute this speculation, and we are happy to accept their correction. Dik Leatherdale’s experiments with CC continue and have revealed the use of a circular, rather than an arithmetic, shift in compiled multiply or divide instructions, which gives the wrong result if the result is negative.
Although our version is taken from a 1962 source listing, he was surprised to discover the error still present in a newly-discovered 1967 listing. A one-line change in the source code happily clears the bug and, through the magic of the Compiler Compiler, it is only necessary to recompile a single subroutine to produce a new, clean version.
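The difference between the two kinds of shift is easy to show. The Python sketch below assumes a hypothetical 24-bit two's-complement word (the report does not say which word length or number representation the compiled code used) and compares halving and doubling by shifts. For positive values the two agree; for negative values the circular shift carries the wrong bits round into the vacated positions.

```python
WORD = 24                      # hypothetical word width, for illustration only
MASK = (1 << WORD) - 1

def to_word(x):
    """Two's-complement bit pattern of a Python int, truncated to WORD bits."""
    return x & MASK

def from_word(w):
    """Interpret a WORD-bit pattern as a signed two's-complement value."""
    return w - (1 << WORD) if w >> (WORD - 1) else w

def arithmetic_right(w, n):
    # Python's >> on a signed int is an arithmetic shift: sign bits come in on the left
    return to_word(from_word(w) >> n)

def arithmetic_left(w, n):
    return to_word(from_word(w) << n)

def circular_right(w, n):
    # bits falling off the right re-enter on the left, regardless of sign
    n %= WORD
    return ((w >> n) | (w << (WORD - n))) & MASK

def circular_left(w, n):
    # bits falling off the left re-enter on the right
    n %= WORD
    return ((w << n) | (w >> (WORD - n))) & MASK

for value in (8, -8):
    print(value,
          from_word(arithmetic_right(to_word(value), 1)),
          from_word(circular_right(to_word(value), 1)))
# 8 4 4
# -8 -4 8388604
```

Doubling shows the same effect: with this assumed word, shifting -3 left arithmetically gives -6, while rotating it gives -5, because the set sign bit is carried round into the bottom position.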

News Round-Up

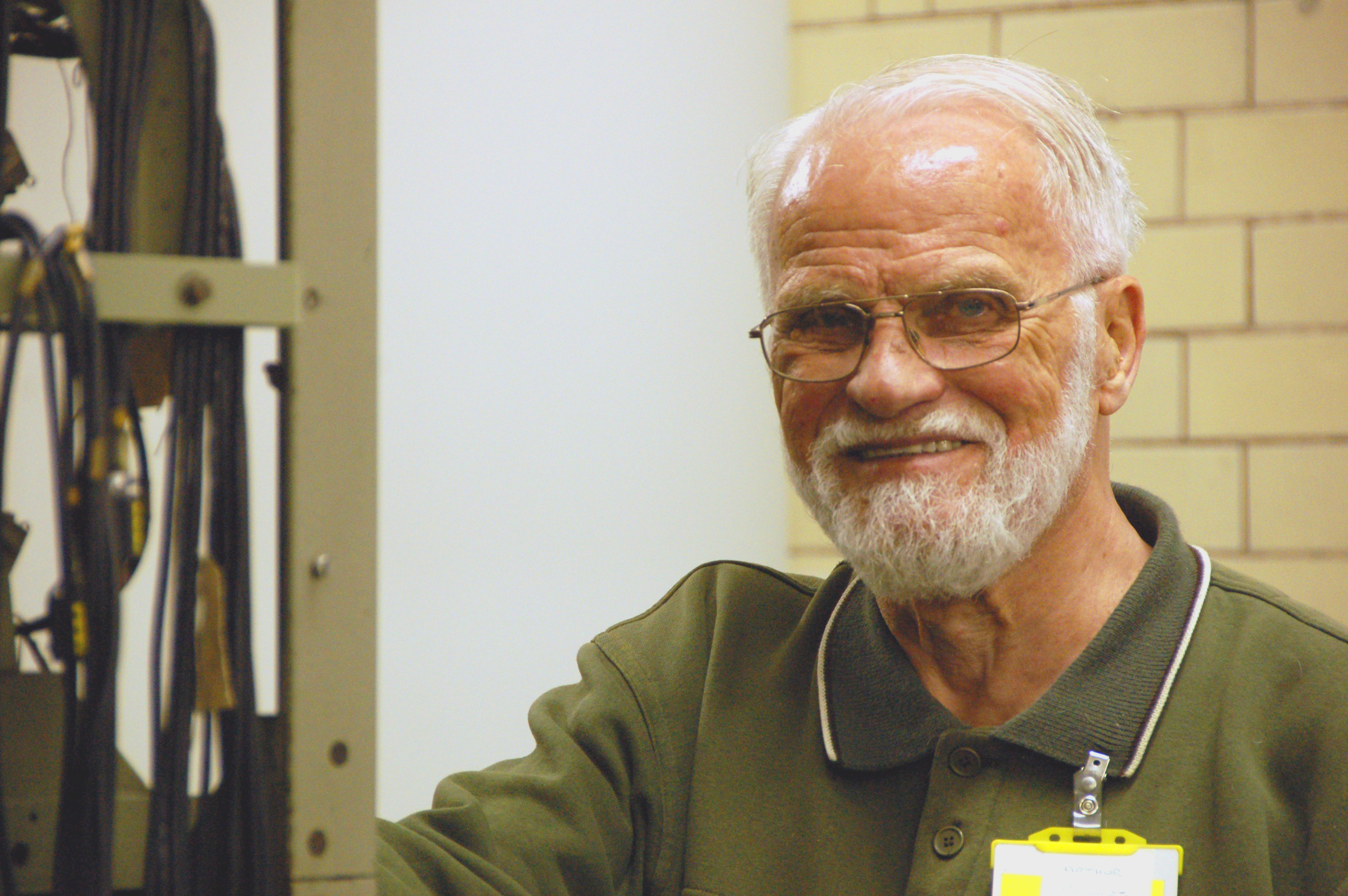

In January Doron Swade succeeded to the chair of the Society. Doron is probably best known as the builder of the Babbage Difference Engine at the London Science Museum, a century and a half after it was designed. But to the Computer Conservation Society he is affectionately known as the person who conceived of the Society in 1989 and co-founded it that year with the late Tony Sale. Doron is an engineer, historian and museum professional. He was Curator of Computing for many years at the Science Museum, London and later Assistant Director & Head of Collections. He has studied physics, mathematics, electrical engineering, control engineering, philosophy of science, man-machine studies, and history at various universities. He has published four books (one co-authored) and many scholarly and popular articles on the history of computing, curatorship, and museology. He is an Honorary Fellow of the British Computer Society and of Royal Holloway University of London. He was awarded an MBE in 2009 for services to the history of computing. Doron’s term of office was intended to start in 2019 but, for various reasons, he was unable to take up the reins at that time. So, for the last year and a half, David Morriss, our outgoing chairman, has stepped into the breach. We are grateful to David for his work as chairman over the last few years and, in particular, for extending his term of office in our time of need.

101010101

In December the BBC Radio 4 Today programme carried an interview with the Dean of the National Cathedral in Washington DC, one Rev. Randolph Hollerith. His brother Herman was Bishop of Southern Virginia. But the answer to the obvious question is “yes – great grandfather”. Who knew?

101010101

A forthcoming television re-make of The Ipcress File will include a scene featuring the Ferranti Atlas computer.
CCS members Toria Marshall and Dik Leatherdale have been trying to assist the production team in the seemingly impossible task of re-creating this iconic machine, having first been contacted by Emeritus Prof. Jim Miles of the University of Manchester.

101010101

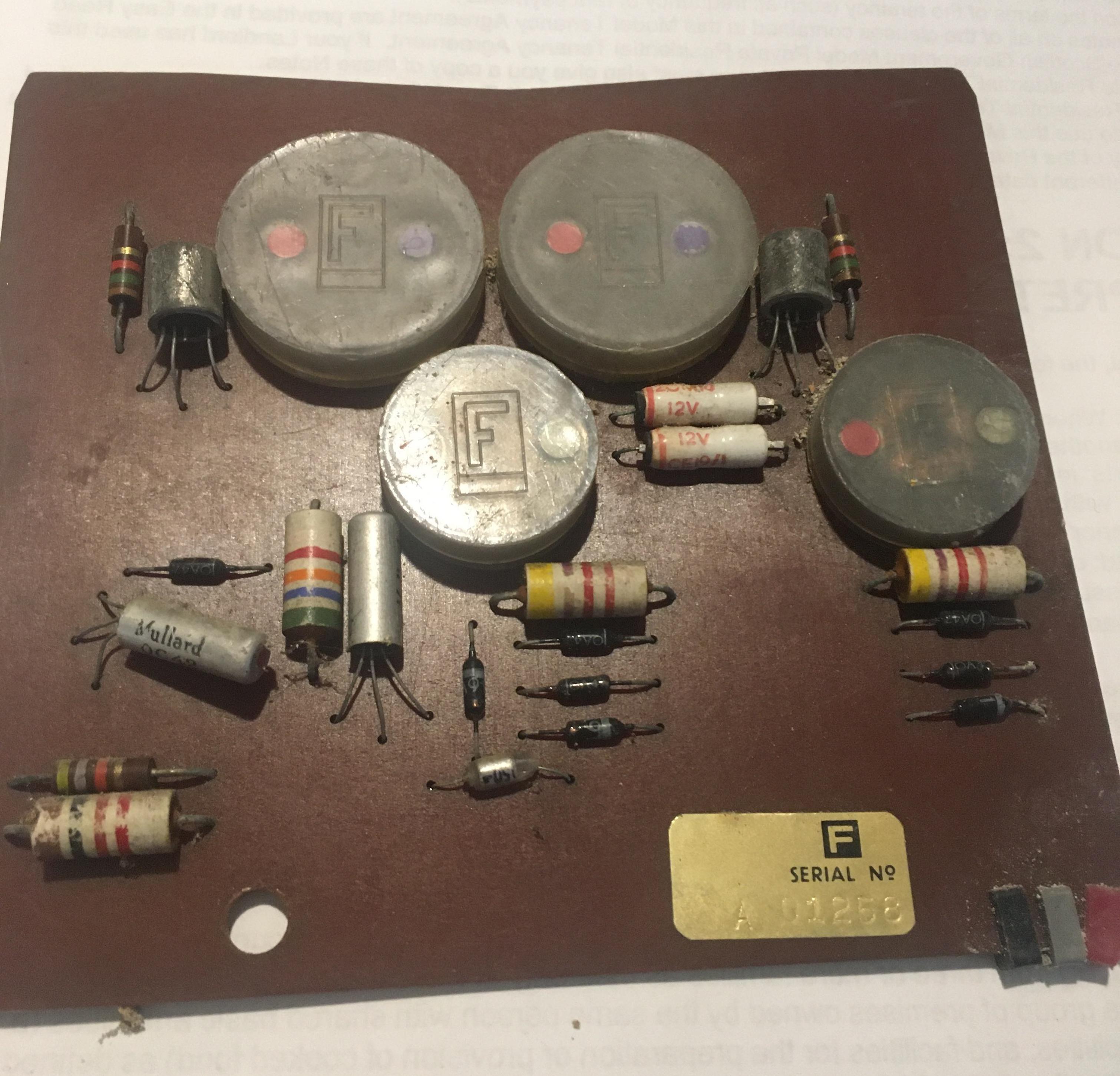

From time to time the Society is contacted to answer enquiries from members and non-members alike. In December we were contacted by Greg Michaelson, who asked us to identify this PCB on behalf of a colleague. Dik Leatherdale, Simon Lavington and Alan Thomson all piled in before Chris Burton definitively identified it as a board from Orion I. The round plastic objects house miniature transformers used in the Orion I logic circuits.

101010101

News from our good friend Herbert Bruderer: his two-volume Milestones in Analog and Digital Computing is now available in an English translation. Details at www.springer.com/de/book/9783030409739

The Cray Y-MP EL: An Overview

Clive England

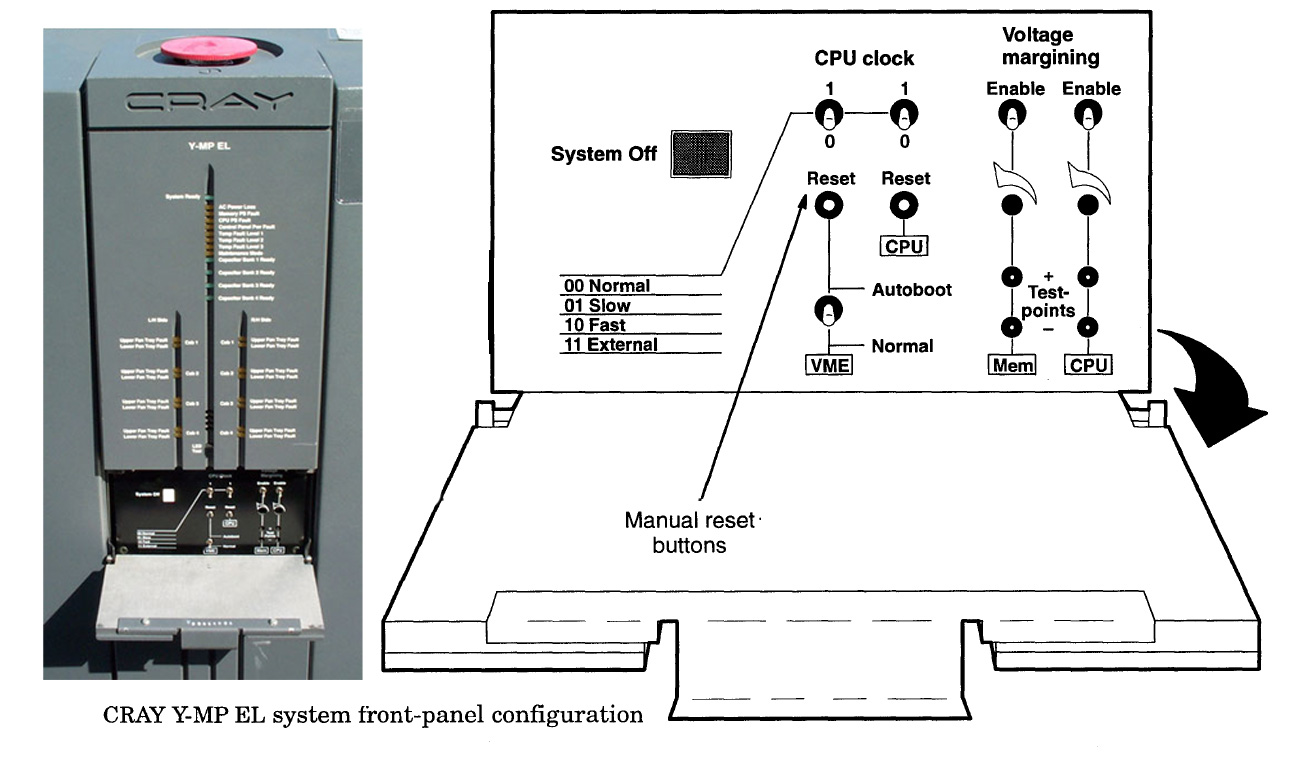

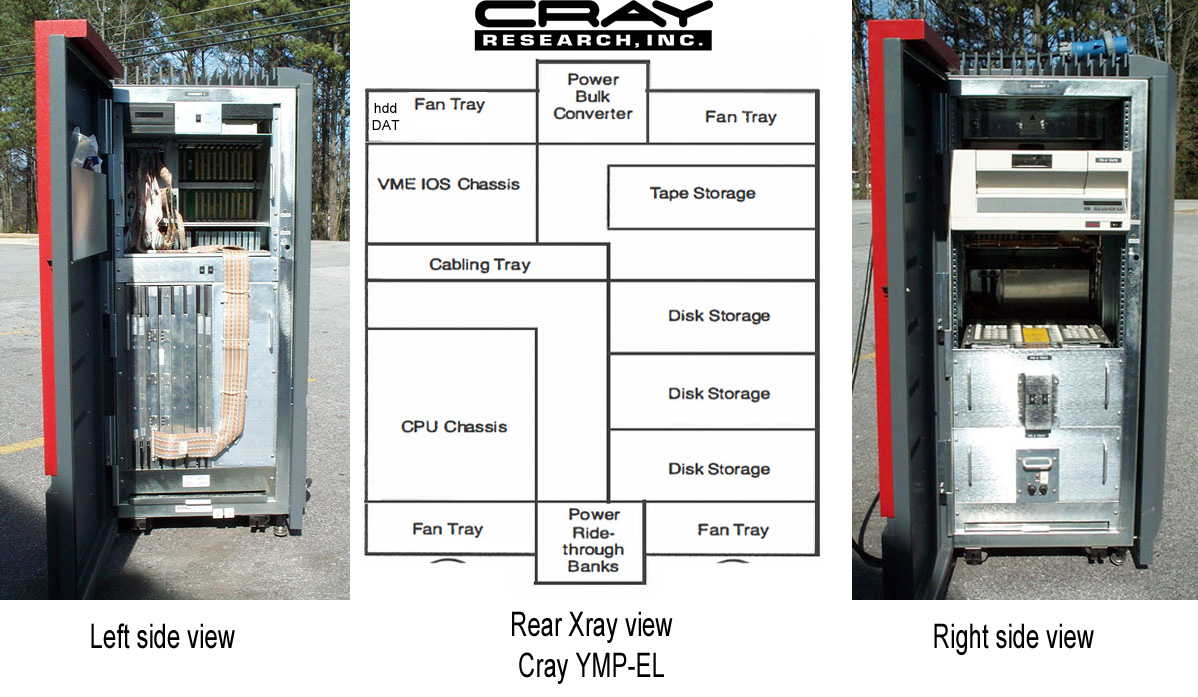

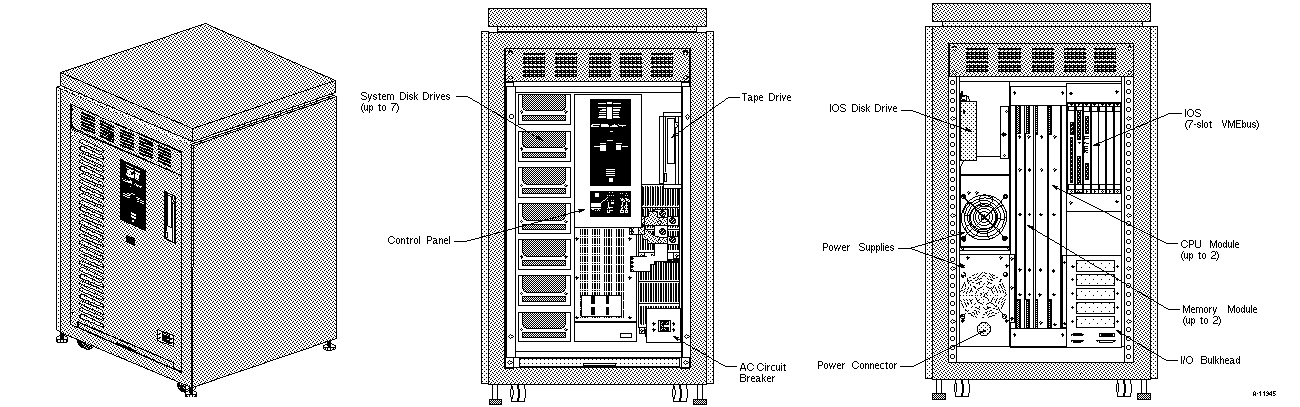

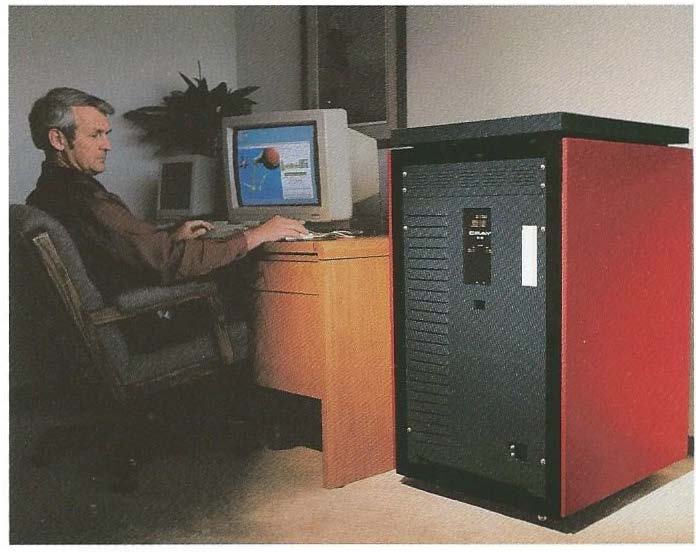

At the end of a virtual tour of The National Museum of Computing, I saw an old friend. Standing quietly by the very impressive Cray-1 from the Royal Aircraft Establishment was a grey and red cabinet about 5 feet tall with a big red button on top. This diminutive Cray supercomputer is the self-contained, air-cooled Y-MP EL. This “Entry Level” machine provided a hardware and software environment replicating its much larger and more expensive Cray cousins. During the 1980s the Cray-1’s successor, the Cray XMP, and later the Cray YMP, provided the backbone of scientific supercomputing around the world. As chip technology progressed in the early 1990s, and with an economic slump under way, the need for smaller, more cost-effective scientific computing platforms became apparent. Starting with the Cray Y-MP EL (Entry Level) designation, later systems became the Cray EL98, with more memory and processors. A smaller cabinet version was released as the Cray EL92. Key features across the EL range were vector-enabled processors, multiple sets of fast registers and fast, parallel memory interconnects, but with no virtual memory or paging. The famous designer Seymour Cray split from Cray Research in ’89 to pursue the Cray-3 project; Cray Research itself developed the XMP, then the YMP and later the C90 and T90 architectures through the ’80s and ’90s. Cray supercomputers were sold for their ability to crunch huge sets of numbers in the shortest possible time. Delivering accurate weather forecasts, shaping an aircraft wing or solving complex financial equations all rely on high performance processors to deliver timely results. The EL described here is one of the smallest Cray machines but does have Seymour Cray-inspired architecture in its bones. A technology start-up called Supertek created a CMOS implementation of the Cray XMP processor architecture with a view to complementing the Cray XMP at a much lower price point.
Launched in ’89, the Supertek S-1 sold about ten machines before the company started development of a Cray YMP processor implementation. After Cray acquired Supertek in 1990, the S-1 was briefly released to market, rebranded as the Cray XMS. In practice the processor emulation was well received, but the input/output subsystem (IOS) was not robust enough for production supercomputing. Then, in ’91, the Cray Y-MP EL was released: an evolution of the XMS with a similar VME bus based input/output subsystem, but emulating the later Cray YMP processor architecture. Offloading input/output operations to a specialist processor was a tactic deployed across the Cray system range, supported by direct memory transfers between IOS processors and main memory. This approach also allowed two separate system development threads, so that the Cray range could be refreshed by alternating releases of systems with new main processors and of new ranges of storage and network devices.

Hardware tour

Weighing in at 635 kg with a 6 kW power consumption, the Cray EL had a one square metre footprint and could be delivered through most doorways and lifts. One Eastern European university, however, did have to strip the skins and remove the disc and tape drives, then have six large workmen manhandle the chassis up two flights of stairs.

At the front of the chassis, the most obvious feature is the big red emergency power-off button on the top of the cabinet. As this button is just about at leaning height for taller folks, some sites put a machined Perspex tube around the button; other sites used part of a two-litre Coca-Cola bottle, or even an upside-down painted coconut shell, to prevent unexpected shutdowns. Just below the red button are the system fault and status lights and a concealed flap covering the reset and master clear buttons and a couple of diagnostic switches. Looking at the hardware internals, both the left and right sides of the main cabinet are hinged to reveal, on the left, the input/output subsystems (IOS) cage above the processor and memory boards and, on the right, the disc and tape storage devices. The left-hand side of the cabinet holds the two types of processor found in the EL. The full-width VME card cage, near the top, could be sub-divided into up to four independent input/output subsystems. Each IOS consisted of a Master I/O Processor, an I/O buffer board and a customer selection of VME peripheral cards. The first IOS also connects to a disc drive and to the system service QIC or DAT tape, and provides the console connection point for the system. The IOS disc contained the VxWorks-based IOS operating system, the main system configuration files and diagnostics. The main processor and memory cards in the lower cage ran the Unix-based UNICOS operating system to perform the actual customer workload.

The tall cards at the base of the left side of the system are the main processors and memory boards. The connection between the master IOS and the first processor was a 40 Megabytes per second rainbow-coloured ribbon “Y1” cable. An Ethernet card was also included in the first IOS, with fibre optic and hyper-channel cards also available. Early EL systems were available in one- to four-processor versions, with memory sizes from 256 to 1024 Megabytes and a memory bandwidth of 4.2 Gigabytes per second. The main memory cards, available in four sizes, have multiple banks with error and port conflict resolution hardware. Each processor in the system has four parallel memory ports. Each port performs a specific function, allowing different types of memory transfer to occur simultaneously. To further enhance memory operations, the bidirectional memory mode allows block read and write operations to occur concurrently. Memory logic hardware included SECDED (single-error correction, double-error detection, a scheme built from a set of overlapping parity checks) to avoid silent corruption errors. When a single-bit error is detected, the correction logic resets that bit to the correct state and passes the corrected word on. When a double-bit error is detected, a memory error flag is set in the running program’s exchange package, to avoid unpredictable results, and the error is referred to the operating system.
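To illustrate how SECDED tells a correctable single-bit error from a double-bit one, here is a minimal Python sketch of the classic extended Hamming code on a 4-bit data word: three Hamming check bits plus one overall parity bit. This is the textbook construction, not the EL’s actual check-bit layout, which the article does not describe; the same idea with seven Hamming check bits plus an overall parity bit covers a 64-bit word.

```python
DATA_POSITIONS = (3, 5, 6, 7)   # Hamming(7,4) data bit positions
CHECK_POSITIONS = (1, 2, 4)     # Hamming check bit positions; index 0 holds overall parity

def encode(nibble):
    """Encode 4 data bits as an 8-bit extended Hamming codeword (list of 0/1)."""
    bits = [0] * 8
    for i, pos in enumerate(DATA_POSITIONS):
        bits[pos] = (nibble >> i) & 1
    for p in CHECK_POSITIONS:
        # each check bit makes the group of positions whose index has bit p set even
        parity = 0
        for q in range(1, 8):
            if q & p and q != p:
                parity ^= bits[q]
        bits[p] = parity
    bits[0] = sum(bits[1:]) % 2    # overall parity: the whole word has even weight
    return bits

def decode(word):
    """Return ('ok', data), ('corrected', data) or ('double', None)."""
    bits = list(word)
    syndrome = 0
    for q in range(1, 8):
        if bits[q]:
            syndrome ^= q          # XOR of the positions of all set bits
    odd = sum(bits) % 2            # 1 if an odd number of bits have flipped
    if syndrome == 0 and not odd:
        status = "ok"
    elif odd:
        # assume a single flip; syndrome 0 means the overall parity bit itself
        bits[syndrome] ^= 1
        status = "corrected"
    else:
        # even number of flips with a non-zero syndrome: detect, do not correct
        return "double", None
    data = sum(bits[pos] << i for i, pos in enumerate(DATA_POSITIONS))
    return status, data

word = encode(0b1011)
word[6] ^= 1                       # simulate a single-bit memory fault
print(decode(word))                # ('corrected', 11)
word[3] ^= 1                       # a second fault in the same word
print(decode(word))                # ('double', None)
```

The overall parity bit is what separates the two cases: one flip makes the word’s parity odd, so the syndrome can safely be trusted to locate it, while two flips leave the parity even but the syndrome non-zero, which can only be reported.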

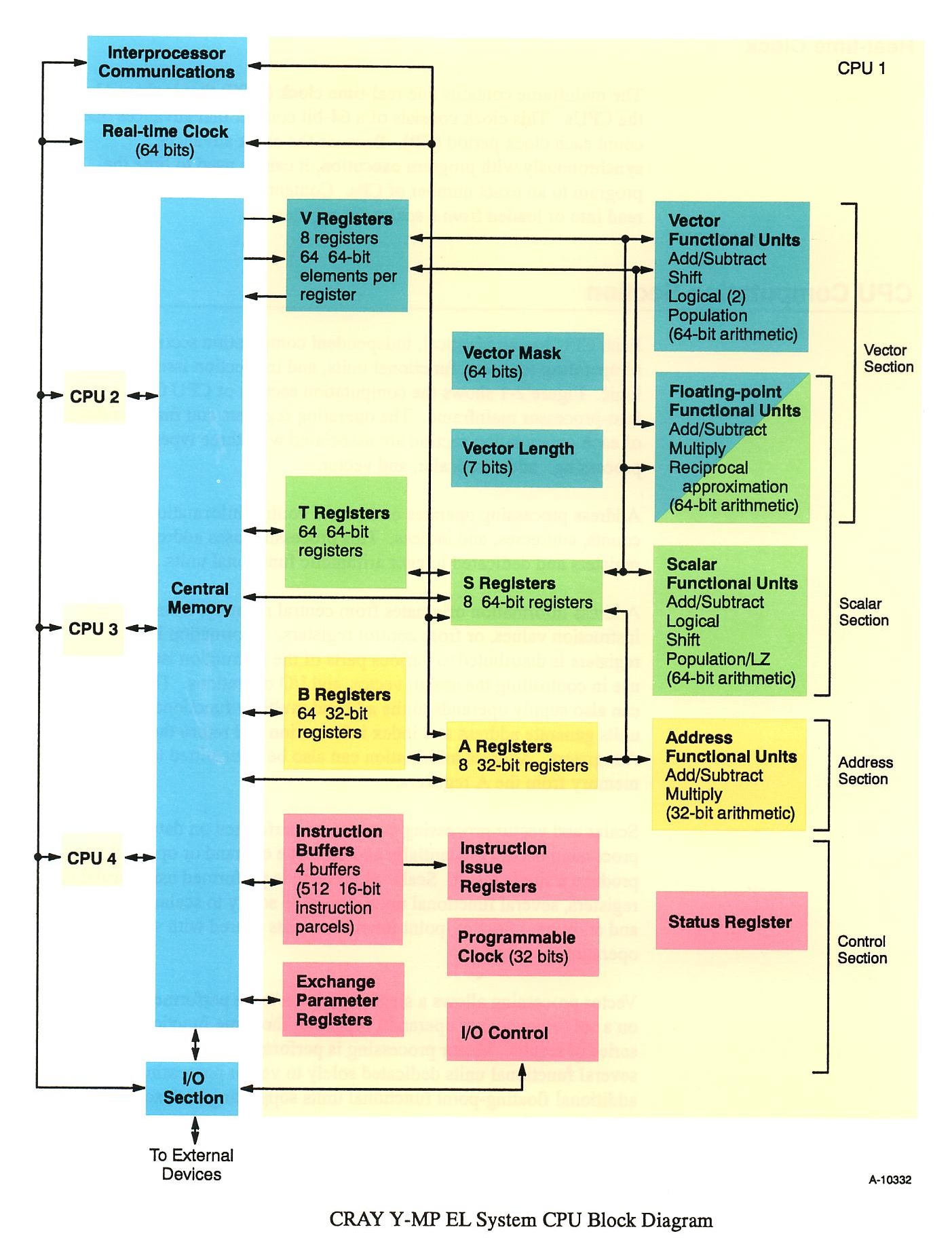

The right-hand side of the cabinet holds the main hard drives and the optional tape units. The tape options offered were an autoloading low-profile reel tape drive or a 3480-type cartridge drive, and an 8mm helical scan tape system. Early EL systems had two types of main storage hard drive: IPI for high performance or ESDI for larger storage size. Only two IPI drives fitted in a drive bay, but eight ESDI drives could be put in the same space. Later EL94 and EL98 systems had SCSI-connected DD5s 3.1 GB Seagate disc drives. Disc array subsystems with hot spares and automatic remapping of flaws were available. Customers could later purchase a SCSI card to attach external generic hard drives. Customers could order double or triple width main cabinets to hold extra input/output processors and peripherals.

Cray processors

The Cray processor internal architecture was built to be fast, concurrent and feature rich. To the modern eye the system looks very old-school, with its different registers for different data and no virtual memory or fast context switching, but it was designed to match the larger Cray YMP processors, whose development heritage traces directly back to the innovative Cray-1. The later Cray architectures built on the Cray-1 processor design by adding more parallel processors, more and longer vector registers, and more “other” registers. A flexible instruction set could, in the right conditions, carry out scalar, vector and address calculations independently and concurrently. Each of the processors included multiple registers to hold data and functional units to process data (see diagram). Vectors, being lists of values, play a critical part in achieving high performance calculations. The eight V registers are each 64 words long, with 64-bit words. The processor is able to stream from memory directly to the V, T, A, or B register sets, between vector registers via a functional unit, and back to memory or to an output device.
These streaming operations in combination with pipelined functional units allow up to 64 calculations to be performed in hardly more time than it takes to pull the values from memory.
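A rough timing model shows why streaming through pipelined units costs so little extra. In this Python sketch each stage (memory fetch, functional unit) delivers its first result after a fixed latency and then one result per clock, and chaining stages back to back simply adds their start-up latencies. The latency figures are hypothetical, chosen only for illustration; real EL timings will differ.

```python
VL_MAX = 64            # elements in one V register, as described in the text

MEM_LATENCY = 17       # hypothetical clocks before the first word arrives from memory
ADD_LATENCY = 6        # hypothetical clocks through a floating-point add unit

def unpipelined_cycles(n, latency):
    """Each element waits for the previous one to finish the whole path."""
    return n * latency

def pipelined_cycles(n, *stage_latencies):
    """Streaming model: the first element takes the summed stage latencies to
    emerge (stages chained back to back), then one result completes per clock."""
    if not 1 <= n <= VL_MAX:
        raise ValueError("n must fit in a single vector register (1..64)")
    return sum(stage_latencies) + (n - 1)

load_only = pipelined_cycles(64, MEM_LATENCY)
load_then_add = pipelined_cycles(64, MEM_LATENCY, ADD_LATENCY)
one_at_a_time = unpipelined_cycles(64, MEM_LATENCY + ADD_LATENCY)
print(load_only, load_then_add, one_at_a_time)   # 80 86 1472
```

With these assumed figures, loading and adding 64 elements costs only six clocks more than loading them, whereas pushing each element through memory and adder one at a time would take about seventeen times as long.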

The system memory is arranged into sections and banks to allow simultaneous and overlapping memory references. Simultaneous references are two or more references that begin at the same time; overlapping references are one or more references that start while another memory reference is in progress. Special processor operations such as gather/scatter, bit population count and leading zero count supported specialised software used in signal processing at certain valued government customer sites. An exchange package and exchange registers combine to hold the state of a processor at the point that it switches to another task. Having many registers made context switching a slow operation, predisposing the machine to run best with large, industrial-strength workloads. The interplay of registers, memory references and functional units resulted in a complex processor architecture. Constructed from CMOS Very Large Scale Integrated (VLSI) circuits, the processors ran with a 30 nanosecond clock period (about 33 MHz), each delivering a peak performance of 133 Megaflops (millions of floating-point operations per second). This enabled an EL98 system, with eight such processors, to achieve a prestigious one Gigaflop rating (8 × 133 ≈ 1,064 Megaflops).

What was it like to service these rare and complex machines? When I was a software support engineer for the UK Cray EL systems, a customer asked “Why did the process accounting fail to run last night?”. I started looking around the system using the remote support modem for access, and the system was behaving normally. However, the cron.log file showed many time-triggered but rejected cron jobs. The immediate cause was the cron job queue reaching its maximum of 25 outstanding jobs. This was why the accounting process had not run, but what was filling the cron queue? It was clear from the ps command output that olcrit, the on-line processor health check command, was the problem. Run hourly from cron, these diagnostics would normally take a second or two to check the system health.
Here were 25 sets of diagnostics queued waiting to run. The olcrit diagnostic is attached to each processor in turn, using the ded(icate) command. Each olcrit program would start, wait until its nominated processor exchanged to the kernel, run processor functional checks for a second, and exit. Here was a queue full of diagnostics waiting to get into processor 2, which was stalled and not exchanging into kernel space. A site visit was arranged to check the contents of the program counter in the stalled processor by running another diagnostic program on the master IOP. This showed that the processor was hung on a gather/scatter instruction, one of the two instructions (test/set being the other) that could cause early EL processors to stall. As a result of this incident and processor hangs at a couple of other sites, all the “PC-04” chips on every EL processor board were manually replaced at customer sites.

Software tour

The Cray EL had two operating systems: in the IOS, a real-time operating system based on VxWorks, and in the main processors, UNICOS. VxWorks controls the I/O peripherals and, most importantly, after cold start-up checks were completed, it started, stopped and diagnosed the main processor boards. During the start cycle the IOS would halt the main processors, push a boot loader into main memory and deadstart the main processors. The boot loader located the root filesystem, and the main operating system, UNICOS, would be started in single-user mode. The final step was switching to multi-user mode, after which the system was ready for service. Cray EL systems ran UNICOS, a “full cream Unix” that was bit-compatible with the UNICOS run on the full-size, multi-million-dollar Cray systems. Designed to be multi-user from the outset, UNICOS has a full suite of user resource controls able to track memory usage, processor usage, process count, disc quota and even the quantity of printout generated.
User controls are effected via a user database with fields and security procedures way ahead of the traditional Unix “/etc/passwd” password file. Full system process cost accounting was available for sites that needed to charge end users by resource usage. Process accounting was also a helpful diagnostic tool for retrospectively exploring system performance issues: complaints of system slowness over the weekend or unexpectedly long program run times could be placed into context for system administrators, and scheduling decisions adjusted. Extensive system logs and routine diagnostic runs would detect any emerging hardware errors before they became serious. UNICOS came pre-installed on the system drives but could be reinstalled from scratch using the maintenance tape system. Early system updates included a tedious 10-hour UNICOS re-linking stage, but this was mitigated by using “chroot” on an alternative root filesystem, and was later replaced with a binary distribution for major upgrade cycles. Another UNICOS feature, inherited from bigger systems and optional for the EL, was Multi-Level Security (MLS). MLS allowed UNICOS to isolate groups of users into specific compartment(s) and level(s). Special commands and privileges were needed to move files between these sub-sections. Restrictions on the output of commands such as ps and ls helped to separate communities, preventing back-channel communications. The concept of an all-powerful root user was replaced by various system administration privileges. Popular with defence and government sites, MLS UNICOS was not widely used by commercial customers. Built into UNICOS was an early disc volume manager providing a couple of valuable performance features. Combining large numbers of disc drives into a single usable volume was facilitated by volume configuration options in UNICOS applied at boot time. Filesystems could be arranged so that file directory metadata resided on faster drives, with the bulk storage on larger, slower drives.
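The article does not say how the UNICOS volume manager laid blocks out across drives, so the following Python sketch shows, as assumptions rather than a description of UNICOS, two common ways of presenting several drives as a single volume: simple concatenation, which only adds capacity, and round-robin striping, which spreads consecutive chunks across drives so that a large transfer can keep them all busy at once. The function names and parameters are hypothetical.

```python
from bisect import bisect_right
from itertools import accumulate

def concat_map(logical_block, drive_sizes):
    """Concatenation: drive 0's blocks come first, then drive 1's, and so on.
    Returns (drive index, block number on that drive)."""
    starts = [0, *accumulate(drive_sizes)]     # first logical block of each drive, then the total
    if not 0 <= logical_block < starts[-1]:
        raise ValueError("block lies outside the volume")
    drive = bisect_right(starts, logical_block) - 1
    return drive, logical_block - starts[drive]

def stripe_map(logical_block, n_drives, stripe_blocks):
    """Round-robin striping over equal drives: consecutive chunks of
    `stripe_blocks` blocks go to drive 0, 1, ..., n_drives - 1, then wrap."""
    stripe, offset = divmod(logical_block, stripe_blocks)
    drive = stripe % n_drives
    return drive, (stripe // n_drives) * stripe_blocks + offset

print(concat_map(120, [100, 50, 200]))                      # (1, 20)
print([stripe_map(b, 4, 8)[0] for b in range(0, 64, 8)])   # [0, 1, 2, 3, 0, 1, 2, 3]
```

Concatenation keeps each file region on one drive, so throughput is that of a single spindle; striping trades that simplicity for parallel transfers, which is the sort of performance gain a volume manager of this kind would be used for.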
The concept of using large chunks of memory as a solid-state drive had been part of Cray architecture since the early ’80s. On the EL this feature appeared as the ability to add large chunks of memory as filesystem ldcache space, improving the performance of high-turnover system or work files. Filesystem storage could be managed with individual user quotas and the Data Migration Facility (DMF). DMF pushed files on to a tape drive and released the disc space; migrated files could be recalled automatically when later accessed. Used mostly on the larger sites that had access to an automatic tape library, DMF was a complex system that caused some support headaches, but it could extend storage space from Gigabytes to Terabytes. Database-controlled DMF was no substitute for extra working storage but, given the expense of disc drives at the time, it was very cost-effective when storing and using large datasets and for system backups. It was considered good practice to back up the residual file stubs and the DMF database by some means other than DMF itself. The real gem of Cray software was the Programming Environment. Inherited from the established Cray range, compilers were provided for C/C++, Fortran, Pascal and Ada, backed by a host of development and code analysis tools. Getting the most out of an expensive Cray machine involved carefully looking at how application programs performed. Some programs would port easily from workstations and immediately gain a moderate speed-up, but that alone would not justify the extra cost of a Cray. Techniques such as vectorisation, parallelism and the use of optimised libraries could make dramatic program performance improvements. To ease the transition, Cray compilers had features that would automatically recognise performance opportunities, or the programmer could add directives that would invoke parallelism or direct the compiler to perform loop combining and loop unrolling. Tools such as:

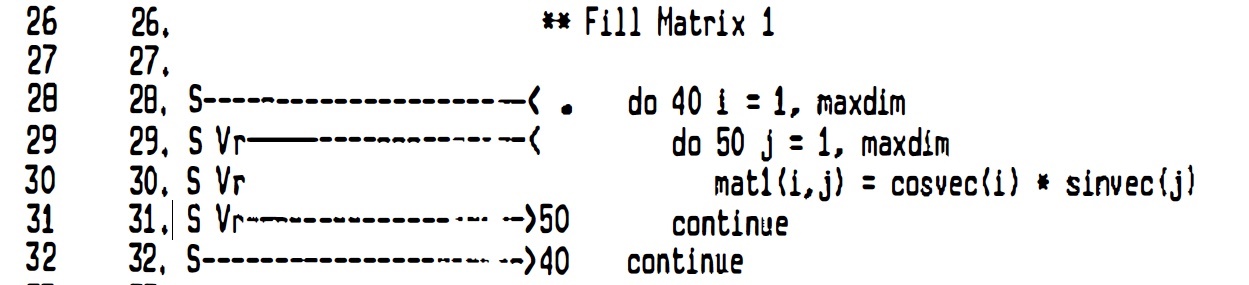

With the correct compilation flags enabled, the compilers listed the performance areas identified in a program. This snippet shows the CFT77 compiler identifying the scalar outer loop (‘S’) and the vectorizable inner loop (‘V’), which has been unrolled (‘r’).
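The listing above reports an inner loop that CFT77 has unrolled. As an illustration only, the Python sketch below applies the same transformation by hand to a hypothetical dot-product loop. The unrolled version handles four elements per pass and uses a remainder loop for lengths that are not a multiple of four, so it executes fewer loop-control tests. The function names and the unroll factor of four are assumptions for this example and are not taken from the Cray compilers, which could apply such transformations automatically to compiled code. An interpreted Python loop gains little or nothing from the change.

```python
from typing import Sequence


def dot_rolled(a: Sequence[int], b: Sequence[int]) -> int:
    """Straightforward loop: one multiply-add per iteration."""
    total = 0
    for i in range(len(a)):
        total += a[i] * b[i]
    return total


def dot_unrolled_by_4(a: Sequence[int], b: Sequence[int]) -> int:
    """Same result, with the loop body replicated four times (hypothetical example)."""
    n = len(a)
    total = 0
    i = 0
    # Main unrolled body: four elements per iteration, so a quarter as many loop tests
    while i + 4 <= n:
        total += (a[i] * b[i] + a[i + 1] * b[i + 1]
                  + a[i + 2] * b[i + 2] + a[i + 3] * b[i + 3])
        i += 4
    # Remainder loop for the last few elements when n is not a multiple of four
    while i < n:
        total += a[i] * b[i]
        i += 1
    return total


if __name__ == "__main__":
    x = list(range(10))
    y = list(range(10, 20))
    print(dot_rolled(x, y), dot_unrolled_by_4(x, y))
```

With integer inputs both functions return 735 for the example vectors. The unrolled body sums four products before adding them to the running total, so the additions are grouped differently. With floating-point data the two results may therefore differ in the last bits.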

First seen in Cray language compilers, OpenMP exploits many parallel performance features. Today OpenMP is widely used on modern Intel-powered systems. By including a few well-chosen OpenMP directives, a program can get the most out of modern processors without the whole program having to be rewritten as a multi-threaded application.

UNICOS provided a rich runtime work administration environment on all systems, large and small. Work administration was centred on the Network Queuing Environment, an industry-standard batch queuing service. With it, systems could be loaded up with work from a diverse user base and run at full steam 24/7. Features such as checkpointing allowed long-running jobs to be frozen and restored. System administrators trying both to fill the machine and to provide the best turnaround for priority clients used “The Fair Share Scheduler”. The workload balancing conundrum resulted in multiple work scheduling and queuing arrangements. One site reported “Fair Share is the standard scheduling algorithm used for political resource control on large, multi-user UNIX systems. Promising equality, Fair Share has instead delivered frustration to its users, who perceive misallocations of interactive response within a system of unreasonable complexity.” The Los Alamos site analysts went on to create an Opportunity Scheduler. Opportunity Scheduling differed from Fair Share scheduling in three key respects:

An insider reports on features that annoyed Cray EL customers

The site later reported “Opportunity Scheduling first made its appearance in October 1995 and has been running on all of our production systems since April 1996. The scheduler is running on two YMP-8s, a J-32, and a T-94. The modified kernel supports both Fair Share and Opportunity Scheduling, switchable via a runtime flag.”

Evolution of the Cray EL into EL92, EL94 and EL98

In 1993, Cray Channels (a Cray marketing and technical magazine) announced upgrades for Cray EL customers. Modules could be replaced to upgrade systems into a Cray EL98, or new systems could be purchased with more processors and memory. “Cray Research’s strong-selling Cray EL entry level supercomputing system, 130 orders booked in its first full year, got even better this spring. In March the company introduced the Cray EL98 system, with twice the peak performance of the Cray EL configurations at the same price and with four times the central memory capacity. Because the Cray EL98 system uses the same chassis as Cray Research’s previous entry level system, customers who already have a Cray EL system can easily add or exchange modules to upgrade their processing power. Cray Research currently has 11 customers upgrading to Cray EL98 systems; seven of these organizations are upgrading to fully configured eight processor systems. With up to eight processors and 4096 Megabytes of central memory, the Cray EL98 supercomputer provides a peak performance of over 1 Gigaflops. To deliver the highest levels of sustained performance, the balanced architecture of the Cray EL98 system provides four Gigabytes/s of total memory bandwidth in combination with one Gigabyte/s of I/O bandwidth to storage” ... “Pricing for a two-processor Cray EL98 system begins at $340,000 in the U.S.” The ex-Swansea University Cray EL on display at The National Museum of Computing was upgraded from an EL94 configuration to an EL98.

The Cray EL was also repackaged into the least expensive Cray system ever. “With a U.S. starting price of $125,000 and a footprint of about four square feet, the EL92 is limited to two processors and 512 Megabytes of memory.” The parts and arrangement looked similar to the EL but were not interchangeable. The EL92 later doubled up to the EL94. Its power and cooling requirements were suitable for an office environment, but the system was just too big and noisy to go under a desk.

In conclusion

The Cray EL range provided an expanded customer base and complemented the larger Cray systems by providing an identical software development and production environment. Moving the company from creating a handful of big, complex systems each year to shipping two or three systems each week did cause big manufacturing changes in Chippewa Falls. The Cray EL was never a mass-market machine, but it established a range of air-cooled departmental systems that brought supercomputing power to mainstream engineering, climate, academic and financial customers. With an eye to the future of bigger systems, one 1993 press release noted “The EL92 systems can also be ordered with the CRAY T3D Emulator, a software tool that helps programmers using the CF77 programming environment to develop and test applications for the CRAY T3D massively parallel system.” The Cray EL range was a sales success: “In 1992, Cray Research booked orders for 130 CRAY EL systems, exceeding its original target of 100 orders for the year, according to Bob Ewald, CEO of Cray Research. Cray Research’s entry-level systems brought in more than 70 new-to-Cray Research customers in the aerospace, automotive, chemical, financial, construction, utilities and electronics industries, as well as universities and environmental and general research centres.”

Personally, in eight years of working at Cray Research, I was only ever a small chip in a big machine. Even so, I always felt the pride of a car mechanic who works for Lamborghini. Dealing directly with high-value customers allowed me to make a difference and taught me some valuable customer service skills. I was always learning and never knew enough. But I valued the really clever colleagues throughout the organisation who worked together to deliver exceptional computers into customer production environments.

By the end of the ’90s the air-cooled EL range had evolved into the Cray J90. At the top of the computing power tree, the big vector T90 machines were giving way to the massively parallel Cray T3E systems, but that’s another story. I would be very interested to hear your stories about the Cray machines you came across and what problems they solved for you.

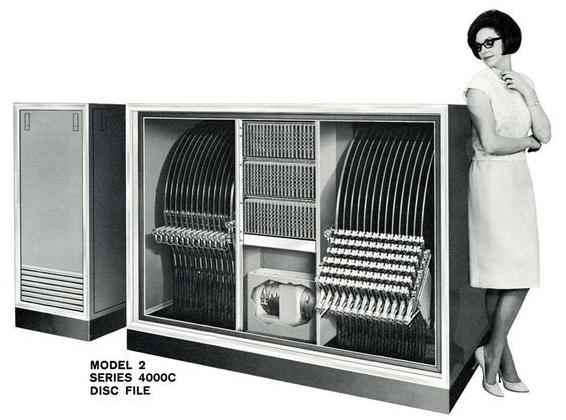

Bryant disc file at BMC Service, Cowley, Oxford

John Harper

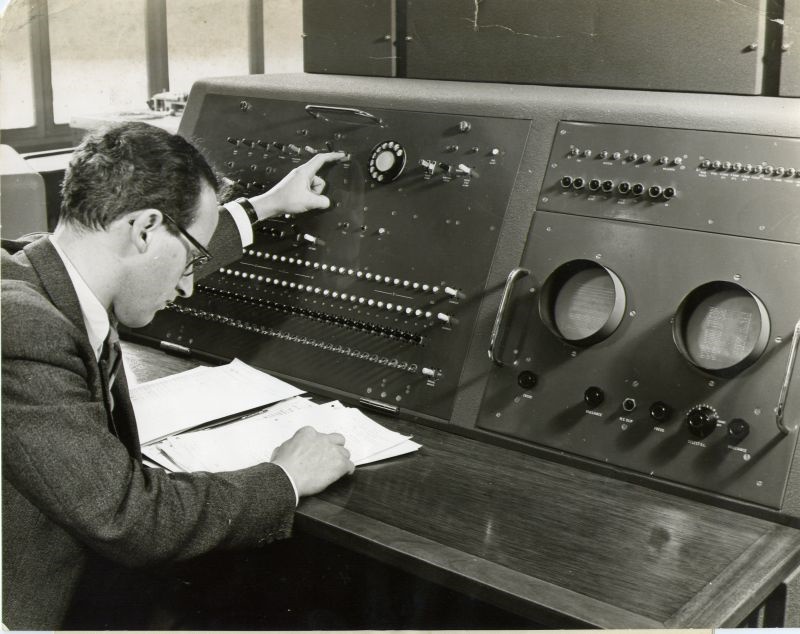

An ICT 1500 (a re-badged RCA 301 with RCA 501 tape decks and the Bryant 4000 series disc) was installed at British Motor Corporation (BMC) Service in Cowley to improve BMC’s ordering and stock control system. Previously BMC had a Powers Samas round-hole 65-column card installation. At that time a very large area was laid out with tables holding stocks of pre-punched cards for every single spares item that might be needed anywhere in the world. Ladies walked around with order forms, selecting the card that matched the part number on the order. There were also stocks of cards for every dealer worldwide. These cards were taken to a card punch, where quantities were punched in and verified. The cards were then processed by two Programme Controlled Computers (PCCs) to add prices etc. Finally these cards were fed into tabulators, with output going to the pickers and dispatchers.

When the 1500 was installed, all the information relating to every single BMC part number was transferred onto the Bryant disc. Did BMC realise how risky its operation was, with absolutely no contingency? At this time there was no other company or customer site in ICT with the same configuration that it could use as a back-up. For the system to work, all the hardware had to work. This meant not just the Bryant disc but all four tape decks (needed to carry out a sort), the Analex printer and all the other parts of the configuration. To reduce the risk, BMC took out a maintenance contract with a semi-permanent ICT field engineer on site for both the morning and evening shifts. I was one of them. Looking back on it, perhaps the customer did not realise what a risk they were taking. Their archiving and back-up strategy was perhaps adequate but somewhat unusual: the computer room manager took tapes home with him.
The configuration had no resilience, and no similar configuration existed within ICT to act as back-up. This put a great deal of pressure on the field engineering staff to keep the whole configuration working. We were constantly being told how important it was to BMC and how much each minute of lost service would cost. Fortunately the 1500 was very reliable, including the disc file. Sadly, when a Bryant disc was fitted to the ICT 1900 a year or two later, I understand that there were many outages, but I do not have any details. The full spec of the Bryant disc can be found at www.computerconservationsociety.org/rd/bryant0.htm. A single disc is on display at TNMoC.

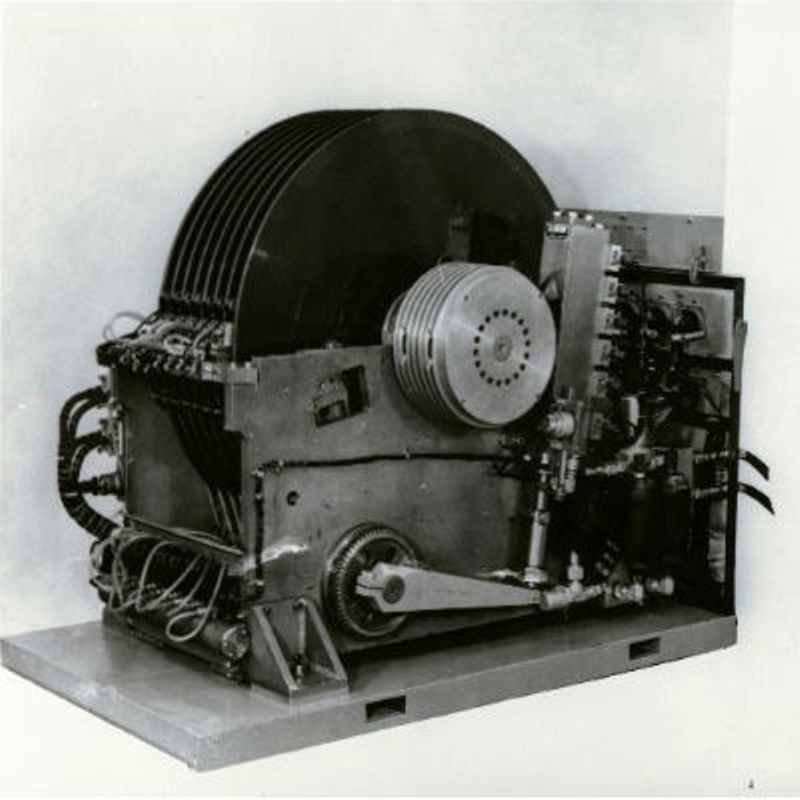

The one at BMC rotated at 900 rpm. A 100 A three-phase mains supply fed two powerful motors. One drove the disc, and the other, housed in a second cabinet, drove the hydraulic pump. This image of the main mechanism gives a good idea of what a beast it was, with the discs measuring 39 inches in diameter. The ICT 1500 system was in use from about 1961 to 1965, when an ICT 1900 replaced it.

The way in which the heads were positioned on one of the 256 tracks was interesting. The movement was driven by eight hydraulic linear actuators, effectively connected in series. Each section had a piston with hydraulic fluid on each side and a valve that opened or closed that section. The throws of the eight pistons were 1, 2, 4, 8, 16, 32, 64 and 128 units respectively. This assembly was attached to a heavy arm, as seen in the lower right of the image above. In this way any track from 0 to 255 could be selected through eight electrically controlled valves. This all happened very quickly, which was why such a powerful hydraulic pump was needed.

However, on one occasion there was nearly a major loss of service when a pump was being changed. The hydraulic pump on other machines around the world had been giving trouble, although it had caused no actual breakdown at BMC. Bryant sent out a supposedly improved replacement pump. (On later Bryant disc drives in ICT a more conventional gear pump was fitted.) The pump replacement was planned to take no more than an hour or so. We started after the late shift finished, around 11pm, and had the whole night to complete the work if needed. The pump was a swash plate pump (see note 1) with a ‘six shooter’ barrel and a piston in each aperture. A control system moves the swash plate to keep the output of the pump at the necessary controlled pressure and flow rate. The first part of the job was to drain some of the hydraulic oil and disconnect the oil pipes and electrical connections before unbolting and removing the pump. The next stage was to fit the replacement.
Something did not quite look right as we fitted the replacement, but we pressed on to the testing stage. After checking and checking again for about two hours we still could not get the pump to work. My experience of hydraulics with tractors on our farm helped. Our boss, who was also present, would not accept that the pump had been assembled incorrectly. There were several quality stamps on the pump and on the paperwork, so he was not convinced that we should invalidate the warranty by opening up the pump.

1. A swash plate is a round plate fixed to a constantly rotating shaft. The plate can be tilted, resulting in a reciprocating motion at each point of the circumference. Slave shafts are arranged around the plate, parallel to the shaft, and move up and down as the plate rotates (a camshaft achieves a similar result). In this case the slave shafts drive pumps. The degree of tilt can be controlled. When the plate is perpendicular to the drive shaft there is zero motion in the slaves, and hence no pumping takes place. See also www.computerconservationsociety.org/rd/bryant1.htm.

The breakthrough came later, when our boss had to take a call on the other side of the building. As soon as he was out of view we took the top off the oil reservoir, and when he returned we could see air bubbles coming up in the reservoir. We then opened up the pump and reversed the two halves, using the old pump as a pattern. We ran all the tests successfully and left at about 3am. Nothing was ever said, except that somebody thought that we should have called in Bryant. I can imagine what the customer would have said if we had caused a three- or four-day outage, with garages all over the world asking what had happened to their urgent “Vehicle Off the Road” (VOR) orders. We did not tell the customer how close we came to putting the system off the air. There was pressure on the computer room managers to meet a turnaround time.
This applied in particular to VOR orders. Generally, when we had a problem with a particular unit, BMC managers became very agitated and telephoned our field engineering managers. We then had to explain what the problem was and reply to many telephone calls, which took us away from fixing it. This did not help matters and only made the repair take longer.

Another problem we found was a slight increase in the occurrence of disc flaws. The disc software could redirect access to an alternative record, so this was not much of a problem operationally. These ‘redirections’ were recorded and passed to us for examination. We had offline test software that checked every record and provided a map of the suspect ones. We already had a similar map on hand, produced when the disc was first installed. Comparing the two, we discovered that the new flaws were about a track away from the originals. So what had caused this? During maintenance the heads were moved away from the surface of the discs for cleaning (see photo above), after their position had been marked. The heads were subsequently returned to this mark. Once the heads were back in place, the clamping bolts were tightened with a torque wrench. It was later discovered that every time the bolts were tightened there was a slight shift in position. The heads were always returned to the mark made just before that session’s retraction, not to their position at installation, so each shift became the new starting point. At each maintenance session the heads therefore crept further out of alignment, which explained why the flaws appeared to have moved. Once this was understood, the answer was always to return to an absolute reference point. We had the BMC workshop make up a jig that we used for reference at each maintenance period. Shifting the heads back to their original position was a little difficult, but once that was done the flaw map was back to what it had been at installation. We then had no more error reports. There was one difficult period.
Every so often BMC carried out a stock take, which meant adding another shift at night and at the weekend. Because the system was working reliably, it was agreed that one of us would be on telephone standby during these additional shifts. That was fine, but my opposite number went sick. Another field engineer could have been called in, but he would have needed time to come to terms with a new installation. Instead, we proposed to the customer that I would come in for about 10 hours every day and be on telephone standby at home, which was about 40 minutes away. The only urgent problem I anticipated was that the tape deck heads would need to be cleaned while I was not on site. I therefore trained a couple of the operators to do this, which they were quite capable of doing. However, this meant that ICT was not fulfilling its contract, and it broke the rule that only field engineers were allowed to work inside the covers. In the event all the BMC stock-taking work went through within the time allotted. Neither the customer nor ICT field engineering management said anything, although both organisations were fully aware of what had happened. Everyone was happy, especially me: I earned a massive number of extra hours of overtime, most of it ‘out of hours’ on double pay.

The daily maintenance was carried out every morning before the operators took over the machine. The main task was to clean the disc surfaces with a long paddle that had a cloth pad on the end, soaked in a liquid (I think isopropyl alcohol). With the rear covers off, the disc was run at a slow speed and all the surfaces were wiped clean. The heads were held away from the surface until the discs were up to speed. This was considered to be a dangerous operation, so an electrician from the site was supposed to stand by while it was carried out. This happened most mornings, but sometimes there was no second person around. I wonder what H&S would make of this today?
Less often the heads had to be cleaned. To do this, the whole head assembly was swung out, as can be seen in the right-hand group in the photo above. The drive in the image is fully populated, but the BMC disc drive was not and had fewer discs. However, all models had a clock track disc.
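The head-positioning scheme described earlier in this article amounts to a binary encoding of the track number. Eight actuators with throws of 1, 2, 4, ..., 128 units act in series, so eight valves can select any of 256 tracks. The Python below is a minimal sketch that assumes each valve simply extends or retracts one actuator and that the total head displacement is the sum of the extended throws. It does not model valve timing or hydraulics, and the function names are hypothetical.

```python
from typing import List

# Throws of the eight series-connected actuators, in track units (from the article)
ACTUATOR_THROWS = [1, 2, 4, 8, 16, 32, 64, 128]


def valve_settings(track: int) -> List[bool]:
    """Return which actuators must be extended (True) to reach a track."""
    if not 0 <= track <= 255:
        raise ValueError("track must be in the range 0-255")
    # Each actuator corresponds to one bit of the track number
    return [bool(track & throw) for throw in ACTUATOR_THROWS]


def head_position(settings: List[bool]) -> int:
    """Actuators in series: the head moves by the sum of the extended throws."""
    return sum(throw for throw, extended in zip(ACTUATOR_THROWS, settings) if extended)


def valves_changed(from_track: int, to_track: int) -> int:
    """Number of valves that must switch to move between two tracks."""
    return bin(from_track ^ to_track).count("1")


if __name__ == "__main__":
    print(valve_settings(5))
    print(head_position(valve_settings(200)))
    print(valves_changed(127, 128))
```

In this model a seek from track 127 to track 128 switches all eight valves, while a move from track 128 to track 129 switches only one. The distance moved and the number of valve operations are therefore unrelated.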

CCS at the Movies – December 2020

Dan Hayton

2020 was different! Having been “volunteered” to present the December film show, I found the usual thoughts cropping up: theme, sources and the audience’s attention span.

I already had a theme nagging at the back of my mind from the 2020 lecture programme. Should we look at LEO computers through their presence on film? How did they see themselves, how did others see them at the time, and how do they look from the perspective of today? I could save some effort by taking up Peter Byford’s suggestion and using our links with the LEO Society. The Computer History Archive was one source that provided copies. YouTube hosts some further clips, but we had seen a lot of the material in various presentations over the years. Would the audience be prepared to sit through them again? Were they of suitable length? Could one put a new spin on the details to drum up interest? There were some contemporary clips from the 1950s and 60s, both professional and amateur, together with interviews with programmers. Together they would make a mixed bag of subjects.

2020 has turned out to be technically interesting. It has left the CCS and many similar societies relying on our own industry for continuing contact and “entertainment”. Lecture programmes using PowerPoint or an equivalent have been successful over Zoom, Teams and Webex, to name the ones that have come my way. Some have included video clips or swapped to the modern equivalent of the epidiascope and back to the laptop camera. We have made use of the web by pulling down clips for inclusion in previous presentations at BCS or the Science Museum. But how would domestic bandwidth cope with downloading and uploading video at the same time? What would be the least risky way of proceeding? In any case I’m a user of LibreOffice (the Government likes it for returns using its spreadsheets), so I would be using that software to create and present the show. To avoid too much buffering, I decided to download the clips to my laptop and use LibreOffice’s facility for inserting videos into a PowerPoint presentation. It also manages links to websites reasonably well (don’t click more than once).
Those features enabled me to create a straightforward presentation that would not lead to more than the simplest finger trouble. This route produced a single 674 MB file containing the whole show. Reading it from the local hard drive reduced any chance of performance problems at that stage. One more problem loomed on the horizon. Openreach had not quite arrived in my village and was still up telegraph poles and under the street as the day approached. Anyway, my broadband had been consistently iffy even for committee meetings, so I arranged to borrow my daughter’s flat and broadband for the afternoon of 17th December. Fortunately, she and her partner were both out at work and the cat stayed asleep, as usual. There was the usual hiccough when sharing the screen. Somehow there were two copies of the presentation, which conflicted and froze until they were disentangled. Unfortunately, in view of the source of the material, it wasn’t permissible to record the event, so here is a list of the clips. Searching YouTube using the titles is easier than typing the URLs. The list includes clips both used and unused in the presentation.

Note: CfCH = Centre for Computing History

The event drew an audience of 159 from far-flung places. Afterwards I thought of things I had left unsaid, but I believe it went OK.

Book Review: The IT Girl: Ann Moffatt

Frank Land

Many readers of Resurrection must have known of, or crossed paths with, Ann Moffatt during their own careers in computing. They may have known her under her birth name of Ann Hill or, after her first marriage to Alan Leach, as Ann Leach. Her career began at the Met Office, working with early Ferranti computers, and continued at Kodak in the UK, on its first business computing. Then, after she married and had children, she joined Steve Shirley’s Freelance Programmers. There she quickly made her mark, rising to head the systems wing of the company and finishing as head of a team of 250 programmers. Interested in advances in computing technology, she joined Iann Barron’s Modular One company. She then left to join BP’s software and consultancy offshoot Scicon, before being head-hunted by the major Australian software house CSA. This involved a move to Australia to take a senior role in implementing multimillion-dollar systems at AMP, at that time a major force in the Australian insurance industry and Australia’s largest company. She went on to hold more senior positions in Australia, including working for the newly unified Australian Stock Exchange. She then formed her own very successful company, Technology Solutions, reverting to some of the notions she had picked up in the UK with Freelance Programmers.

Alongside her activities as an employee, she was active in the British Computer Society. She headed the Advanced Programming Group and was chosen as a member of the BCS Council, the first woman to attain that position. Equally, in Australia she took an active role in the ACS and worked closely with Australian universities and with Cyril Brookes at the University of New South Wales. Her reputation led to international acclaim and many invitations as keynote speaker at conferences world-wide. She also made major contributions to the International Standards Organisation’s seven-layer communications protocol.
She was awarded an honorary PhD. She was only the second individual, and the first woman, to be inducted into the Australian Computing Hall of Fame and, in 2015, the Pearcey Hall of Fame. After retiring to Queensland, she continued to promote women’s role in technology actively and to educate the local community on the benefits technology can provide.

Her autobiography is a full-life account covering her domestic and professional life. The longest passages describe episodes of her professional life in information technology. These are intermingled with aspects of her domestic life, including marriage, motherhood and even her sex life, and with descriptions of her travels on business and vacation. In many ways the story is that of her life-long struggle against a world much of which is dysfunctional. Ann emphasises the widely prevalent misogyny she encountered in much of her professional life. Far too often it questioned her ability, despite her success in seeing systems and projects through to successful conclusions when in other hands they had been failing badly. But equally she came up against social and health dysfunction. This included marriage to an ex-soldier who, despite surface charisma, turned out to be a pathological madman. Her well-being was also interrupted by several serious accidents, including a fractured skull early in her career. One slightly surprising omission from what is a no-holds-barred whole-life memoir is any account of political interests or activities.

The book is written in two modes. Her earlier life, from infancy to mid-career, is written almost as a virtual reality account, which puts the reader into the episode she is reporting and includes supposed dialogue. Not all of that is convincing to this reader. Her later life is narrated as more straightforward reporting.
Her account of the systems she worked with is often enthralling. She describes disentangling the work of her dysfunctional predecessor and working in dysfunctional organisations. This account reminds us of the many problems involved in designing and implementing successful technological change in the imperfect world in which we live.

My own career with IT and LEO started a decade earlier but sometimes intersected with Ann’s, and as a reader I have some grumbles. Despite her clear interest in the history of IT, nowhere in the book does she mention the role played by LEO, which helped to define some of the same standards she herself introduced to the organisations she worked for. The Met Office pioneered meteorological computing on LEO I before switching to Ferranti. Her employer Kodak in the UK ran its first business system, its payroll, on a LEO II service bureau. In Australia the first insurance company to install a large mainframe computer was Colonial Mutual, with a LEO III, before AMP took the plunge. And her father was employed by J. Lyons & Co until his retirement, having risen to be a senior manager responsible for all building activities.

A more serious concern is Ann’s naming and shaming of work colleagues she describes as incompetent, frauds, drunks or misogynists. Whilst I don’t question the justice of the shaming, I wonder if it is appropriate in an autobiography to name individuals who cannot defend themselves against the charges. But this does not diminish the importance of Ann’s life story. She is one of the heroes of the computer age. Her story demonstrates the importance of understanding in detail both the business that the technology has to serve and the capability of the technology to provide that service. It also shows the need to be constantly aware of the rapidly changing landscape of both business and technology. And Ann succeeds in her main aim of showing that gender should not restrict the roles played by women in their career choices and progression.

Contact details

Readers wishing to contact the Editor may do so by email to

Members who move house or change email address should go to

Queries about all other CCS matters should be addressed to the Secretary, Rachel Burnett, at rb@burnett.uk.net, or by post to 80 Broom Park, Teddington, TW11 9RR.

Obituary: George Davis

Roger Johnson

It is with regret that we have to report the death of George Davis, a founding member of the CCS committee, on 28th December 2020, just short of his 97th birthday. He was a very congenial man to work with and will be fondly remembered by colleagues who knew him. His deafness was an obvious burden for him but was borne with characteristic good humour. He started working on computers in September 1950, after studying mathematics at Cambridge and working on radar during the war. He joined English Electric as part of the team at the National Physical Laboratory developing the Pilot ACE. He helped with the software and hardware development, and then set up and led a dedicated maintenance team. This team eventually showed that Pilot ACE could run very reliably if systematic procedures were applied. George later worked on the design and development of the DEUCE and KDF9 computers. George was active in the BCS for many years. He was a member of the Croydon branch committee and also served on the BCS Council and on its Branches Board. George served as CCS Meetings Secretary from 1995 to 2003. After that he ceased to be a member of the committee but continued to attend its meetings for some years. Such was the esteem in which he was held that nobody seemed to mind. That he was a man of considerable charm and good humour probably helped.

CCS Website Information

The Society has its own website, located at www.computerconservationsociety.org. It contains news items, details of forthcoming events, and electronic copies of all past issues of Resurrection in both HTML and PDF formats, which can be downloaded for printing. Emulators for historic machines, together with associated software and related documents, can be downloaded from www.computerconservationsociety.org/software/software-index.htm.

Obituary: Arthur Rowles

Doron Swade

It is with great sadness that I report the passing of Arthur Rowles. Arthur led the restoration of the Science Museum’s Elliott-NRDC 401 computer from 2004 to 2009, as part of the Computer Conservation Society’s restoration activities. He had a career in the computer industry that started at Marconi in 1953 and took him on to BTM, ICT and then ICL. In 1974 Arthur left ICL to join the Science Museum as head of a small unit, later called AVEC (Audio-visual, Electronic, and Computer-based Displays), that designed, built and maintained working exhibits. AVEC was responsible for the working exhibits in the new computing gallery, ‘Computing Then and Now’, which opened in December 1975. Exhibits included visitor-interactive logic gates and an LED animated panel display illustrating hardware priority interrupts for servicing peripherals. Arthur took special pride in this exhibit, which he had devised. The gallery also featured one of the first live public terminals, slaved to a mainframe in the adjacent Imperial College. Arthur’s earlier connection with the Museum presaged his staff appointment: in the early 1960s he gave weekend Science Museum lectures on computing to older secondary school students.

Arthur developed a passion for computer music. He programmed a Rockwell AIM-65 computer to capture and print all the nuances of a musical score in traditional notation. The daily commute from the Isle of Dogs, where Arthur lived, to the Science Museum became burdensome, especially as the time could be better spent programming. He decided that he would retire, so he went to see the Director of the Museum, Dame Margaret Weston, and told her that he wished to leave. To plan an orderly transition, Dame Margaret asked Arthur ‘when are you thinking of leaving?’ Arthur replied, ‘after lunch’. He stayed an additional year and retired in 1983. Arthur was a hands-on problem solver who relished the challenges of design and fault-finding. He travelled down to London every fortnight for the 401 restoration. The 401 work was his farewell to the Science Museum. Arthur was 94 and had tested positive for Covid-19. He passed away on 29 December 2020. He was reading New Scientist to the last.

50 Years Ago

Dik Leatherdale

Because of lockdown, our usual summary of the events of half a century ago is not available this time. But it would perhaps be inappropriate not to recall the introduction of decimal currency to the UK and Ireland, which took place on 15th February 1971. How did that go? And how did it compare with the year 2000 crisis of just over 20 years ago? The first thing to say is that the problem was much, much smaller. Computers were not then as pervasive in commerce as they now are. That said, the resources to deal with the problem were in very short supply (trained programmers were like gold dust), so the balance of problem and resource was comparable. One aspect of the Y2K crisis was the search for a “magic bullet”, a software fix that would allow existing programs to continue working with little or no change. In the IBM mainframe world, it was claimed that the magic bullet did exist, using spare bits in dates held in character form. At least one Tandem installation simply wound the CPU clock back by a few years and applied an offset at application level. But that was unusual.

In 1971, the London Atlas ran a bureau service for external organisations. This included some classic data processing batch applications written in a COBOL-like language, Atlas Commercial Language (ACL). Like many contemporary COBOLs, it supported pounds/shillings/pence fields, in which money amounts were held in binary old pence. All the calculations were done in old pence, but input from cards and output to printers required conversion from and to £sd. The obvious solution was to amend all the programs to replace the £sd fields with decimal fields. One-off programs would then have been needed to process all the carried-forward data on magnetic tape, dividing all the money amounts by 2.4 (there were 240 old pence but only 100 new pence to the pound). But that would have needed a great deal of effort, and Atlas was planned for withdrawal in 1972 anyway.
My colleague Roger Trendell, who then had responsibility for the ACL system, came up with a bright idea: change the code generated by the compiler so that it treated £sd fields like decimal fields. But that only solved part of the problem; those data conversion programs still had to be written. But Roger then went further. Why not change things so that pounds and new pence fields on the cards were converted into binary old pence, continue to do all calculations in old pence, and make the contrary adjustment on printing? Despite the inevitable possibility of rounding error, all the customers accepted this, being unwilling to stump up the cost of proper conversion. So, problem solved! Magic Bullet? You bet!
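To make the arithmetic of Roger's scheme concrete, here is a minimal Python sketch. It is not the ACL compiler's actual code, which is not described here; the function names are hypothetical, and the sketch assumes amounts are held as whole old pence, cards carry whole new pence, and conversions round half up. Since £1 = 240d = 100p, one new penny is 2.4 (that is, 12/5) old pence, so decimal input is multiplied by 12/5 and printed output by 5/12.

```python
from __future__ import annotations

# Hypothetical sketch of the Atlas ACL workaround: decimal input is turned
# into whole old pence, all arithmetic stays in old pence, and the result is
# turned back into decimal for printing.
# Assumptions (not stated in the article): whole old pence internally,
# whole new pence on cards (the half new penny is ignored), round half up.
# £1 = 100p = 240d, so 1p = 12/5 d = 2.4d.


def card_to_old_pence(pounds: int, new_pence: int) -> int:
    """Convert a decimal amount read from a card into whole old pence."""
    total_new_pence = pounds * 100 + new_pence
    # old pence = new pence * 12/5, rounded half up in integer arithmetic
    return (total_new_pence * 24 + 5) // 10


def old_pence_to_print(old_pence: int) -> tuple[int, int]:
    """Convert whole old pence back to (pounds, new pence) for printing."""
    # new pence = old pence * 5/12, rounded half up in integer arithmetic
    total_new_pence = (old_pence * 10 + 12) // 24
    return divmod(total_new_pence, 100)


# A single amount survives the round trip unchanged.
print(old_pence_to_print(card_to_old_pence(1, 50)))  # (1, 50)

# Ten items of 1p: each is stored as 2d (2.4d rounded), so the stored
# total is 20d, which prints as 8p instead of the true 10p.
total = sum(card_to_old_pence(0, 1) for _ in range(10))
print(old_pence_to_print(total))  # (0, 8)
```

Under these assumptions each individual amount round-trips correctly, because rounding a whole number of new pence to whole old pence is off by at most 0.4d, well under half a new penny. Errors only appear when rounded values accumulate, as in the ten-item total: a small-scale illustration of the rounding error the customers were willing to accept.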

Forthcoming Events

Society meetings in London and Manchester have been suspended in view of the COVID-19 pandemic and are unlikely to be resumed during the current season. However, we have made arrangements to give lectures over the Internet using ZOOM and, at the time of writing, the first of them has been delivered successfully. Using ZOOM has the advantage that many members who might not be able to attend in person can join in, and the indications are that attendance exceeds the normal number by a considerable margin. Moreover, it is possible for us to invite speakers from faraway places to tell us about matters which, in normal times, would not be accessible to us.

Seminar Programme

Seminars normally take place at 14:30, but this may vary from time to time. It is essential to use the BCS event booking service to reserve a place at CCS ZOOM seminars so that connection details can be sent out before the meeting takes place. Web links can be found at www.computerconservationsociety.org/lecture.htm. For queries about meetings please contact Roger Johnson at r.johnson@bcs.org.uk. Details are subject to change. Members wishing to attend any meeting are advised to check the events page on the Society website.

Museums

Do check for Covid-related restrictions on the individual museum websites.

SIM : Demonstrations of the replica Small-Scale Experimental Machine at the Science and Industry Museum in Manchester are run every Tuesday, Wednesday, Thursday and Sunday between 12:00 and 14:00. Admission is free. See www.scienceandindustrymuseum.org.uk for more details.

Bletchley Park : daily. Exhibition of wartime code-breaking equipment and procedures, plus tours of the wartime buildings. Go to www.bletchleypark.org.uk to check details of times, admission charges and special events.

The National Museum of Computing : open Tuesday-Sunday 10.30-17.00. Situated on the Bletchley Park campus, TNMoC covers the development of computing from the “rebuilt” Turing Bombe and Colossus codebreaking machines via the Harwell Dekatron (the world’s oldest working computer) to the present day, from ICL mainframes to hand-held computers. Please note that TNMoC is independent of Bletchley Park Trust and there is a separate admission charge. Visitors do not need to visit Bletchley Park Trust to visit TNMoC. See www.tnmoc.org for more details.

Science Museum : There is an excellent display of computing and mathematics machines on the second floor. The Information Age gallery explores “Six Networks which Changed the World” and includes a CDC 6600 computer and its Russian equivalent, the BESM-6, as well as Pilot ACE, arguably the world’s third oldest surviving computer. The Mathematics Gallery has the Elliott 401 and the Julius Totalisator, both of which were the subjects of CCS projects in years past, and much else besides. Other galleries include displays of ICT card-sorters and Cray supercomputers. Admission is free. See www.sciencemuseum.org.uk for more details.

Other Museums : At www.computerconservationsociety.org/museums.htm can be found brief descriptions of various UK computing museums which may be of interest to members.

North West Group contact details


Committee of the Society


Computer Conservation Society

Aims and Objectives

The Computer Conservation Society (CCS) is a co-operative venture between BCS, The Chartered Institute for IT; the Science Museum of London; and the Museum of Science and Industry (MSI) in Manchester. The CCS was constituted in September 1989 as a Specialist Group of the British Computer Society (BCS). It is thus covered by the Royal Charter and charitable status of BCS. The objects of the Computer Conservation Society (“Society”) are:

Membership is open to anyone interested in computer conservation and the history of computing. The CCS is funded and supported by a grant from BCS and donations. Some charges may be made for publications and attendance at seminars and conferences. There are a number of active Projects on specific computer restorations and early computer technologies and software. Younger people are especially encouraged to take part in order to achieve skills transfer. The CCS also enjoys a close relationship with the National Museum of Computing.
