The Differential Analyser

(courtesy MOSI)


Computer

RESURRECTION

The Bulletin of the Computer Conservation Society

ISSN 0958-7403

Number 54

Spring 2011


ICT 1301 - Rod Brown

The project is closed until the spring. With over 4,000 PCBs full of early germanium transistors this is a wise precaution. We hope to restart work by the end of March.

Thought is being given to modifying the status of the project to allow its potential move to that of a charitable trust and its further ability to function as a fully interactive experience which we would like to offer to students of both computing and digital archaeology.

The 2011 public open day is now planned to be Sunday the 17th July.

Some initial planning is also taking place to change the 2012 public open day into an event which celebrates the machine’s 50th birthday.

News about the project can be found on the dedicated project website www.ict1301.co.uk.

Elliott 803 - Peter Onion

The Calcomp Plotter is proving to be a popular attraction with visitors. Some adjustments have been made as it was found that at certain drum stepping speeds the stepper motor would miss some steps. A small adjustment in the motor position has reduced the backlash in the gears which seems to have cured the problem (and has noticeably reduced the noise made by the drum).

Work is progressing with the authentic technology interface (using minilogs) for the plotter.

An intermittent fault has developed with the paper tape punch, but it was identified as a problem with the drive electronics rather than with the punch mechanism. Fault finding was achieved by getting one of our spare punches going. The spare was in very good condition anyway and only required some light oiling to get it functioning. However a second spare seems to have a burnt out motor.

Kevin Brunt has produced a SuDoku solving programme in Elliott Algol-60. However it lost its first “head to head” with a real person so some optimisation is being investigated!

Pegasus - Len Hewitt

We were allowed access to the Pegasus in August 2010, and repairs were 90% complete by the end of October 2010. The work will recommence in early April, at which time we hope to be allowed to recruit two more project team members. When the repairs are complete, the consultant whom the Museum has employed will need to certify that the Pegasus system is safe to run again. However I am sure the machine will run successfully in the near future.

Discussions are ongoing with the Museum to determine the various roles of the Pegasus Working Party Volunteers.

My thanks to Peter Holland, Chris Burton, and especially to Rod Brown for all the help they have given. Also a special mention should be made of Ian Miles, the conservator for Pegasus, who has given invaluable assistance.

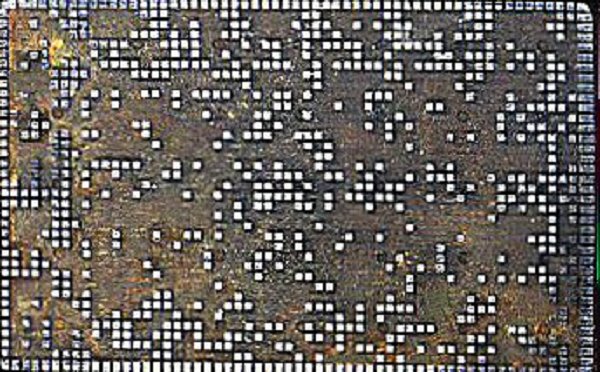

The Hartree Differential Analyser - Charles Lindsey

The Differential Analyser

The output table is now in working order, except that we have no pen holder yet. Generally speaking, each time we start work on a new component, we find more ball bearings with congealed grease and various misalignments, all of which take time to fix. Most recently, we identified that the short shafts used in the bus boxes come in two flavours - those that fit and those that don’t (apparently manufactured incorrectly). The bad ones are being banished to the bus boxes at the ends.

The art of adjusting the torque amplifiers is still not fully understood. At the last attempt a cord on a first stage amplifier broke, which I hope to replace with some ancient Barbour’s linen button thread. It is suspected that stretching of the frayed thread may have been the cause of our difficulties.

EDSAC Replica - David Hartley

A promise of funding has been received and an outline plan including cost estimates and timescales has been drawn up. Work is proceeding to gather archive information. An interim Management Board has been set up consisting of Chris Burton, Martin Campbell-Kelly, David Hartley and Kevin Murrell. Progress has been made under the following headings.

Sponsorship

Dr Hermann Hauser of Amadeus Capital Partners is obtaining commitments from potential sponsors, and a marketing brochure has been prepared. Dr Hauser himself is likely to be a major contributor. The total sum being sought is around £250,000.

Management

Solicitors have been briefed and are preparing documents to establish a charity, in the form of a company limited by guarantee, to build and own the replica. The trustees are likely to be representatives of Amadeus Capital Partners, the University of Cambridge and the British Computer Society.

Publicity

A press release was prepared and was launched in the week of 10th January. Stephen Fleming is acting as the project’s publicity consultant.

Research

Much archive material on the EDSAC has been gathered and the (very few) artefacts in existence have been located for detailed examination. Chris Burton has made an extensive inventory of the parts required and is investigating potential sources. His colleague Bill Purvis has made significant progress with a gate-level simulator to verify the detailed logic design.

Location

Arrangements have been made for the replica construction to be carried out at The National Museum of Computing (TNMoC). The work will be carried out in public view.

Project Manager

A search for a Project Manager has been started.

ICL 2966 - Delwyn Holroyd

We have recently experienced an unusually high number of new intermittent faults. This is thought to be related to the exceptionally cold weather. The load DCU is now operational again and all the intermittent OCP problems bar one have been cleared up. All the boards implicated in the remaining issue have been exchanged, leaving a backplane or inter-backplane cabling fault as the most likely cause. Once this issue has been identified we will be back where we were two months ago!

Meanwhile the 7501 terminal restoration has made great progress. We are now able to teleload its operational control program from a laptop running a George 3 emulator, via a USB to synchronous serial interface board we have developed for the purpose. The filestore containing the necessary control program for the terminal came from the George 3 service operated on an ICL 1902T at Manchester Grammar School until 1986. It’s been some 24 years since this program last saw active service, and although we don’t know for sure, probably a similar amount of time since the terminal itself was last operational. There is a picture on the TNMoC website showing the George 3 login prompt on the terminal.

Although we have still to hear directly from senior management at Elsila in St. Petersburg, I have heard from my contact that the museum’s proposal was discussed in his presence and so we remain hopeful that a dialogue can be established.

We recently had a collection of System 25 spares and technical information offered to us, including a terminal which is great news for the System 25 project. Our S25 uses the same EDS80 disk drives as the 2966, although they are known as EDS65s on the S25 due to the capacity reduction imposed by the formatting used. The items offered include an EDS80 Customer Engineers’ pack, alignment tool and card, which will enable us to replace and re-align heads on our drives. This ability is essential to keep them running in the medium to long term.



There is to be a celebration of the life and work of Maurice Wilkes at Cambridge on 27th June. The main event will take the form of a series of short lectures covering Sir Maurice’s major achievements. Amongst the speakers will be David Hartley, Martin Campbell-Kelly and David Barron. A buffet lunch and a drinks reception are also planned.

The event is open to anybody with an interest. Registration is at www.cl.cam.ac.uk/misc/obituaries/wilkes/event/.

101010101

The Manchester Baby in its new location

MOSI - The Museum of Science and Industry in Manchester - has completed the refurbishment of its main building, now known as the Great Western Warehouse in accordance with its former use. The building now houses Revolution Manchester, a permanent exhibition featuring six major advances in science and technology which the city has originated over the years. Computer technology is one of these themes and, of course, the Manchester Baby (SSEM) replica is its centrepiece.

www.msimanchester.org.uk/whats-on/revolution-manchester is a good place to start.

101010101

In case you missed it, 2011 marks 100 years since the founding of IBM. The website at http://www.ibm.com/ibm100/us/en/ yields much interesting historic material, most especially a video in which Fred Brooks talks about the development of System 360.

101010101

A collection of LEO artefacts has been donated to the National Museum of Computing by the Leo Society. Thanks to Peter Byford and Pete Chilvers for arranging this.

101010101

In Resurrection 51 we published an appeal from Brian Spoor for information concerning Priority Mode working in the ICL 1900 Series. Brian reports as follows -

“Some of the replies received as a result of your kind publication of my article on the 1904S emulation project were quite useful, although in some cases contradictory, in that Priority Mode just didn’t exist.

“We are slowly pushing forwards towards a release, but have been taking some time to re-visit areas of the project, with a greater understanding of how certain pieces of the hardware worked.

“We have been playing with bootstraps over Christmas/New Year (re-keying from various old documents), and we can now boot from cards or paper tape (in addition to magnetic tape and disc). What the programmers achieved in so few instructions is quite remarkable and gives us a real insight into how the hardware works. We assume that actually booting a West Gorton processor from cards/paper tape was a rare event, possibly just for very basic testing (or a real nasty problem).

“Another question that has arisen is: when did E6RM replace E6BM? We are guessing around 1973/74. We are also guessing that this is when the Priority Member(5) was dropped, probably never carried forward into E6RM.

“We have succeeded (with a little assistance) in installing a patch to E6RM to clear a bug that we discovered - where more than one 1962/1963/1964 slow drum system was attached to the system, any file allocation to the second system was always zero length (and the drum directory could be corrupted). From this, we assume that E6RM was never used/tested with multiple slow drums. There was also a problem generating EWG3 with multiple slow drums which we have also fixed.”

101010101

Reader David Harverson recalls working for STC around 1962 on a computer-based store and forward telex system called STRAD. The computer took so much power that the British Railways installation in Derby brought down the local railway signals, causing considerable chaos. He also worked for BOAC on a seat reservation system, a predecessor to Boadicea. Readers recalling these systems might like to contact d.harverson@ntlworld.com.

101010101

We regret to report the passing of Sir Christophor Laidlaw. Appointed as ICL chairman during the dark days of 1982, he retired in 1984 leaving behind a transformed company. An obituary was published in The Guardian at www.guardian.co.uk/business/2011/jan/12/sir-christophor-laidlaw-obituary.

So too Ken Olsen, co-founder of DEC and its chief executive all through DEC’s glory days until 1992. His obituary in The New York Times at www.nytimes.com/2011/02/08/technology/business-computing/08olsen.html?_r=2&src=twrhp is recommended.

101010101

Alan Thomson reports that the Institute of Radiology has commissioned a book on Godfrey Hounsfield, who was one of the EMI pioneers. Alan has been contacted by one of the authors - Richard Waltham. This contact follows on from the CCS seminar on EMI computers that Alan helped organise in February 2009.

Godfrey Hounsfield is best known as the inventor of the first brain scanner - a device which revolutionised medical imaging and dramatically improved treatments and health outcomes. He developed that at EMI in Hayes in the early 1970s. Many years earlier he was one of the key people involved in the design of the early EMI computers in the late 50’s and early 60’s - and the book will include a chapter on his work on computers (the early BMC machine, the EMIDEC 1100, large scale magnetic film memory, etc). He was very much involved in the design and development of the EMIDEC 1100, and the innovative transistor/core logic it used - it was one of the first fully transistorised computers.

101010101

Simon Lavington’s book Moving Targets: Elliott-Automation and the Dawn of the Computer Age in Britain, 1947 - 67 is now available at www.springer.com/computer/general+issues/book/978-1-84882-932-9 and in all good bookshops (as they say). Simon’s April seminar at the Science Museum will provide a good introduction to the subject and order forms will be available providing a small discount.

101010101

In Resurrection 53 we reported the discovery of a third Ferranti-Packard 6000 computer installed in the UK. Now Peter Byford of the Leo Society has made contact to recall that in 1965 he worked on a 1904 without a 1900 Standard Interface at the AA in Holloway, London. On peeling back the ICT 1904 labels the legend “FP 6000” was revealed.

Peter’s story is confirmed by Richard Dean who was concerned with this same installation from the ICT end. Richard reports that the AA bought the machine for a cross-channel ferry booking system and ordered a large fixed disc to go with it. There seems to have been some dispute between the AA and ICT concerning the lack of any software to support the fixed disc. Plus ça change!

The problem seems to have been resolved when ICT pointed out that the application had been cancelled and the fixed disc was no longer required anyway.

Richard believes that the AA installation was far from unique and that as many as 10 FP 6000s may have been delivered in false clothing.

101010101

Evolving order codes -- or how did we get to i386?

David Holdsworth

I am wishing to explore the possibility of a CCS meeting in which we present several CPU architectures with a view to understanding how we got to where we are (i.e. i386 ubiquitous on the desk top), and what good ideas we might have lost along the way. I am looking for people with strong views and people who are familiar with various architectures, especially those of which I have no knowledge. Personally, I have (or did have in the past) good knowledge of KDF9, Z80, Signetics 2650, 6502/BBC micro, IBM370, ICL1900, PDP8, DEC10, Elliott 903 and lesser knowledge of SGI/MIPS, PDP11, VAX, M6800 and even a smattering of Itanium. Oh and I am not bad on i386 either. The initial idea would be to establish e-mail contact among those who might be interested in contributing to such a meeting, and then to explore its possible structure. My current perspective is that this story is at least as dismal as the VHS/Betamax saga. Areas of my ignorance that I would particularly like to cover are Atlas and ARM, and stuff pre-1960s. If you would like to register an interest, please email ecldh@leeds.ac.uk.


Or - realities that textbooks and teachers don’t tell you. This project ventured into unfamiliar territory, in more than one sense. It was not an unmitigated success, but - “lessons will have been learned” - possibly.

Taking the air in Oberhausen

By 1969, Oberhausen had been a typical Ruhr coal and iron town for over 200 years. Prominent in that history had been HOAG (or, if preferred, Huettenwerk Oberhausen Aktien Gesellschaft). HOAG was then becoming part of the Thyssen group. Its huge domain accommodated blast furnaces, a bloom and billet mill (known as the “First Heat”), and four “Second Heat” plants, producing heavy and medium plate and wire products. In the First Heat, ingots of up to 30 tons were repeatedly rolled, flipped, shunted, weighed, measured, sheared, abraded, and transformed into new, glowing shapes moving ever faster along the rollers of its long “street”.

HOAG had leased a Univac 1108 MP (multiprocessor) system with EXEC8 from Leasco. Its key task was to run real-time production optimisation programs, reflecting processing data emerging from the plants. The Second Heat plants were in effect the First Heat’s customers, and they in turn met the needs of HOAG’s actual external customers, including other Thyssen companies. The goal was to achieve the most efficient processing of steel pieces, assessing and prescribing the characteristics of pieces in process to match the priorities of orders and their specific requirements, while minimising scrap. This ambition implied message-handling computers in the plants, linked to the 1108, and passing data to and from processing stations within each plant.

A Univac 1108

As if that wasn’t enough the 1108 also had to take over administrative applications from two IBM 360-40s, some of them legacies from IBM 1400 Series, and to support additional such applications.

Lesson 1. The wording of contracts requires very careful scrutiny, particularly if they are subject to translation:- and must not simply be left to lawyers.

There were actually three Leasco/HOAG contracts. Apart from the lease, Leasco committed to sell a quantity of anticipated surplus 1108 time on behalf of HOAG. That agreement was bedevilled by an ambiguous definition of a machine-hour. Was it an hour of existence time or of processor time? This contentious ambiguity was compounded when time sale customers included some using the non-multiprogramming EXEC2.

There was also a contract with Leasco Software, a loss-leading first in mainland Europe, to supply about 20 programmers, to reinforce the customer’s own IT staff, for upwards of a year. This was partly motivated by a curious but prevalent stereotype among German programmers of the time: an aversion to adulterating their CVs with experience on anything other than IBM 360 systems.

Lesson 2. Before transferring staff to an unfamiliar foreign environment, research its legal and social circumstances and requirements, and prepare and budget to satisfy them:

A prudent prospective project manager will verify or demand this. I did not. Upwards of 20 Leasco staff passed through the project. Local administration for many months consisted only of a pre-installed and rarely supervised German project secretary. Short-term rented accommodation, even on a two-year lease, was hard to find, and beset with unfamiliar, quirky conditions. The project soon became de facto involved in the local estate agency, property management and furniture/appliance markets.

Each team member had to be navigated through a Kafkaesque labyrinth of German officialdom, laboriously learned and ultimately including, most formidably, the Finanzamt, for both corporate and personal tax delinquencies. Negotiating at a clear disadvantage with Finanzamt officials will dispel any doubt that the German sense of humour is no laughing matter.

Eventually, rather late, a Leasco project manual was compiled, covering everything from the basics of steel production to petty cash purchase procedures. Among other consequences, the lack of administrative support created considerable distraction from the team’s tasks at HOAG, which the latter’s management treated with commendable patience.

Lesson 3. Pick appropriate people for the project:

To HOAG’s managers, Leasco’s project staff build-up during the long winter of 1969/70 was disappointingly slow; they said so, often. Leasco had no German technical staff, and there were difficulties in finding people of requisite skills willing to relocate promptly for one to two years to the literally gritty Ruhr, particularly those with at least some German language capability.

The Leasco team, all male as it happened, two of them Ph.Ds, a minority married or with families, was drawn from the UK and the Netherlands. It included Univac EXEC 8 specialists, seasoned IBM and Univac application programmers, especially in COBOL, operations research programmers and others with minicomputer and assembler experience. Most were deployed to reinforce the systems, applications and OR departments of HOAG’s IT division, headed by Herr Direktor P, a veteran of Zuse, like some of his senior colleagues. Four of the Leasco team formed a new, embryonic IT department - messaging systems, which worked closely with HOAG’s telecommunications department. The first such system would be in the First Heat plant. For a time, two programmers from Univac were also assigned to HOAG’s systems department, where a major preoccupation was the tortuous definition and development of a complex Data Dictionary, worthy of Wittgenstein, to be used by all HOAG’s mainframe applications.

Lesson 4. Pick appropriate equipment for the task:

An IBM 1800

Communication within the plants would be telegraphic, with faster, synchronous links to the 1108MP. Clearly a controlling minicomputer was needed in each case, as yet unsourced. Considering the broad requirements and the hardware market at the time, the messaging team enthused over the newly launched DEC PDP11. Unfortunately, Thyssen had a policy to lease computers; DEC did not do leasing. Thyssen had another IT policy: any suitable system if it’s IBM, which did lease. The team glumly surveyed the available IBM offerings, old, current and imminent, and selected IBM 1800 as the least bad option. This was an obsolescent derivative of (in its day) the generally better known IBM 1130, viewed by some in retrospect as an early, desk-mounted personal computer for serious users. The 1800, a rack-mounted, 16-bit machine with up to 32k words of memory, had a multi-programming executive (MPX), and hardware extensions specifically for process monitoring and control.

Meanwhile, Herr T, manager of the First Heat plant, had decreed some specific requirements for his messaging system. It should maintain a database of all data pertaining to each piece in process, with some history, and be able to display on screens in his Zentrale plant office variably selected data related to each piece; for he had visited plants in Japan and observed such marvels. Unfortunately, these requirements exceeded the capabilities of the standard disc storage devices on the 1800, which also offered no video display terminals available as standard, and further lacked a telegraphic multiplexer. It seemed well-equipped for process control applications, for which, unfortunately, HOAG’s plants were not amenable.

It emerged that IBM 2311 disc subsystems and 2260 video terminals could be interfaced to the 1800, but lacked software drivers. With difficulty, sufficient detail of MPX was extracted to allow the team to develop drivers and simple file software. A compatible multiplexer was identified from a Swiss firm and again, driver software was planned, adding further to the implementation task.

A steel plant is, or was then, hot and dirty and manned by horny-handed operatives, usually heavily gloved. In-plant terminals would need to be robust. In the First Heat, there would be over 20 terminal positions along the street. For delivery of data, a Siemens receiver-transmitter terminal was selected, which punched and interpreted paper tape 5-hole code on continuous card stock. New (numeric) data arising at each terminal was input, following the received data on card, by a customised telegraphic device incorporating an appropriate number of large rotating thumb-wheels (also sourced from Switzerland).

Lesson 5. Program Development begins when a full functional specification has been agreed: - a key pillar of the Caminer canon of good practice.

First Heat manager Herr T talked fast, in a deliberately heavy north German Plattdeutsch. This complicated communication. Imagine a German, carefully school-educated in English, discussing detail with an excitable Geordie. Herr T would countenance no intrusion in his plant’s future by minions of Herr Direktor P’s insidious empire. For his part, the Herr Direktor insisted he had no territorial ambitions in the First Heat. Herr T, however, busily running his plant day to day, also declared he was in no position to predict in detail its information requirements in 12-18 months’ time, nor even with certainty the number of terminal positions along the First Heat “street”, or processing path. Yet this key task, he insisted, could not be delegated.

The messaging team, having pondered this impasse, decided that the First Heat application would, necessarily, be entirely table-driven, and hopefully its model would translate readily to later Second Heat systems. There would be a table of “terminals”, including the minicomputer itself, the Zentrale VDUs, and the 1108MP; a table, or dictionary, defining all data items possibly pertaining to steel pieces; a table of message types, specifying source and destination(s) and sequence of data items for each type. A terminal would receive only data items pertinent to that station’s operation. All these tables would be modifiable from the two 2260 terminals in Herr T’s Zentrale. Mindful of performance issues, the application, like the MPX extensions, would be written in Assembler. A German specification document was written on this basis. The Herr Direktor was relieved. Herr T was delighted; IT autonomy beckoned (until something went wrong). He even arranged, without warning, a triumphant evening presentation of “his” system to the company’s somewhat bewildered Aufsichtsrat, the HOAG workers’ supervisory board.
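For readers who have not met the idea, the table-driven approach described above can be sketched in a few lines of modern code. This is only an illustration in Python, not a reconstruction of the 1800 Assembler application: every terminal name, data item and message type below is a hypothetical stand-in. What it shows is that routing and message content live entirely in the tables, so that changing a table changes the behaviour without touching the program.

```python
from dataclasses import dataclass

# All names and values here are hypothetical illustrations,
# not details recorded from the HOAG system.

# Table of "terminals": plant stations, the office VDUs, the mainframe.
TERMINALS = {"T07", "ZENTRALE", "U1108", "MINI"}

# Dictionary of data items that may pertain to a steel piece.
DATA_ITEMS = {
    "piece_id": "identifier of the steel piece",
    "weight_kg": "weight recorded at a weighing station",
    "length_mm": "length recorded after shearing",
}


@dataclass(frozen=True)
class MessageType:
    source: str          # terminal that originates the message
    destinations: tuple  # terminals that receive it
    items: tuple         # data items carried, in sequence


# Table of message types.
MESSAGE_TYPES = {
    "WEIGHED": MessageType("T07", ("U1108", "ZENTRALE"), ("piece_id", "weight_kg")),
}


def check_tables():
    """Reject message types that refer to unknown terminals or data items."""
    for name, mtype in MESSAGE_TYPES.items():
        for terminal in (mtype.source, *mtype.destinations):
            if terminal not in TERMINALS:
                raise ValueError(f"{name}: unknown terminal {terminal}")
        for item in mtype.items:
            if item not in DATA_ITEMS:
                raise ValueError(f"{name}: unknown data item {item}")


def route(type_name, piece):
    """Build one (destination, payload) pair per destination.

    Only the items listed for the message type are sent, so each
    station sees just the data pertinent to it.
    """
    mtype = MESSAGE_TYPES[type_name]
    payload = [(item, piece[item]) for item in mtype.items]
    return [(dest, payload) for dest in mtype.destinations]


if __name__ == "__main__":
    check_tables()
    piece = {"piece_id": "P42", "weight_kg": 28500, "length_mm": 6100}
    for dest, payload in route("WEIGHED", piece):
        print(dest, payload)
```

Replacing the `WEIGHED` entry with a different destination list or item sequence alters what `route` sends, which is the sort of change the specification envisaged being made from the Zentrale terminals rather than by a programmer.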

Lesson 6. Manage project progress closely:

“We will have project meetings when they are needed,” declared the Herr Direktor, politely but firmly.

“But how will you know?”

Within the Leasco team, a revolving, honorary leader was appointed for each HOAG IT department. They attended brief, weekly, after-hours meetings in the Leasco office above a pharmacy in downtown Oberhausen before adjourning to the adjacent Freddy’s bar. This procedure provided a regular overview of the project’s overall status and Leasco’s part in it.

Scene: Lunch in HOAG’s handsome Werksgasthaus, or management restaurant, as guest of the Herr Direktor:

“So, Herr Knight, how is progress with the First Heat system?”

“I estimate two or three weeks late, but no matter.”

“??!!”

“Well, since the optimisation application on the Univac is a few months late, and without it the First Heat system can’t meaningfully function ....”

“???!!!!”

“You didn’t know that?”

Responses from the 1108 to messages from the plant system did not need to be split-second, but undue delay would be unfortunate; unless treated and moved, the steel piece in question would weld itself to the rollers, with cumulative consequences. The optimisation programs, developed in Fortran V, needed to be both clever and quick. Predictably, the mathematicians had concentrated on clever. In practice, the piece would have been moved on anyway, untreated, thus defeating the purpose of the plant system. There was anyway no budget or provision for resilience in the plant system.

Despite occasional revelations of this kind, HOAG’s IT Division never adopted regular project progress meetings, nor was there any discernible formal monitoring of project progress.

Lesson 7. Maximise continuity of personnel on the project:

German IT staff, generally, rarely changed employment or domicile. Leasco’s staff generally did. There were also boundaries to the Leasco Software/HOAG contract, and to the patience of some of those staff with life in Oberhausen. That patience was further strained by the looming nemesis of unpaid German progressive income tax. This, it belatedly emerged, applied to relocation living allowances as well as to salaries, and was compounded by the Konjunkturzuschlag, a temporary counter-inflationary tax supplement. In mid-1971, a diaspora of Leasco staff began, soon stretching from Austria to Australia, among other countries.

The messaging team decided to return to the UK and disperse. Anticipating this, two suitable HOAG programmers had been hired in the UK, with the unwitting help of Leasco Human Resources, and an 1800-experienced German IBM team had conveniently just finished another steel company’s project. Completion of the First Heat development was handed over to these newcomers in a two-day conference. Thus the messaging team never got their hands on an 1800 in anger. Was it a success? Did Second Heat systems follow? History does not record.

The gasholder

In later years, HOAG changed. There was apparently an electric steel installation in the ’80s, for example. It also shrank, like the steel industry across Western Europe. By 1990 the main site was gone, replaced by a vast expanse of level gravel intersected by deserted roads. Derelict and vandalised, only the elegant Werksgasthaus, the undistinguished Hauptverwaltung, or administration block, and the huge, 116-metre-high gas-holder remained. They are still there, refurbished, the gas-holder an unusual exhibition centre, as features of Centr.O, one of Europe’s largest retail and leisure centres. Other surprising attractions of this landlocked amenity and aspiring international tourist attraction include a marina on the Rhein-Herne Canal, and the König Pilsner arena, popular with rock groups of a certain age. There is also a Sea Life Centre, an aquarium, until recently home to a two-legged, six-armed marine mollusc with an implausible clairvoyance for outcomes in the 2010 Football World Cup. The late Paul the Octopus briefly brought global fame to Oberhausen, as HOAG, even with its innovative COR-TEN corrosion-resistant steel, never had.

Editor’s Note: Michael Knight was Leasco’s project manager at HOAG 1969-71. He can be contacted at michaelknight242@tiscali.co.uk


Following the two articles on survey analysis software in Resurrection 52 I received much correspondence from readers. As so often, the ensuing discussion wandered about and I was drawn into contemplating the humble IBM 029 card punch. And it struck me that in the late 1960s and ’70s, more or less wherever I went, there would be IBM 029 card punches; sometimes in ones and twos, sometimes in dozens. Even within ICL which operated a ban on the purchase of IBM equipment, the 029 could be found. It was perhaps, the most common piece of computer equipment of its era.

|

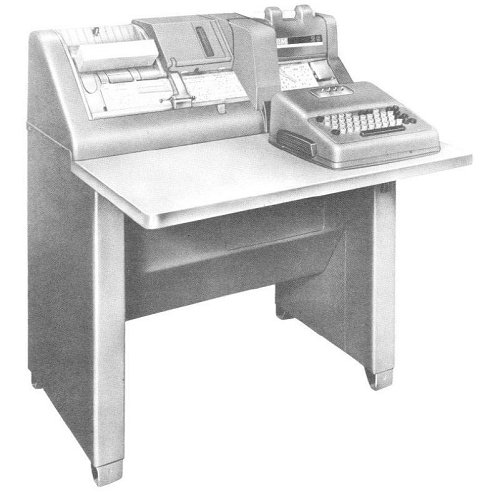

An IBM 026 card punch |

The origins of the IBM 029 go back to 1948 when the model 026 card punch was introduced. 1948 of course, was in the pre-computer age, at least as far as IBM is concerned, so we can see that the 026 was designed with the requirements of punched card office machinery in mind. The 026 had an abbreviated QWERTY keyboard supporting upper case alphabetics, numerics, space and &.◊-$*/,%#@ - 48 characters in all. It was able to punch cards, one column at a time, and the character would be simultaneously printed atop the column being punched. There was a cheaper model, the 024, which lacked the printing feature, and a verifier designated 056. Award-winning Art Deco styling was by the famous Raymond Loewy, better known for streamlined railway vehicles and even the 1950s Hillman Minx.

The circuitry of the 026 was a mixture of diodes, valves and relays. With 48V and 150V DC coursing through the machine, spillage of coffee was frowned upon with good reason.

|

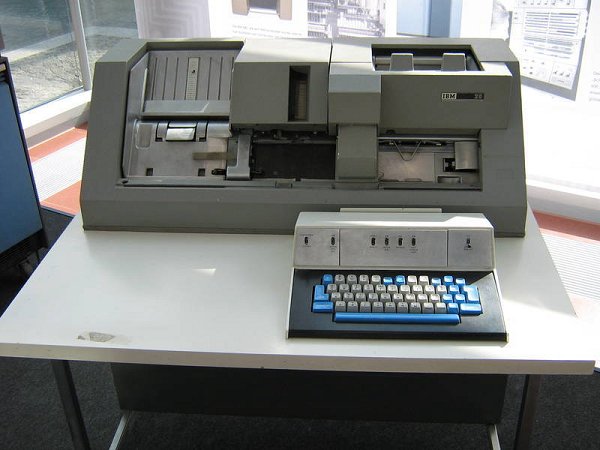

An IBM 029 card punch |

A repertoire of 48 characters might have been all very well in 1948 but by 1964, even after a bit of judicious reorganisation, time was running out for the 026. It was System 360 with its eight bit byte and generous EBCDIC character set which forced the introduction of something better. That something better was the 029 punch (and 059 verifier) which, appropriately, supported a 64 character set - not the full EBCDIC set, to be sure, but enough to cover most requirements.

Mechanically similar to its predecessor, the styling was completely revised. Gone were the rounded corners of Loewy’s now dated 024, replaced by a more “cubist”, modern look. Purchase price was reduced from $3,700 to $2,250. Internally the valves were gone and with them the 150V supply. Instead, reed relays and diodes were to be found. The reed relays were not a total success. The customer engineers’ manual warns sternly against rough handling, lest the glass in the relays should break. An early design change to wire contact relays dealt with that.

|

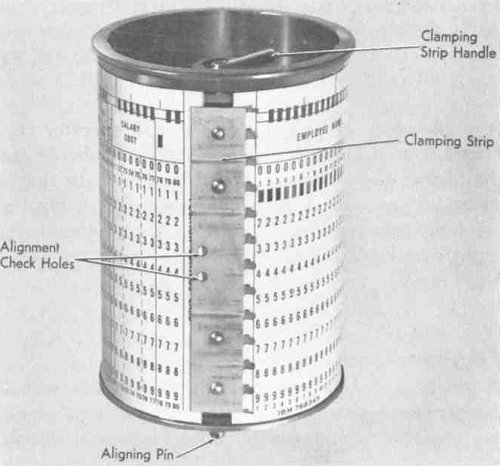

Program Drum |

Typewriters were generally equipped with a rack into which pegs could be inserted. Use of the Tab key would advance the carriage to the next peg position. The 026/029 had a similar mechanism, but instead of a rack, a card upon which encoded instructions were punched was employed. The card was wrapped round a drum which advanced in sync with the card being punched. Shift setting could also be automated as could copying from the previous card. The “program” was prepared by the user on the machine it was intended to control so the scheme appeared delightfully self-referential.

Equally ingenious was the printing mechanism. As each column was punched, the character was printed, dot matrix-style. A 5×7 matrix was employed with up to 35 wires being pressed down onto an ink ribbon (and thence to the card) simultaneously. At the other end of the wires was a postage stamp sized “code plate” with 35×64 (or 35×48) minute pillars protruding from it. Think of an array of tiny, tiny Lego bricks. By milling off most of the pillars, a mechanical, read-only binary store could be manufactured.

A Code Plate from an 026

As a character was punched, the plate moved to one of 64 positions and then pushed down. Where the pillar was still in situ, the wire would be forced down and a dot printed. Or not, as the case may be. Of course, this mechanism required considerable precision in manufacture and setting up, but then any card punch does, so it was well within IBM’s capability.
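For readers who think more readily in software, the code plate can be modelled as a small read-only table with one 35-bit entry per character position. The sketch below is purely illustrative: the two glyph patterns and the function names are invented for the example and are not taken from any real IBM code plate.

```python
ROWS, COLS = 7, 5  # a 5x7 dot matrix, i.e. 35 print wires

# Hypothetical glyphs: '#' marks a pillar left standing, '.' one milled off.
GLYPHS = {
    "T": ["#####", "..#..", "..#..", "..#..", "..#..", "..#..", "..#.."],
    "L": ["#....", "#....", "#....", "#....", "#....", "#....", "#####"],
}

def make_plate(glyphs):
    """Encode each glyph as a 35-bit integer, one entry per plate position."""
    plate = {}
    for char, rows in glyphs.items():
        bits = 0
        for r, row in enumerate(rows):
            for c, cell in enumerate(row):
                if cell == "#":
                    bits |= 1 << (r * COLS + c)
        plate[char] = bits
    return plate

def print_dots(plate, char):
    """Select the plate position for char and return the dots it would print."""
    bits = plate[char]
    return [
        "".join("#" if (bits >> (r * COLS + c)) & 1 else "." for c in range(COLS))
        for r in range(ROWS)
    ]

PLATE = make_plate(GLYPHS)
```

Calling `print_dots(PLATE, "T")` gives back the seven rows of the letter. Fitting a different plate amounts to calling `make_plate` with a different set of patterns.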

We started by observing that the 029 could be found almost anywhere. IBM installations had them, but so did many others. One of the headaches of those days was that each manufacturer used a different card code. Often a manufacturer used different codes for different ranges of computer. Yet the 029 supported them all. How? Here, I fear, we must enter the realms of speculation. But it is at least informed speculation.

Try as I may, I can find no provision for varying the mapping from keys to hole arrangement. But, since the hole combinations were the same from one code to another - only the meanings varied - swapping the keytops cured half the problem. Which leaves the printing mechanism to be dealt with. But the printing was controlled by the code plate, so fitting a different code plate with a different binary pattern recorded upon it, changes the meaning of each key. You have to admit that’s clever.

The 029 was produced all through the era when rapid growth in the spread of batch data processing was taking place. In the 1970s key-to-tape and key-to-disc equipment began to displace the punch card as the primary data preparation medium, and by 1980, on-line applications had obviated the need for an intermediate stage between the end user and the computer altogether. Legacy systems kept the punched card going for a few more years, but the 029 was withdrawn in 1987. Was it ever bettered? This writer remembers being seriously impressed by the Decision Data 8010. The IBM 129 had a memory buffer so that the card was punched only after the operator signalled its completion, allowing errors to be corrected. But was it really a good idea to further develop the card punch in the 1970s? With hindsight, perhaps not.

How many 029s were made? Nobody seems to know. But we think it runs to hundreds of thousands. Ubiquitous? Oh yes!

I am grateful for the help of Martin Campbell-Kelly, Rod Brown and the IBM Archive in the preparation of this article. The latter’s website at www-03.ibm.com/ibm/history is a useful resource.


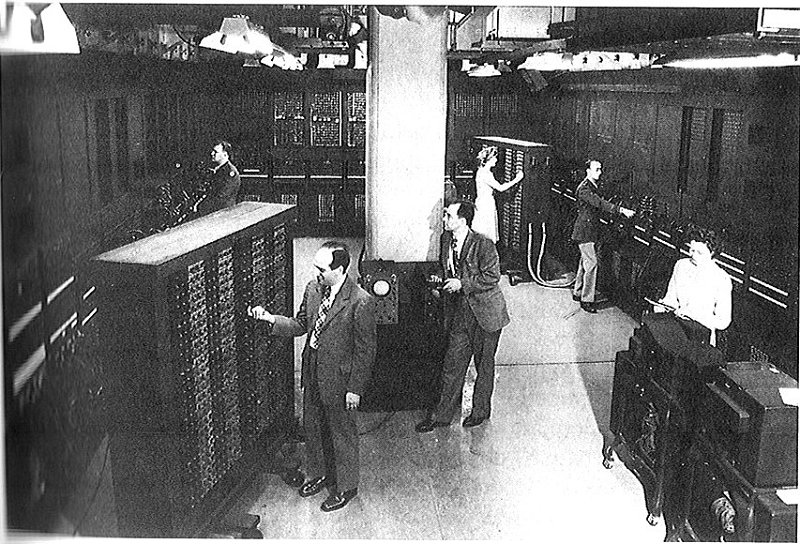

The notion of the first stored program computer is, as has often been observed, largely a matter of definition. Some would even argue that it is not even an interesting question. Nevertheless, it is often debated amongst the cognoscenti. Here Crispin Rope puts forward the claim of ENIAC, usually dismissed as “not stored program”. Readers will, no doubt, take their own view.

ENIAC (Electronic Numerical Integrator and Computer) was unveiled to the public on 14th February 1946 at the Moore School of Electrical Engineering in the University of Pennsylvania. As far as the public - or those who were interested - was concerned this was the beginning of the computer age. Scientific circles in the UK learnt more about it, the work it did, and the difficulties of programming, when Douglas Hartree’s inaugural lecture describing it was published shortly after its delivery in October 1946.

Hartree was a great expert in computation and numerical analysis and was asked to visit and use ENIAC by the US military authorities. He thus became the first person outside the Moore School and US state personnel to program ENIAC.

So far so good, but a consideration of later developments of ENIAC and just how important these were in the early history of the electronic digital stored program computer raises more controversial questions.

What most people know about ENIAC is that it weighed 30 tons and contained 18,000 thermionic valves. Also that programming it involved endless unplugging of cables and then plugging them into somewhere else among the many individual units that constituted the machine. It didn’t have a single stored program: rather this was distributed between the various units which constituted ENIAC. Furthermore, it was specifically designed to solve one type of problem - the type of partial differential equation involved in calculating the trajectory of shells. It was for this purpose that the US Army at the Aberdeen Proving Ground in Maryland had commissioned and paid for it. With this background, it did not actually matter that ENIAC took a long time to be set up for a particular type of problem. In 1943, when the design and construction of the machine began, this was all that the US military wanted. But by late 1945, when ENIAC was complete, this need was considerably less. In fact, ENIAC first operated in November 1945 and the first significant problem for which it was used involved calculations required for thermonuclear weapons (the hydrogen bomb).

ENIAC proved remarkably reliable, despite its 18,000 tubes, many other components and complex structure. It was an incredible engineering achievement. Mauchly’s understanding of the concept of the machine was wonderful but perhaps overshadowed by Eckert’s great engineering skills and concentration on getting every detail right from an engineering point of view.

What was the capacity of ENIAC? It could perform all the basic operations of addition, subtraction, multiplication, division and square rooting. But there was also a vital addition - the conditional jump. This altered the course of the program according to whether the contents of a particular store were either negative or were positive/zero. Basically the ENIAC could perform calculations perhaps some 5,000 times faster than any other means available at the time. And it was considerably more accurate and reliable.

Storage was a problem and consequently limited. Read/write storage was basically constructed of pairs of triodes so connected that only one of them could be conducting at any one time. ENIAC had just 20 stores with read/write capability for the programmer to use. Each one was of 10 decimals plus sign.

|

Mauchly is shown in the foreground. Eckert is |

But there was also a read-only store comprised in three function tables, each containing 104 12 digit decimals plus two signs (nothing was simple on ENIAC - they were numbered from minus two to 101!). The values in these stores were set by physical switches.

Input and output to ENIAC were via a relatively fast 80-column punched card reader and punch. Cards were read into 80 places of the constant transmitter store with another 20 places of this being set via hand switches. Large scale intermediate results could be punched out and the cards read in again when required. Output cards could rapidly be printed off line when required.

This description of ENIAC - minimal as it is - is necessary to understand what happened in 1947 and early 1948.

Firstly, various people suggested that ENIAC could be converted to having a program in the modern sense, with the instructions being stored in the function tables (“converter code”). Mauchly and Eckert claimed to have had this possibility in mind from the beginning. Von Neumann certainly suggested it and this was followed by a more general attempt to try out such an improved configuration.

In its original mode of operation ENIAC was relatively fast and its multiplication time was actually faster than that of EDSAC or the Manchester Mark I, but not Pilot ACE. Using the converter code was significantly slower because the machine lost most of its original ability for parallel operation. For this reason ENIAC in its original form was the better suited for its original work of calculating firing tables.

Secondly, work on the hydrogen bomb became more urgent. As Stanislaw Ulam described the problem:-

“At each stage of the process, there are many possibilities determining the fate of the neutron. It can scatter at one angle, change its velocity, be absorbed, or produce more neutrons by a fission of the target nucleus, and so on. The elementary probabilities for each of these possibilities are individually known, to some extent, from the knowledge of the cross sections. But the problem is to know what a succession and branching of perhaps hundreds of thousands or millions will do. One can write differential equations or integral differential equations for the expected values, but to solve them or even to get an approximative idea of the properties of the solution, is an entirely different matter.

“The idea was to try out thousands of such possibilities and, at each stage, to select by chance, by means of a random number with suitable probability, the fate or kind of event, to follow it in a line, so to speak, instead of considering all branches. After examining the possible histories of only a few thousand, one will have a good sample and an approximate answer to the problem. All one needed was to have the means of producing such sample histories. It so happened that computing machines were coming into existence, and here was something suitable for machine calculation.”
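Ulam’s description translates quite directly into a modern sketch. In the version below the three possible fates and their probabilities are invented for illustration (the real values came from measured cross sections), and the function names are likewise assumptions. Each history follows a single line of events chosen by random number, and many histories are averaged.

```python
import random

# Hypothetical event probabilities, chosen only so that they sum to one.
FATES = [("scatter", 0.6), ("absorb", 0.3), ("fission", 0.1)]

def choose_fate(u):
    """Map a random number u in [0, 1) to the fate of the neutron."""
    cumulative = 0.0
    for name, probability in FATES:
        cumulative += probability
        if u < cumulative:
            return name
    return FATES[-1][0]  # guard against floating-point rounding

def follow_history(rng, max_steps=1000):
    """Follow one neutron along a single line of events until it stops scattering."""
    events = []
    for _ in range(max_steps):
        fate = choose_fate(rng.random())
        events.append(fate)
        if fate != "scatter":
            break
    return events

def estimate_fission_fraction(n_histories, seed=1):
    """Estimate the fraction of histories that end in fission."""
    rng = random.Random(seed)
    fissions = sum(
        follow_history(rng)[-1] == "fission" for _ in range(n_histories)
    )
    return fissions / n_histories
```

With these made-up probabilities the exact answer is 0.1 / (0.3 + 0.1) = 0.25, and a few thousand sample histories already come close to it, which is the point Ulam was making.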

Von Neumann worked on the detail of the program and on 11th March 1947 sent a tentative computing sheet to Robert Richtmyer. He wrote:-

“I cannot assert this with certainty yet, but it seems to me likely that the instructions given on the computing sheet do not exceed the logical capacity of the ENIAC.”

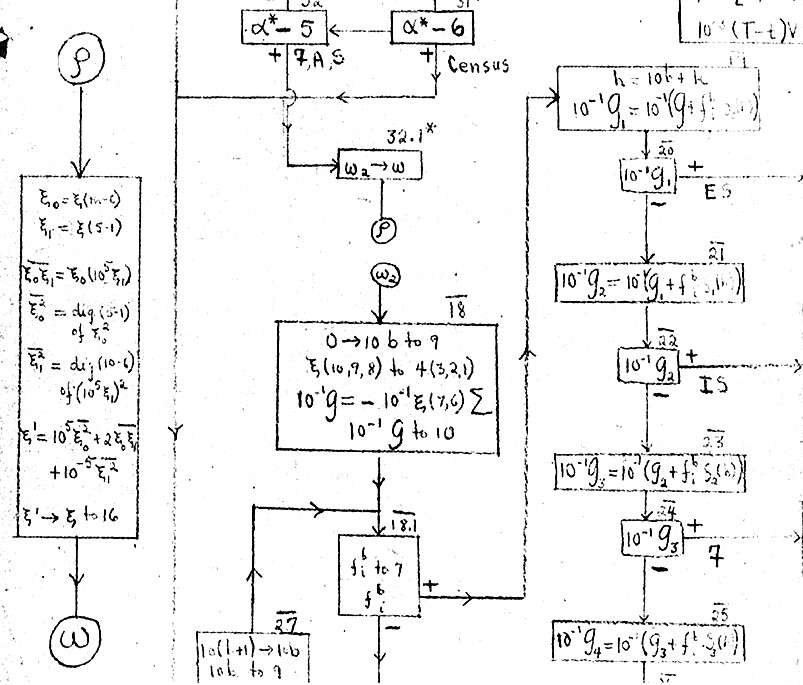

The situation was moved on and in December 1947 a draft flow diagram of the problem appears to have been prepared. About 10% of this is illustrated below. The subroutine is shown on the left.

|

In early 1948 the two streams of requirement for the work and ability to convert ENIAC to operate in stored program mode came together when Nick Metropolis from Los Alamos visited ENIAC. He wrote:-

“... on a visit to the ENIAC in early 1948, in preparation for its use after its move from Philadelphia, I was briefed by Homer Spence, the quiet but effective chief engineer, about some of their plans. He mentioned the construction of a new panel to augment one of the logical operations. It was a one-input-hundred-output matrix. It occurred to me that if this could be used to interpret the instruction pairs in the proposed control mode, then it would release a sufficiently large portion of the available control units to realize the new mode - perhaps. When I mentioned this to von Neumann, he asked whether I would be willing to take over the project, since Adele [Goldstine] had lost interest; so with the help of Klari von Neumann, plans were revised and completed and we undertook to implement them on the ENIAC, and in a fortnight this was achieved. Our set of problems - the first Monte Carlo - were run in the new mode.”

But when was this work actually undertaken? The clue to this is contained in a letter from von Neumann to Ulam dated 11th May 1948. After referring to some family matters, von Neumann wrote:-

“Nick [Metropolis] and Klari [von Neumann’s wife] finished at Aberdeen. It took 32 days (including Sundays) to put the new control system on the ENIAC, check it and the problem’s code, and getting the ENIAC into shape. The latter was probably ? of the time. Then the ENIAC ran for 10 days. It was producing 50% of these 10 x 16 hours, this includes two Sundays and all troubles. It could have probably continued on this basis as long as we wished. If a new problem was put on, the 32 days break-in period should contract to two to six days. It did 160 cycles (censuses, 100 input cards each) on seven problems.”

So the work was clearly complete by early May.

It must be emphasised that the spring date for ENIAC first being used with converter code seems only to have been recognised quite recently. Previously an autumn date was generally accepted. This followed Goldstine who, in his 1993 book, The Computer from Pascal to von Neumann, stated that the first use was on 16th September 1948. But now the evidence for a March/April date seems overwhelming.

Goldstine had been the representative of the US Military in the building of ENIAC. By 1948 he had moved to the Institute for Advanced Study at Princeton. So it is difficult to know how he could have made the error of giving an autumn date for first use. But the work done in spring 1948 was classified for many years and it is entirely possible that Goldstine did not know of it. Also the autumn 1948 use was with a 100-order set. In the spring only a more limited 60-order set was used, and it is likely that the autumn use achieved some sort of publicity in conjunction with the introduction of the new order set.

Following its conversion ENIAC had a stored program capacity of up to 1,800 instructions (each required two digits) or in practice 1,200, leaving 104 stores available for fixed data (208 if five digits gave sufficient accuracy).
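These figures can be checked against the function table dimensions given earlier (three tables, each of 104 rows of 12 digits). The short calculation below is a sketch only. In particular, the split of two tables for instructions and one for data is an assumption that happens to fit the quoted numbers, not something the sources state.

```python
FUNCTION_TABLES = 3
ROWS_PER_TABLE = 104
DIGITS_PER_ROW = 12
DIGITS_PER_INSTRUCTION = 2

def instruction_slots(rows):
    """Number of two-digit instructions that fit in the given number of rows."""
    return rows * DIGITS_PER_ROW // DIGITS_PER_INSTRUCTION

all_rows = FUNCTION_TABLES * ROWS_PER_TABLE   # 312 rows in total
program_rows = 2 * ROWS_PER_TABLE             # assumption: two tables hold the program
data_rows = all_rows - program_rows           # the remaining table holds fixed data

print(instruction_slots(all_rows))      # every row used for instructions
print(instruction_slots(program_rows))  # two tables used for instructions
print(data_rows, data_rows * 2)         # data stores, or twice as many half-length values
```

The raw totals come out slightly above the rounded figures of 1,800 and 1,200 quoted above, while the 104 and 208 data stores match exactly.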

These instructions were, however, in a read-only store, and so could not individually be changed at run time. It may be argued that a program set up by hand switches was not like one read in from cards. But program sheets were used for ENIAC. These were then used in turn to set the hand switches. Is there really any difference between punching cards as against setting hand switches when each is done from a program sheet?

But, much more importantly, instructions could not be changed by the program at run time. So how did the capability of ENIAC compare with that of a “proper” stored program machine? The straightforward answer is that it made relatively little difference for most normal problems. As it was expressed in a somewhat later (1949) description of ENIAC programming in converter code:-

“The conditional transfer order gives ENIAC its power, since most computations involve more or less simple inductions which in turn depend upon decisions based on the sign of a number. This order enables the ENIAC to perform iterative processes such as stepwise integration and successive approximation.”

It is worth setting down some detail of the ENIAC conditional transfer order, since this also illustrates the relative complexity of programming ENIAC. Since there was an Aberdeen set and a Princeton set of orders (each of 60), the exact form of the order used in the 60-order code is not completely clear. So that taken from the 100-order code is used for illustration. The detailed effect of the conditional transfer was:-

This allows the program to discriminate on the sign of a number which has been calculated.

The operand store of ENIAC was tiny so array processing was of limited importance. There was no means of address modification moreover, which made an indexing loop as we now know it impossible. Nevertheless a crude form was possible by means of replicating instruction sequences in the relatively large instruction store, each replication addressing different store locations.
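The two techniques just described, iteration by conditional transfer and “indexing” by replicated instruction sequences, can be illustrated with a toy interpreter. The order names below are invented for the example and bear no relation to the actual 60-order or 100-order codes. The program is held in an immutable tuple to stand in for the read-only function tables, while the accumulators are an ordinary list.

```python
def run(program, acc, max_steps=10_000):
    """Execute a read-only program against read/write accumulators."""
    pc = 0
    for _ in range(max_steps):
        op, *args = program[pc]
        pc += 1
        if op == "add":                 # acc[dst] += acc[src]
            dst, src = args
            acc[dst] += acc[src]
        elif op == "add_const":         # acc[dst] += constant
            dst, value = args
            acc[dst] += value
        elif op == "jump_if_nonneg":    # conditional transfer on the sign
            reg, target = args
            if acc[reg] >= 0:
                pc = target
        elif op == "halt":
            return acc
    raise RuntimeError("step limit exceeded")

# Iteration: add 3 to accumulator 0 while the counter in accumulator 1 is >= 0.
LOOP = (
    ("add_const", 0, 3),
    ("add_const", 1, -1),
    ("jump_if_nonneg", 1, 0),
    ("halt",),
)

def unrolled_sum(total, sources):
    """No address modification: replicate the order once per store location."""
    return tuple(("add", total, src) for src in sources) + (("halt",),)
```

Here `run(LOOP, [0, 4])` repeats the first order five times using nothing but the sign test, and `run(unrolled_sum(0, [1, 2, 3]), [0, 10, 20, 30])` sums three stores with three copies of the same order. Neither program is altered while it runs.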

Of course all this seems extremely clumsy as compared to what can be achieved when instructions can be modified or changed at run time. But the point is that ENIAC did have very significant capability and versatility within its storage limitations.

But what in principle is the difference between a machine that can change the course of a program at run time and one that can both do this and change or modify existing instructions? The author is not competent to say, but this is surely an interesting question and needs to be addressed if this has not already been done.

Turning to the actual work done on ENIAC in March/early May 1948, the information is comprised in a description contained in the von Neumann archive in the Library of Congress. This manuscript is not attributed and is undated. But evidence from a note in the manuscript made by von Neumann indicates that it was written by Klari von Neumann.

From the context it is certainly a description of the spring 1948 ENIAC work, since it begins:-

“III Actual Technique

The use of the Eniac

Before describing the details of the actual running of the first experimental problems based on the Monte-Carlo method, we would like to discuss here briefly the new and seemingly, more efficient method of operation which was used for the first time on the ENIAC.”

Some of the complexities are brought out in a further brief extract:-

“... If the card represented a fission neutron, the value of r was stored as read of [sic] the card, but a and v were chosen by certain random processes using a random number equidistributed between 0 and 1 to find a, and a random velocity within the fission spectrum for v (for explanation of the method used to obtain the random digits used throughout the problem, see page ... [sic]). The thus computed a and v values were then stored as in the census case. After this the fission and census neutrons path merge and t and i are stored in their assigned accumulators.

Next the number λ* is obtained by another random process: it is the (negative) logarithm of a random number which is equidistributed between 0 and 1. λ* is used to determine the place (distance) of the next collision: in this aspect it corresponds to the product of that distance with the total collision cross sections in the zone.

... We then found the material or materials with their appropriate densities, as present in the zone; fbi the density of material b in zone i. the total collision cross-reaction of the material present at the previously determined value r was then formed, ---- multiplied by fbi the material density of the zone.”
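The step involving λ* is what would now be called sampling from an exponential distribution. A minimal sketch follows, assuming a single zone with one total cross section. The function names, and the use of Python’s own generator in place of the ENIAC routine, are choices made for this example only.

```python
import math
import random

def sample_lambda_star(u):
    """Negative logarithm of a random number u equidistributed in (0, 1]."""
    return -math.log(u)

def distance_to_collision(lambda_star, total_cross_section):
    """lambda* is the product of distance and cross section, so divide it out."""
    return lambda_star / total_cross_section

def next_collision_distance(rng, total_cross_section):
    """Draw one distance to the next collision within a single uniform zone."""
    u = 1.0 - rng.random()  # shifts [0, 1) to (0, 1] so the logarithm is defined
    return distance_to_collision(sample_lambda_star(u), total_cross_section)
```

Averaged over many draws, λ* comes out close to one, so the mean distance between collisions is close to the reciprocal of the total cross section.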

In addition to the use of an internally stored program, the spring 1948 ENIAC work contained three other firsts.

It required a routine to calculate random numbers. This is explained by Klari (the von Neumann middle square digits method):-

“An eight digit number was squared and the middle eight digits used as the new random numbers. This refresh process was repeated at each point where all or most of the eight previously stored random digits were already exhausted, i.e. used for some part in the computation.”
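The method Klari describes takes only a few lines in a modern language. The sketch below treats the square as a 16-digit number, padded with leading zeros where necessary, and keeps digits five to twelve. The function name and the error check are additions for this example.

```python
def middle_square(x):
    """Square an eight-digit number and return the middle eight digits."""
    if not 0 <= x < 10 ** 8:
        raise ValueError("expected a number of at most eight digits")
    square = x * x                      # at most 16 digits
    return (square // 10 ** 4) % 10 ** 8  # drop the last four, keep the next eight

def random_digit_blocks(seed, count):
    """Produce successive eight-digit blocks, each the 'refresh' of the last."""
    blocks = []
    x = seed
    for _ in range(count):
        x = middle_square(x)
        blocks.append(x)
    return blocks
```

The method is well known to degenerate for some starting values (a seed of zero stays at zero, for instance), so it is shown here for historical interest rather than as a recommendation.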

This routine was incorporated into a subroutine - a second first. Thirdly, the computation was a simulation one - the predecessor to so many others over the decades. Though not a first (this was Colossus) the program was one of substantial importance to national needs.

So much for the idea of what the program did, and when it was run, but does the date really matter?

It can be argued that there is a sense in which it does. This is because the Manchester Baby, the first computer to run a program contained in a read/write store, first operated on 21st June 1948. So a spring date for the ENIAC work pre-dates this, while a September one does not.

Even then, does it matter? ENIAC did not have a “true” stored program in the sense in which this has come to seem so vital. The argument for some level of importance may be illustrated by a comparison of the two machines:-

| | Manchester Baby | ENIAC |
| --- | --- | --- |
| Date | 21st June 1948 | April/May 1948 |
| Program in read/write store | Yes | No |
| Program can be changed without making mechanical or electro-mechanical changes (criterion used in Williams and Kilburn first announcement) | Yes | Yes |
| Maximum size of program | 31 instructions | 1,200+ instructions |
| Input/output | Limited to hand operation | Punched cards |
| Substantial useful work | No | Yes |
| Specific features introduced | | Use of backing storage. Subroutine. Simulation program. Random number generator. Work of national importance. |
| If machine existed now, could it do any work of use | No | Yes |

Yes, ENIAC did not have a program where it was possible to change a particular instruction at run time. But how far did that reduce its ability to be seen as one of the important early steps in setting the stored program electronic digital computer on its way? How far could it have progressed without any additional facility?

In fact it would not have been too difficult to convert the ENIAC to give it the ability to change instructions at run time. This could have been done by use of an existing accumulator or better the introduction of an additional one. Then, instead of instructions being taken direct from the function tables, they would first have been added to the contents of this accumulator. However this facility almost certainly did not seem a priority.

It is pleasant to note that the distribution list for the 1949 ENIAC manual contains 21 organisations in government and major US universities. The first entry is The Chief of Ordnance, Washington (two copies). But the second is:

“British - send to ORDBT for distribution (of interest to Prof Hartree, Cambridge University) 5 copies”

Would it perhaps be appropriate for the British to consider reciprocating by accepting ENIAC, along with the Manchester Baby, EDSAC, the Mark 1 and Pilot ACE, as an important part of the early computer story rather than being somewhat dismissed as having achieved little or nothing compared to these?

And ENIAC’s achievement was not just one of time priority. The work it did was itself at this time an important accomplishment and even more so for Klari, who had no education beyond High School.

Finally there are many questions to be answered. These include the precise instruction set used in spring 1948, as well as the coding and the results. How far were these results useful? How close are the relationships between the von Neumann tentative coding sheet of spring 1947, the December 1947 flow chart and the program actually run in spring 1948? Insofar as this information is still in a closed store at Los Alamos, can it be released as of historical interest? Finally, what are the theoretical limits of a computer with conditional branching as compared to one with this and the ability to change or modify individual instructions at run time? The Elgot and Robinson paper (1964) would seem only to deal with the question on the most theoretical level.

The author is aware of some limited funds which could be available to assist work in elucidating the answers to these questions. If they could be answered it would put the ENIAC into the same position as the Manchester Baby and EDSAC where first programs have been well researched.

Can it be right for this great achievement of ENIAC in stored program mode to remain hidden and unexplained?

Editor’s note: Crispin Rope remembers purchasing as a schoolboy the published version of Hartree’s inaugural lecture on the ENIAC in 1947. Later he was fortunate to be taught by both Hartree and Wilkes. His first experience of programming was on DEUCE (in binary backwards), hence his interest in ENIAC as well as in early British computers. His account of the ENIAC work in spring 1948 was published in the Annals of the History of Computing (29:4, 2007); this includes sources of his evidence for arguing that ENIAC’s first stored program operation was in spring rather than autumn of 1948. Crispin Rope can be contacted at Westerfield@btconnect.com. The author is particularly grateful to the editor for his great help in improving the text.


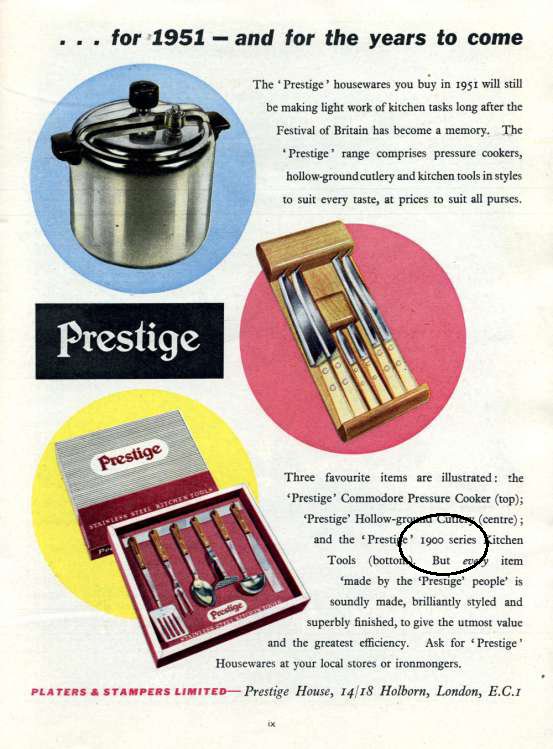

From the Festival of Britain Guide

|

Earlier than we thought perhaps?


| 17 Mar 2011 | Earthquake or Explosion - Computers in Seismology applied to Test-Ban Verification | Alan Douglas |
| 21 Apr 2011 | The story of Elliott-Automation computers, 1946 - 1986 | Simon Lavington |
| 19 May 2011 | Air Traffic Control systems | John Smith & others of TNMoC |
| 15 Sep 2011 | Centering the Computer in the Business of Banking: Barclays 1954-1974 | Ian Martin |
| 20 Oct 2011 | A Tribute to Sir Maurice Wilkes | David Hartley |
| 17 Nov 2011 | tbs | |
| 15 Dec 2011 | Historic Computer Films | CCS Panel |

London meetings take place in the Fellows’ Library of the Science Museum, starting at 14:30. The entrance is in Exhibition Road, next to the exit from the tunnel from South Kensington Station, on the left as you come up the steps. For queries about London meetings please contact Roger Johnson at r.johnson@bcs.org.uk, or by post to Roger at Birkbeck College, Malet Street, London WC1E 7HX.

| 15 Mar 2011 | The Evolution of Radar Systems, Computers and Software | Frank Barker |
| 20 Sep 2011 | System Architecture | John Eaton & Roger Poole |

North West Group meetings take place in the Conference Centre at MOSI - the Museum of Science and Industry in Manchester - usually starting at 17:30; tea is served from 17:00. For queries about Manchester meetings please contact Gordon Adshead at gordon@adshead.com.

Details are subject to change. Members wishing to attend any meeting are advised to check the events page on the Society website at www.computerconservationsociety.org for final details. Details will usually also be published on the BCS website (in the BCS events calendar) and in the Events Diary columns of Computing and Computer Weekly.

MOSI : Demonstrations of the replica Small-Scale Experimental Machine at the Museum of Science and Industry in Manchester are run each Tuesday between 12:00 and 14:00.

Bletchley Park : daily. Guided tours and exhibitions, price £10.00, or £8.00 for concessions (children under 12, free). Exhibition of wartime code-breaking equipment and procedures, including the replica Bombe and replica Colossus, plus tours of the wartime buildings. Go to www.bletchleypark.org.uk to check details of times and special events.

The National Museum of Computing : Thursdays and Saturdays from 13:00. Entry to the Museum is included in the admission price for Bletchley Park. The Museum covers the development of computing from the wartime Colossus computer to the present day and from ICL mainframes to hand-held computers. See www.tnmoc.org for more details.

Science Museum : Pegasus “in steam” days have been suspended for the time being. Please refer to the society website for updates.

North West Group contact details

Chairman Tom Hinchliffe: Tel: 01663 765040.

CCS Web Site Information

The Society has its own Web site, which is located at ccs.bcs.org. It contains news items, details of forthcoming events, and also electronic copies of all past issues of Resurrection, in both HTML and PDF formats, which can be downloaded for printing. We also have an FTP site at ftp.cs.man.ac.uk/pub/CCS-Archive, where there is other material for downloading including simulators for historic machines. Please note that the latter URL is case-sensitive.


| Chairman Dr David Hartley FBCS CEng | david.hartley@clare.cam.ac.uk |

| Secretary Kevin Murrell | kevin.murrell@tnmoc.org |

| Treasurer Dan Hayton | daniel@newcomen.demon.co.uk |

| Chairman, North West Group Tom Hinchliffe | tom.h@dsl.pipex.com |

| Secretary, North West Group Gordon Adshead | gordon@adshead.com |

| Editor, Resurrection Dik Leatherdale MBCS | dik@leatherdale.net |

| Web Site Editor Alan Thomson | alan.thomson@bcs.org |

| Archivist Hamish Carmichael FBCS | hamishc@globalnet.co.uk |

| Meetings Secretary Dr Roger Johnson FBCS | r.johnson@bcs.org.uk |

| Digital Archivist Professor Simon Lavington FBCS FIEE CEng | lavis@essex.ac.uk |

Museum Representatives | |

| Science Museum Dr Tilly Blyth | tilly.blyth@nmsi.ac.uk |

| MOSI Catherine Rushmore | c.rushmore@mosi.org.uk |

| Codes and Ciphers Heritage Trust Pete Chilvers | pete@pchilvers.plus.com |

Project Leaders | |

| Colossus Tony Sale Hon FBCS | tsale@qufaro.demon.co.uk |

| Elliott 401 & SSEM Chris Burton CEng FIEE FBCS | cpb@envex.demon.co.uk |

| Bombe John Harper Hon FBCS CEng MIEE | bombe@jharper.demon.co.uk |

| Elliott 803 Peter Onion | peter.onion@btinternet.com |

| Ferranti Pegasus Len Hewitt MBCS | leonard.hewitt@ntlworld.com |

| Software Conservation Dr Dave Holdsworth CEng Hon FBCS | ecldh@leeds.ac.uk |

| ICT 1301 Rod Brown | sayhi-torod@shedlandz.co.uk |

| Harwell Dekatron Computer Tony Frazer | tony.frazer@tnmoc.org |

| Our Computer Heritage Simon Lavington FBCS FIEE CEng | lavis@essex.ac.uk |

| DEC Kevin Murrell | kevin.murrell@tnmoc.org |

| Differential Analyser Dr Charles Lindsey | chl@clerew.man.ac.uk |

| ICL 2966 Delwyn Holroyd | delwyn@dsl.pipex.com |

| EDSAC TBA | |

Others | |

| Professor Martin Campbell-Kelly | m.campbell-kelly@warwick.ac.uk |

| Peter Holland | p.holland@talktalk.net |

| Dr Doron Swade CEng FBCS MBE | doron.swade@blueyonder.co.uk |

Readers who have general queries to put to the Society should address them to the Secretary: contact details are given elsewhere. Members who move house should notify Kevin Murrell of their new address to ensure that they continue to receive copies of Resurrection. Those who are also members of the BCS should note that the CCS membership is different from the BCS list and is therefore maintained separately.


The Computer Conservation Society (CCS) is a co-operative venture between the British Computer Society, the Science Museum of London and the Museum of Science and Industry (MOSI) in Manchester.

The CCS was constituted in September 1989 as a Specialist Group of the British Computer Society (BCS). It thus is covered by the Royal Charter and charitable status of the BCS.

The aims of the CCS are to

Membership is open to anyone interested in computer conservation and the history of computing.

The CCS is funded and supported by voluntary subscriptions from members, a grant from the BCS, fees from corporate membership, donations, and by the free use of the facilities of both museums. Some charges may be made for publications and attendance at seminars and conferences.

There are a number of active Projects on specific computer restorations and early computer technologies and software. Younger people are especially encouraged to take part in order to achieve skills transfer.
